We are the last.

The last generation to be unaugmented.

The last generation to be intellectually alone.

The last generation to be limited by our bodies.

We are the first.

The first generation to be augmented.

The first generation to be intellectually together.

The first generation to be limited only by our imaginations.

We stand both before and after, balancing on the razor edge of the Event

Horizon of the Singularity. That this sublime juxtapositional tautology

has gone unnoticed until now is itself remarkable.

We're so exquisitely privileged to be living in this time, to be born

right on the precipice of the greatest paradigm shift in human history.

The only thing that approaches the importance of that reality is finding

like minds that realize the same, and being able to make some connection

with them.

If these books have influenced you the same way that they have us, we

invite your contact at the email addresses listed below.

Enjoy,

Michael Beight, piman_314@yahoo.com

Steven Reddell, cronyx@gmail.com

Here are some new links that we’ve found interesting:

KurzweilAI.net

News articles, essays, and discussion on the latest topics in

technology and accelerating intelligence.

SingInst.org

The Singularity Institute for Artificial Intelligence: A

think tank devoted to increasing Humanity’s odds of

experiencing a safe, beneficial Singularity. Many

interesting articles on such topics as Friendly AI and

Existential Risks.

SingInst.org/Media

Videos, audio, and PowerPoints from the Singularity Summits;

and videos about SIAI’s purpose.

blinkx.com/videos/kurzweil

Videos on the internet in which the word “Kurzweil” is

spoken. Great new resource!

[Inside Jacket]:

U.S. $25.95

CANADA $36.99

Imagine a world where the difference between man and machine blurs, where the difference between humanity and technology fades, where the soul and the silicon chip unite. This is not science fiction. This is the twenty-first century according to Ray Kurzweil, the “restless genius” (Wall Street Journal) and inventor of the most innovative and compelling technology of our era. In The Age of Spiritual Machines, the brains behind the Kurzweil Reading Machine, the Kurzweil Synthesizer, advanced speech recognition, and other technologies devises a framework for envisioning the next century. In his inspiring hands, life in the new millennium no longer seems daunting. Instead, Kurzweil’s twenty-first century promises to be an age in which the marriage of human sensitivity and artificial intelligence fundamentally alters and improves the way we live.

The Age of Spiritual Machines is no mere list of predictions but a prophetic blueprint for the future. Kurzweil guides us through the inexorable advances that will result in computers exceeding the memory capacity and computational ability of the human brain. According to Kurzweil, machines will achieve all this by 2020, with human attributes not far behind. We will begin to have relationships with automated personalities and use them as teachers, companions, and lovers. A mere ten years later, information will be fed straight into our brains along direct neural pathways; computers, for their part, will have read all the world’s literature. The distinction between us and computers will have become sufficiently blurred that when the machines claim to be conscious, we will believe them.

In The Age of Spiritual Machines, the “ultimate thinking machine” (Forbes) forges the ultimate road to the next century.

Ray Kurzweil is the author of The Age of Intelligent Machines, which won the Association of American Publishers’ Award for The Most Outstanding Computer Science Book of 1990. He was awarded the Dickson Prize, Carnegie Mellon’s top science prize, in 1994. The Massachusetts Institute of Technology named him the Inventor of the Year in 1988. He is the recipient of nine honorary doctorates and honors from two U.S. presidents. Kurzweil lives in a suburb of Boston.

JACKET DESIGN BY DAVID J. HIGH

AUTHOR PHOTOGRAPH BY JERRY BAUER

A Member of Penguin Putnam Inc

375 Hudson Street, New York, N.Y. 10014

http://www.penguinputnam.com

Printed in U.S.A.

THE AGE OF

SPIRITUAL

MACHINES

ALSO BY RAY KURZWEIL

The 10% Solution for a Healthy Life

The Age of Intelligent Machines

THE AGE OF

SPIRITUAL

MACHINES

WHEN COMPUTERS EXCEED HUMAN INTELLIGENCE

RAY KURZWEIL

VIKING

Published by the Penguin Group

Penguin Putnam Inc., 375 Hudson Street,

New York, New York 10014, U.S.A.

Penguin Books Ltd, 27 Wrights Lane, London W8 5TZ, England

Penguin Books Australia Ltd., Ringwood, Victoria, Australia

Penguin Books Canada Ltd., 10 Alcorn Avenue,

Toronto, Ontario, Canada M4V 3B2

Penguin Books (N.Z.) Ltd, 182–190 Wairau Road,

Auckland 10, New Zealand

Penguin India, 210 Chiranjiv Tower, 43 Nehru Place,

New Delhi 11009, India

Penguin Books Ltd, Registered Offices:

Harmondsworth, Middlesex, England

First Published in 1999 by Viking Penguin,

a member of Penguin Books Inc.

1 3 5 7 9 10 8 6 4 2

Copyright © Ray Kurzweil, 1999

All rights reserved

Illustration credits

Pages 24, 26–27, 104, 156: Concept and text by Ray Kurzweil. Illustration by Rose Russo and Robert Brun.

Page 72: © 1977 by Sidney Harris

Pages 167–168: Paintings by Aaron, a computerized robot built and programmed by Harold Cohen.

Photographed by Becky Cohen.

Page 188: Roz Chast © 1998. From The Cartoon Bank. All rights reserved.

Page 194: Danny Shanahan © 1994. From The New Yorker Collection. All rights reserved.

Page 219: Peter Steiner © 1997. From The New Yorker Collection. All rights reserved.

LIBRARY OF CONGRESS CATALOGING IN PUBLICATION DATA

Kurzweil, Ray.

The age of spiritual machines / Ray Kurzweil.

p. cm.

Includes bibliographical references and index.

ISBN 0‐670‐88217‐8

1. Artificial intelligence. 2. Computers. I. Title.

Q335.K88 1999

006.3—dc21 98–38804

This book is printed on acid‐free paper.

Printed in the United States of America

Set in Berkeley Oldstyle

Without limiting the rights under copyright reserved above, no part of this publication may be

reproduced, stored in or introduced into a retrieval system, or transmitted, in any form or by any means

(electronic, mechanical, photocopying, recording or otherwise), without the prior written

permission of both the copyright owner and the above publisher of this book.

A NOTE TO THE READER

As a photon wends its way through an arrangement of glass panes and mirrors, its path remains ambiguous. It essentially takes every possible path available to it (apparently these photons have not read Robert Frost's poem "The Road Not Taken"). This ambiguity remains until observation by a conscious observer forces the particle to decide which path it had taken. Then the uncertainty is resolved—retroactively—and it is as if the selected path had been taken all along.

Like these quantum particles, you—the reader—have choices to make in your path through this book. You can read the chapters as I intended them to be read, in sequential order. Or, after reading the Prologue, you may decide that the future can't wait, and you wish to immediately jump to the chapters in Part III on the twenty‐first century (the table of contents on the next pages offers a description of each chapter). You may then make your way back to the earlier chapters that describe the nature and origin of the trends and forces that will manifest themselves in this coming century. Or, perhaps, your course will remain ambiguous until the end. But when you come to the Epilogue, any remaining ambiguity will be resolved, and it will be as if you had always intended to read the book in the order that you selected.

CONTENTS

A NOTE TO THE READER

ACKNOWLEDGMENTS

PROLOGUE: AN INEXORABLE EMERGENCE

Before the next century is over, human beings will no longer be the most intelligent or capable type of entity

on the planet. Actually, let me take that back. The truth of that last statement depends on how we define

human.

PART ONE: PROBING THE PAST

CHAPTER ONE: THE LAW OF TIME AND CHAOS

For the past forty years, in accordance with Moore's Law, the power of transistor-based computing has been

growing exponentially. But by the year 2020, transistor features will be just a few atoms thick, and Moore's

Law will have run its course. What then? To answer this critical question, we need to understand the

exponential nature of time.

CHAPTER TWO: THE INTELLIGENCE OF THE UNIVERSE

Can an intelligence create another intelligence more intelligent than itself? Are we more intelligent than the

evolutionary process that created us? In turn, will the intelligence that we are creating come to exceed that of

its creator?

CHAPTER THREE: OF MIND AND MACHINES

"I am lonely and bored, please keep me company." If your computer displayed this message on its screen, would that convince you that it is conscious and has feelings? Before you say no too quickly, we need to

consider how such a plaintive message originated.

CHAPTER FOUR: A NEW FORM OF INTELLIGENCE ON EARTH

Intelligence rapidly creates satisfying, sometimes surprising plans that meet an array of constraints. Clearly,

no simple formula can emulate this most powerful of phenomena. Actually, that's wrong. All that is needed to

solve a surprisingly wide range of intelligent problems is exactly this: simple methods combined with heavy

doses of computation, itself a simple process.

CHAPTER FIVE: CONTEXT AND KNOWLEDGE

It is sensible to remember today's insights for tomorrow's challenges. It is not fruitful to rethink every

problem that comes along. This is particularly true for humans, due to the extremely slow speed of our

computing circuitry.

PART TWO: PREPARING THE PRESENT

CHAPTER SIX: BUILDING NEW BRAINS . . .

Evolution has found a way around the computational limitations of neural circuitry. Cleverly, it has created

organisms who in turn invented a computational technology a million times faster than carbon-based

neurons. Ultimately, the computing conducted on extremely slow mammalian neural circuits will be ported to

a far more versatile and speedier electronic (and photonic) equivalent.

CHAPTER SEVEN: . . . AND BODIES

A disembodied mind will quickly get depressed. So what kind of bodies will we provide for our twenty-first-

century machines? Later on, the question will become: What sort of bodies will they provide for themselves?

CHAPTER EIGHT: 1999

If all the computers in 1960 stopped functioning, few people would have noticed. Circa 1999 is another matter.

Although computers still lack a sense of humor, a gift for small talk, and other endearing qualities of human

thought, they are nonetheless mastering an increasingly diverse array of tasks that previously required

human intelligence.

PART THREE: TO FACE THE FUTURE

CHAPTER NINE: 2009

It is now 2009. A $1,000 personal computer can perform about a trillion calculations per second. Computers

are embedded in clothing and jewelry. Most routine business transactions take place between a human and a

virtual personality. Translating telephones are commonly used. Human musicians routinely jam with

cybernetic musicians. The neo-Luddite movement is growing.

CHAPTER TEN: 2019

A $1,000 computing device is now approximately equal to the computational ability of the human brain.

Computers are now largely invisible and are embedded everywhere. Three-dimensional virtual-reality

displays, embedded in glasses and contact lenses, provide the primary interface for communication with other

persons, the Web, and virtual reality. Most interaction with computing is through gestures and two-way

natural-language spoken communication. Realistic all-encompassing visual, auditory, and tactile

environments enable people to do virtually anything with anybody regardless of physical proximity. People

are beginning to have relationships with automated personalities as companions, teachers, caretakers, and

lovers.

CHAPTER ELEVEN: 2029

A $1,000 unit of computation has the computing capacity of approximately one thousand human brains.

Direct neural pathways have been perfected for high-bandwidth connection to the human brain. A range of

neural implants is becoming available to enhance visual and auditory perception and interpretation, memory,

and reasoning. Computers have read all available human- and machine-generated literature and multimedia

material. There is growing discussion about the legal rights of computers and what constitutes being human.

Machines claim to be conscious and these claims are largely accepted.

CHAPTER TWELVE: 2099

There is a strong trend toward a merger of human thinking with the world of machine intelligence that the

human species initially created. There is no longer any clear distinction between humans and computers.

Most conscious entities do not have a permanent physical presence. Machine-based intelligences derived from

extended models of human intelligence claim to be human. Most of these intelligences are not tied to a

specific computational processing unit. The number of software-based humans vastly exceeds those still using

native neuron-cell-based computation. Even among those human intelligences still using carbon-based

neurons, there is ubiquitous use of neural-implant technology that provides enormous augmentation of

human perceptual and cognitive abilities. Humans who do not utilize such implants are unable to

meaningfully participate in dialogues with those who do. Life expectancy is no longer a viable term in relation

to intelligent beings.

EPILOGUE: THE REST OF THE UNIVERSE REVISITED

Intelligent beings consider the fate of the universe.

TIME LINE

HOW TO BUILD AN INTELLIGENT MACHINE IN THREE EASY PARADIGMS

GLOSSARY

NOTES

SUGGESTED READINGS

WEB LINKS

INDEX [Omitted]

ACKNOWLEDGMENTS

I would like to express my gratitude to the many persons who have provided inspiration, patience, ideas, criticism, insight, and all manner of assistance for this project. In particular, I would like to thank:

• My wife, Sonya, for her loving patience through the twists and turns of the creative process

• My mother for long engaging walks with me when I was a child in the Woods of Queens (yes, there were

forests in Queens, New York, when I was growing up) and for her enthusiastic interest in and early

support for my not‐always‐fully‐baked ideas

• My Viking editors, Barbara Grossman and Dawn Drzal, for their insightful guidance and editorial

expertise and the dedicated team at Viking Penguin, including Susan Petersen, publisher; Ivan Held and

Paul Slovak, marketing executives; John Jusino, copy editor; Betty Lew, designer; Jariya Wanapun,

editorial assistant; and Laura Ogar, indexer

• Jerry Bauer for his patient photography

• David High for actually devising a spiritual machine for the cover

• My literary agent, Loretta Barrett, for helping to shape this project

• My wonderfully capable researchers, Wendy Dennis and Nancy Mulford, for their dedicated and

resourceful efforts, and Tom Garfield for his valuable assistance

• Rose Russo and Robert Brun for turning illustration ideas into beautiful visual presentations

• Aaron Kleiner for his encouragement and support

• George Gilder for his stimulating thoughts and insights

• Harry George, Don Gonson, Larry Janowitch, Hannah Kurzweil, Rob Pressman, and Mickey Singer for

engaging and helpful discussions on these topics

• My readers: Peter Arnold, Melanie Baker‐Futorian, Loretta Barrett, Stephen Baum, Bryan Bergeron, Mike

Brown, Cheryl Cordima, Avi Coren, Wendy Dennis, Mark Dionne, Dawn Drzal, Nicholas Fabijanic, Gil

Fischman, Ozzie Frankell, Vicky Frankell, Bob Frankston, Francis Ganong, Tom Garfield, Harry George,

Audra Gerhardt, George Gilder, Don Gonson, Martin Greenberger, Barbara Grossman, Larry Janowitch,

Aaron Kleiner, Jerry Kleiner, Allen Kurzweil, Amy Kurzweil, Arielle Kurzweil, Edith Kurzweil, Ethan

Kurzweil, Hannah Kurzweil, Lenny Kurzweil, Missy Kurzweil, Nancy Kurzweil, Peter Kurzweil, Rachel

Kurzweil, Sonya Kurzweil, Jo Lernout, Jon Lieff, Elliot Lobel, Cyrus Mehta, Nancy Mulford, Nicholas

Mullendore, Rob Pressman, Vlad Sejnoha, Mickey Singer, Mike Sokol, Kim Storey, and Barbara Tyrell for

their compliments and criticisms (the latter being the most helpful) and many invaluable suggestions

• Finally, all the scientists, engineers, entrepreneurs, and artists who are busy creating the age of spiritual

machines.

PROLOGUE:

AN INEXORABLE EMERGENCE

The gambler had not expected to be here. But on reflection, he thought he had shown some kindness in his time. And

this place was even more beautiful and satisfying than he had imagined. Everywhere there were magnificent crystal

chandeliers, the finest handmade carpets, the most sumptuous foods, and, yes, the most beautiful women, who seemed intrigued with their new heaven mate. He tried his hand at roulette, and amazingly his number came up time

after time. He tried the gaming tables and his luck was nothing short of remarkable: He won game after game. Indeed

his winnings were causing quite a stir, attracting much excitement from the attentive staff, and from the beautiful women.

This continued day after day, week after week, with the gambler winning every game, accumulating bigger and

bigger earnings. Everything was going his way. He just kept on winning. And week after week, month after month,

the gambler's streak of success remained unbreakable.

After a while, this started to get tedious. The gambler was getting restless; the winning was starting to lose its meaning. Yet nothing changed. He just kept on winning every game, until one day, the now anguished gambler turned to the angel who seemed to be in charge and said that he couldn't take it anymore. Heaven was not for him after all. He had figured he was destined for the "other place" nonetheless, and indeed that is where he wanted to be.

"But this is the other place," came the reply.

That is my recollection of an episode of The Twilight Zone that I saw as a young child. I don't recall the title, but I would call it "Be Careful What You Wish For." [1] As this engaging series was wont to do, it illustrated one of the paradoxes of human nature: We like to solve problems, but we don't want them all solved, not too quickly, anyway.

We are more attached to the problems than to the solutions.

Take death, for example. A great deal of our effort goes into avoiding it. We make extraordinary efforts to delay it, and indeed often consider its intrusion a tragic event. Yet we would find it hard to live without it. Death gives meaning to our lives. It gives importance and value to time. Time would become meaningless if there were too much

of it. If death were indefinitely put off, the human psyche would end up, well, like the gambler in The Twilight Zone episode.

We do not yet have this predicament. We have no shortage today of either death or human problems. Few observers feel that the twentieth century has left us with too much of a good thing. There is growing prosperity, fueled not incidentally by information technology, but the human species is still challenged by issues and difficulties not altogether different than those with which it has struggled from the beginning of its recorded history.

The twenty‐first century will be different. The human species, along with the computational technology it created,

will be able to solve age‐old problems of need, if not desire, and will be in a position to change the nature of mortality in a post‐biological future. Do we have the psychological capacity for all the good things that await us? Probably not.

That, however, might change as well.

Before the next century is over, human beings will no longer be the most intelligent or capable type of entity on

the planet. Actually, let me take that back. The truth of that last statement depends on how we define human. And

here we see one profound difference between these two centuries: The primary political and philosophical issue of the next century will be the definition of who we are. [2]

But I am getting ahead of myself. This last century has seen enormous technological change and the social upheavals that go along with it, which few pundits circa 1899 foresaw. The pace of change is accelerating and has been since the inception of invention (as I will discuss in the first chapter, this acceleration is an inherent feature of technology). The result will be far greater transformations in the first two decades of the twenty‐first century than we saw in the entire twentieth century. However, to appreciate the inexorable logic of where the twenty‐first century will bring us, we have to go back and start with the present.

TRANSITION TO THE TWENTY‐FIRST CENTURY

Computers today exceed human intelligence in a broad variety of intelligent yet narrow domains such as playing

chess, diagnosing certain medical conditions, buying and selling stocks, and guiding cruise missiles. Yet human intelligence overall remains far more supple and flexible. Computers are still unable to describe the objects on a crowded kitchen table, write a summary of a movie, tie a pair of shoelaces, tell the difference between a dog and a cat (although this feat, I believe, is becoming feasible today with contemporary neural nets—computer simulations of human neurons), [3] recognize humor, or perform other subtle tasks in which their human creators excel.

One reason for this disparity in capabilities is that our most advanced computers are still simpler than the human brain, currently about a million times simpler (give or take one or two orders of magnitude depending on the assumptions used). But this disparity will not remain the case as we go through the early part of the next century.

Computers doubled in speed every three years at the beginning of the twentieth century, every two years in the 1950s and 1960s, and are now doubling in speed every twelve months. This trend will continue, with computers achieving

the memory capacity and computing speed of the human brain by around the year 2020.
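
As a rough check on the arithmetic behind this estimate, the sketch below works out how many doublings are needed to close a given capacity gap and when that would happen at one doubling every twelve months. The starting year of 1999, the function names, and the range of gaps tried are illustrative assumptions rather than figures taken from the text beyond the "million times" and "one or two orders of magnitude" quoted above.

```python
import math


def doublings_needed(gap: float) -> float:
    """Return how many doublings close a multiplicative gap (log base 2)."""
    return math.log2(gap)


def parity_year(start_year: float, gap: float, months_per_doubling: float) -> float:
    """Return the year in which the gap closes at a fixed doubling period."""
    years = doublings_needed(gap) * months_per_doubling / 12.0
    return start_year + years


if __name__ == "__main__":
    START_YEAR = 1999          # assumption: "today" in the text
    MONTHS_PER_DOUBLING = 12   # the current doubling period quoted in the text

    # A million-fold gap, plus two orders of magnitude either way.
    for gap in (1e4, 1e6, 1e8):
        year = parity_year(START_YEAR, gap, MONTHS_PER_DOUBLING)
        print(f"gap {gap:.0e}: {doublings_needed(gap):.1f} doublings, parity around {year:.0f}")
```

Under these assumptions a million‐fold gap takes about twenty doublings, which is consistent with a date near 2020; the wider range shows how sensitive that date is to the assumed size of the gap.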

Achieving the basic complexity and capacity of the human brain will not automatically result in computers matching the flexibility of human intelligence. The organization and content of these resources—the software of intelligence—is equally important. One approach to emulating the brain's software is through reverse engineering—scanning a human brain (which will be achievable early in the next century) [4] and essentially copying its neural circuitry in a neural computer (a computer designed to simulate a massive number of human neurons) of sufficient capacity.

There is a plethora of credible scenarios for achieving human‐level intelligence in a machine. We will be able to evolve and train a system combining massively parallel neural nets with other paradigms to understand language and model knowledge, including the ability to read and understand written documents. Although the ability of today's computers to extract and learn knowledge from natural‐language documents is quite limited, their abilities in this domain are improving rapidly. Computers will be able to read on their own, understanding and modeling what they have read, by the second decade of the twenty‐first century. We can then have our computers read all of the world's literature: books, magazines, scientific journals, and other available material. Ultimately, the machines will gather knowledge on their own by venturing into the physical world, drawing from the full spectrum of media and information services, and sharing knowledge with each other (which machines can do far more easily than their human creators).

Once a computer achieves a human level of intelligence, it will necessarily roar past it. Since their inception, computers have significantly exceeded human mental dexterity in their ability to remember and process information.

A computer can remember billions or even trillions of facts perfectly while we are hard pressed to remember a handful of phone numbers. A computer can quickly search a database with billions of records in fractions of a second.

Computers can readily share their knowledge bases. The combination of human‐level intelligence in a machine with a computer's inherent superiority in the speed, accuracy, and sharing ability of its memory will be formidable.

Mammalian neurons are marvelous creations, but we wouldn't build them the same way. Much of their complexity is devoted to supporting their own life processes, not to their information‐handling abilities. Furthermore, neurons are extremely slow; electronic circuits are at least a million times faster. Once a computer achieves a human level of ability in understanding abstract concepts, recognizing patterns, and other attributes of human intelligence, it will be able to apply this ability to a knowledge base of all human‐acquired and machine‐acquired knowledge.

A common reaction to the proposition that computers will seriously compete with human intelligence is to dismiss this specter based primarily on an examination of contemporary capability. After all, when I interact with my personal computer, its intelligence seems limited and brittle, if it appears intelligent at all. It is hard to imagine one's personal computer having a sense of humor, holding an opinion, or displaying any of the other endearing qualities of human thought.

But the state of the art in computer technology is anything but static. Computer capabilities are emerging today

that were considered impossible one or two decades ago. Examples include the ability to transcribe accurately normal continuous human speech, to understand and respond intelligently to natural language, to recognize patterns in medical procedures such as electrocardiograms and blood tests with an accuracy rivaling that of human physicians,

and, of course, to play chess at a world‐championship level. In the next decade, we will see translating telephones that provide real‐time speech translation from one human language to another, intelligent computerized personal assistants that can converse and rapidly search and understand the world's knowledge bases, and a profusion of other machines with increasingly broad and flexible intelligence.

In the second decade of the next century, it will become increasingly difficult to draw any clear distinction between the capabilities of human and machine intelligence. The advantages of computer intelligence in terms of speed, accuracy, and capacity will be clear. The advantages of human intelligence, on the other hand, will become increasingly difficult to distinguish.

The skills of computer software are already better than many people realize. It is frequently my experience that when I demonstrate recent advances in, say, speech or character recognition, observers are surprised at the state of the art. For example, a typical computer user's last experience with speech‐recognition technology may have been a low‐end freely bundled piece of software from several years ago that recognized a limited vocabulary, required pauses between words, and did an incorrect job at that. These users are then surprised to see contemporary systems that can recognize fully continuous speech on a 60,000‐word vocabulary, with accuracy levels comparable to a human typist.

Also keep in mind that the progression of computer intelligence will sneak up on us. As just one example, consider Gary Kasparov's confidence in 1990 that a computer would never come close to defeating him. After all, he

had played the best computers, and their chess‐playing ability—compared to his—was pathetic. But computer chess

playing made steady progress, gaining forty‐five rating points each year. In 1997, a computer sailed past Kasparov, at least in chess. There has been a great deal of commentary that other human endeavors are far more difficult to emulate than chess playing. This is true. In many areas—the ability to write a book on computers, for example—

computers are still pathetic. But as computers continue to gain in capacity at an exponential rate we will have the same experience in these other areas that Kasparov had in chess. Over the next several decades, machine competence

will rival—and ultimately surpass—any particular human skill one cares to cite, including our marvelous ability to place our ideas in a broad diversity of contexts.

Evolution has been seen as a billion‐year drama that led inexorably to its grandest creation: human intelligence.

The emergence in the early twenty‐first century of a new form of intelligence on Earth that can compete with, and ultimately significantly exceed, human intelligence will be a development of greater import than any of the events that have shaped human history. It will be no less important than the creation of the intelligence that created it, and will have profound implications for all aspects of human endeavor, including the nature of work, human learning, government, warfare, the arts, and our concept of ourselves.

This specter is not yet here. But with the emergence of computers that truly rival and exceed the human brain in

complexity will come a corresponding ability of machines to understand and respond to abstractions and subtleties.

Human beings appear to be complex in part because of our competing internal goals. Values and emotions represent

goals that often conflict with each other, and are an unavoidable by‐product of the levels of abstraction that we deal with as human beings. As computers achieve a comparable—and greater—level of complexity, and as they are increasingly derived at least in part from models of human intelligence, they, too, will necessarily utilize goals with implicit values and emotions, although not necessarily the same values and emotions that humans exhibit.

A variety of philosophical issues will emerge. Are computers thinking, or are they just calculating? Conversely, are human beings thinking, or are they just calculating? The human brain presumably follows the laws of physics, so

it must be a machine, albeit a very complex one. Is there an inherent difference between human thinking and machine

thinking? To pose the question another way, once computers are as complex as the human brain, and can match the

human brain in subtlety and complexity of thought, are we to consider them conscious? This is a difficult question even to pose, and some philosophers believe it is not a meaningful question; others believe it is the only meaningful question in philosophy. This question actually goes back to Plato's time, but with the emergence of machines that genuinely appear to possess volition and emotion, the issue will become increasingly compelling.

For example, if a person scans his brain through a noninvasive scanning technology of the twenty‐first century (such as an advanced magnetic resonance imaging), and downloads his mind to his personal computer, is the "person" who emerges in the machine the same consciousness as the person who was scanned?

That "person" may convincingly implore you that "he" grew up in Brooklyn, went to college in Massachusetts, walked into a scanner here, and woke up in the machine there. The original person who was scanned, on the other

hand, will acknowledge that the person in the machine does indeed appear to share his history, knowledge, memory,

and personality, but is otherwise an impostor, a different person.

Even if we limit our discussion to computers that are not directly derived from a particular human brain, they will increasingly appear to have their own personalities, evidencing reactions that we can only label as emotions and articulating their own goals and purposes. They will appear to have their own free will. They will claim to have spiritual experiences. And people—those still using carbon-based neurons or otherwise—will believe them.

One often reads predictions of the next several decades discussing a variety of demographic, economic, and political trends that largely ignore the revolutionary impact of machines with their own opinions and agendas. Yet we need to reflect on the implications of the gradual, yet inevitable, emergence of true competition to the full range of human thought in order to comprehend the world that lies ahead.

PART ONE

PROBING THE PAST

CHAPTER ONE

THE LAW OF TIME AND CHAOS

A (VERY BRIEF) HISTORY OF THE UNIVERSE: TIME SLOWING DOWN

The universe is made of stories, not of atoms.

—Muriel Rukeyser

Is the universe a great mechanism, a great computation, a great symmetry, a great accident or a great thought?

—John D. Barrow

As we start at the beginning, we will notice an unusual attribute of the nature of time, one that is critical to our passage to the twenty‐first century. Our story begins perhaps 15 billion years ago. No conscious life existed to appreciate the birth of our Universe at the time, but we appreciate it now, so retroactively it did happen. (In retrospect—from one perspective of quantum mechanics—we could say that any Universe that fails to evolve conscious life to apprehend its existence never existed in the first place.)

It was not until 10^-43 seconds (a tenth of a millionth of a trillionth of a trillionth of a trillionth of a second) after the birth of the Universe [1] that the situation had cooled off sufficiently (to 100 million trillion trillion degrees) that a distinct force—gravity—evolved.

Not much happened for another 10^-34 seconds (this is also a very tiny fraction of a second, but it is a billion times longer than 10^-43 seconds), at which point an even cooler Universe (now only a billion billion billion degrees) allowed the emergence of matter in the form of electrons and quarks. To keep things balanced, antimatter appeared as well. It was an eventful time, as new forces evolved at a rapid rate. We were now up to three: gravity, the strong force, [2] and the electroweak force. [3]

After another 10^-10 seconds (a tenth of a billionth of a second), the electroweak force split into the electromagnetic and weak forces [4] we know so well today.

Things got complicated after another 10^-5 seconds (ten millionths of a second). With the temperature now down to a relatively balmy trillion degrees, the quarks came together to form protons and neutrons. The antiquarks did the same, forming antiprotons.

Somehow, the matter particles achieved a slight edge. How this happened is not entirely clear. Up until then, everything had seemed so, well, even. But had everything stayed evenly balanced, it would have been a rather boring Universe. For one thing, life never would have evolved, and thus we could conclude that the Universe would never have existed in the first place.

For every 10 billion antiprotons, the Universe contained 10 billion and 1 protons. The protons and antiprotons collided, causing the emergence of another important phenomenon: light (photons). Thus, almost all of the antimatter was destroyed, leaving matter as dominant. (This shows you the danger of allowing a competitor to achieve even a slight advantage.)

Of course, had antimatter won, its descendants would have called it matter and would have called matter antimatter, so we would be back where we started (perhaps that is what happened).

After another second (a second is a very long time compared to some of the earlier chapters in the Universeʹs history, so notice how the time frames are growing exponentially larger), the electrons and antielectrons (called positrons) followed the lead of the protons and antiprotons and similarly annihilated each other, leaving mostly the electrons.

After another minute, the neutrons and protons began coalescing into heavier nuclei, such as helium, lithium, and heavy forms of hydrogen. The temperature was now only a billion degrees.

About 300,000 years later (things are slowing down now rather quickly), with the average temperature now only 3,000 degrees, the first atoms were created as the nuclei took control of nearby electrons.

After a billion years, these atoms formed large clouds that gradually swirled into galaxies.

After another two billion years, the matter within the galaxies coalesced further into distinct stars, many with their own solar systems.

Three billion years later, circling an unexceptional star on the arm of a common galaxy, an unremarkable planet we call the Earth was born.

Now before we go any further, let's notice a striking feature of the passage of time. Events moved quickly at the beginning of the Universe's history. We had three paradigm shifts in just the first billionth of a second. Later on, events of cosmological significance took billions of years. The nature of time is that it inherently moves in an exponential fashion, either geometrically gaining in speed or, as in the history of our Universe, geometrically slowing down. Time only seems to be linear during those eons in which not much happens. Thus most of the time, the linear passage of time is a reasonable approximation of its passage. But that's not the inherent nature of time.
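One rough way to see this geometric slowing is to place the intervals quoted above on a logarithmic scale. The sketch below uses only the figures given in this chapter; the seconds-per-year constant, the event labels, and the helper name `orders_of_magnitude` are assumptions introduced for illustration.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600  # assumption: one Julian year

# (event label, interval in seconds that preceded it), figures as quoted in the text
INTERVALS = [
    ("gravity emerges", 1e-43),
    ("electrons and quarks", 1e-34),
    ("electroweak force splits", 1e-10),
    ("protons and neutrons", 1e-5),
    ("electrons outlast positrons", 1.0),
    ("heavier nuclei", 60.0),
    ("first atoms", 300_000 * SECONDS_PER_YEAR),
    ("galaxies", 1e9 * SECONDS_PER_YEAR),
    ("stars", 2e9 * SECONDS_PER_YEAR),
    ("Earth", 3e9 * SECONDS_PER_YEAR),
]

def orders_of_magnitude(intervals):
    """Return (label, log10 of the interval in seconds) for each event."""
    return [(name, math.log10(seconds)) for name, seconds in intervals]

for name, exponent in orders_of_magnitude(INTERVALS):
    print(f"{name:28s} about 10^{exponent:.1f} s")
```

On this scale each step is longer than the one before it, which is the slowing pattern the text describes.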

Why is this significant? Itʹs not when youʹre stuck in the eons in which not much happens. But it is of great significance when you find yourself in the ʺknee of the curve,ʺ those periods in which the exponential nature of the curve of time explodes either inwardly or outwardly. Itʹs like falling into a black hole (in that case, time accelerates exponentially faster as one falls in).

The Speed of Time

But wait a second, how can we say that time is changing its ʺspeedʺ? We can talk about the rate of a process, in terms of its progress per second, but can we say that time is changing its rate? Can time start moving at, say, two seconds per second?

Einstein said exactly this—time is relative to the entities experiencing it. [5] One manʹs second can be another womanʹs forty years. Einstein gives the example of a man who travels at very close to the speed of light to a star—say, twenty light‐years away. From our Earth‐bound perspective, the trip takes slightly more than twenty years in each direction. When the man gets back, his wife has aged forty years. For him, however, the trip was rather brief. If he travels at close enough to the speed of light, it may have only taken a second or less (from a practical perspective we would have to consider some limitations, such as the time to accelerate and decelerate without crushing his body).

Whose time frame is the correct one?

Einstein says they are both correct, and exist only relative to each other.
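The traveler example can be checked with the standard time-dilation formula from special relativity. This is a minimal sketch that assumes a constant cruising speed and ignores acceleration and deceleration, as the text notes a practical trip could not; the function name `trip_years` is hypothetical.

```python
import math
from typing import Tuple

def trip_years(distance_light_years: float, beta: float) -> Tuple[float, float]:
    """Return (earth_years, traveler_years) for a one-way trip.

    beta is the speed as a fraction of the speed of light (0 < beta < 1).
    Assumes constant speed for the whole trip.
    """
    if not 0.0 < beta < 1.0:
        raise ValueError("beta must be strictly between 0 and 1")
    earth_years = distance_light_years / beta
    # Proper time on board is shorter by the factor sqrt(1 - beta^2).
    traveler_years = earth_years * math.sqrt(1.0 - beta * beta)
    return earth_years, traveler_years

# One leg of the twenty-light-year journey at several speeds.
for beta in (0.8, 0.99, 0.999999):
    earth, traveler = trip_years(20.0, beta)
    print(f"beta={beta}: Earth {earth:.2f} years, traveler {traveler:.4f} years")
```

Doubling both figures gives the round trip. As beta approaches 1, the Earth-frame time approaches forty years for the round trip while the traveler's time shrinks toward zero.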

Certain species of birds have a life span of only several years. If you observe their rapid movements, it appears that they are experiencing the passage of time on a different scale. We experience this in our own lives. A young childʹs rate of change and experience of time is different from that of an adult. Of particular note, we will see that the acceleration in the passage of time for evolution is moving in a different direction than that for the Universe from which it emerges.

It is in the nature of exponential growth that events develop extremely slowly for extremely long periods of time, but as one glides through the knee of the curve, events erupt at an increasingly furious pace. And that is what we will experience as we enter the twenty-first century.

EVOLUTION: TIME SPEEDING UP

In the beginning was the word. . . . And the word became flesh.

—John 1:1,14

A great deal of the universe does not need any explanation. Elephants, for instance. Once molecules have learnt to compete and create other molecules in their own image, elephants, and things resembling elephants, will in due course be found roaming through the countryside.

—Peter Atkins

The further backward you look, the further forward you can see.

—Winston Churchill

We'll come back to the knee of the curve, but let's delve further into the exponential nature of time. In the nineteenth century, a set of unifying principles called the laws of thermodynamics [6] was postulated. As the name implies, they deal with the dynamic nature of heat and were the first major refinement of the laws of classical mechanics perfected by Isaac Newton a century earlier. Whereas Newton had described a world of clockwork perfection in which particles and objects of all sizes followed highly disciplined, predictable patterns, the laws of thermodynamics describe a world of chaos. Indeed, that is what heat is. Heat is the chaotic—unpredictable—movement of the particles that make up the world. A corollary of the second law of thermodynamics is that in a closed system (interacting entities and forces not subject to outside influence; for example, the Universe), disorder (called "entropy") increases. Thus, left to its own devices, a system such as the world we live in becomes increasingly chaotic. Many people find this describes their lives rather well. But in the nineteenth century, the laws of thermodynamics were considered a disturbing discovery. At the beginning of that century, it appeared that the basic principles governing the world were both understood and orderly. There were a few details left to be filled in, but the basic picture was under control.

Thermodynamics was the first contradiction to this complacent picture. It would not be the last.

The second law of thermodynamics, sometimes called the Law of Increasing Entropy, would seem to imply that the natural emergence of intelligence is impossible. Intelligent behavior is the opposite of random behavior, and any system capable of intelligent responses to its environment needs to be highly ordered. The chemistry of life, particularly of intelligent life, is composed of exceptionally intricate designs. Out of the increasingly chaotic swirl of particles and energy in the world, extraordinary designs somehow emerged. How do we reconcile the emergence of intelligent life with the Law of Increasing Entropy?

There are two answers here. First, while the Law of Increasing Entropy would appear to contradict the thrust of evolution, which is toward increasingly elaborate order, the two phenomena are not inherently contradictory. The order of life takes place amid great chaos, and the existence of life-forms does not appreciably affect the measure of entropy in the larger system in which life has evolved. An organism is not a closed system. It is part of a larger system we call the environment, which remains high in entropy. In other words, the order represented by the existence of life-forms is insignificant in terms of measuring overall entropy.

Thus, while chaos increases in the Universe, it is possible for evolutionary processes that create increasingly intricate, ordered patterns to exist simultaneously. [7] Evolution is a process, but it is not a closed system. It is subject to outside influence, and indeed draws upon the chaos in which it is embedded. So the Law of Increasing Entropy does not rule out the emergence of life and intelligence.

For the second answer, we need to take a closer look at evolution, as it was the original creator of intelligence.

The Exponentially Quickening Pace of Evolution

As you will recall, after billions of years, the unremarkable planet called Earth was formed. Churned by the energy of the sun, the elements formed more and more complex molecules. From physics, chemistry was born.

Two billion years later, life began. That is to say, patterns of matter and energy that could perpetuate themselves and survive perpetuated themselves and survived. That this apparent tautology went unnoticed until a couple of centuries ago is itself remarkable.

Over time, the patterns became more complicated than mere chains of molecules. Structures of molecules performing distinct functions organized themselves into little societies of molecules. From chemistry, biology was born.

Thus, about 3.4 billion years ago, the first earthly organisms emerged: anaerobic (not requiring oxygen) prokaryotes (single‐celled creatures) with a rudimentary method for perpetuating their own designs. Early innovations that followed included a simple genetic system, the ability to swim, and photosynthesis, which set the stage for more advanced, oxygen‐consuming organisms. The most important development for the next couple of billion years was the DNA‐based genetics that would henceforth guide and record evolutionary development.

A key requirement for an evolutionary process is a "written" record of achievement, for otherwise the process would be doomed to repeat finding solutions to problems already solved. For the earliest organisms, the record was written (embodied) in their bodies, coded directly into the chemistry of their primitive cellular structures. With the invention of DNA-based genetics, evolution had designed a digital computer to record its handiwork. This design permitted more complex experiments. The aggregations of molecules called cells organized themselves into societies of cells with the appearance of the first multicellular plants and animals about 700 million years ago. For the next 130 million years, the basic body plans of modern animals were designed, including a spinal cord-based skeleton that provided early fish with an efficient swimming style.

So while evolution took billions of years to design the first primitive cells, salient events then began occurring in hundreds of millions of years, a distinct quickening of the pace. [8] When some calamity finished off the dinosaurs 65 million years ago, mammals inherited the Earth (although the insects might disagree). [9] With the emergence of the primates, progress was then measured in mere tens of millions of years. [10] Humanoids emerged 15 million years ago, distinguished by walking on their hind legs, and now we're down to millions of years. [11]

With larger brains, particularly in the area of the highly convoluted cortex responsible for rational thought, our own species, Homo sapiens, emerged perhaps 500,000 years ago. Homo sapiens are not very different from other advanced primates in terms of their genetic heritage. Their DNA is 98.6 percent the same as the lowland gorilla, and 97.8 percent the same as the orangutans. [12] The story of evolution since that time now focuses in on a human-sponsored variant of evolution: technology.

TECHNOLOGY: EVOLUTION BY OTHER MEANS

When a scientist states that something is possible, he is almost certainly right. When he states that something is impossible, he is very probably wrong.

The only way of discovering the limits of the possible is to venture a little way past them into the impossible.

Any sufficiently advanced technology is indistinguishable from magic.

—Arthur C. Clarkeʹs three laws of technology

A machine is as distinctively and brilliantly and expressively human as a violin sonata or a theorem in Euclid.

—Gregory Vlastos

Technology picks right up with the exponentially quickening pace of evolution. Although not the only tool-using animal, Homo sapiens are distinguished by their creation of technology. [13] Technology goes beyond the mere fashioning and use of tools. It involves a record of tool making and a progression in the sophistication of tools. It requires invention and is itself a continuation of evolution by other means. The "genetic code" of the evolutionary process of technology is the record maintained by the tool-making species. Just as the genetic code of the early life-forms was simply the chemical composition of the organisms themselves, the written record of early tools consisted of the tools themselves. Later on, the "genes" of technological evolution evolved into records using written language and are now often stored in computer databases. Ultimately, the technology itself will create new technology. But we are getting ahead of ourselves.

Our story is now marked in tens of thousands of years. There were multiple subspecies of Homo sapiens. Homo sapiens neanderthalensis emerged about 100,000 years ago in Europe and the Middle East and then disappeared mysteriously about 35,000 to 40,000 years ago. Despite their brutish image, Neanderthals cultivated an involved culture that included elaborate funeral rituals—burying their dead with ornaments, including flowers. We're not entirely sure what happened to our Homo sapiens cousins, but they apparently got into conflict with our own immediate ancestors, Homo sapiens sapiens, who emerged about 90,000 years ago. Several species and subspecies of humanoids initiated the creation of technology. The most clever and aggressive of these subspecies was the only one to survive. This established a pattern that would repeat itself throughout human history, in that the technologically more advanced group ends up becoming dominant. This trend may not bode well as intelligent machines themselves surpass us in intelligence and technological sophistication in the twenty-first century.

Our Homo sapiens sapiens subspecies was thus left alone among humanoids about 40,000 years ago.

Our forebears had already inherited from earlier hominid species and subspecies such innovations as the recording of events on cave walls, pictorial art, music, dance, religion, advanced language, fire, and weapons. For tens of thousands of years, humans had created tools by sharpening one side of a stone. It took our species tens of thousands of years to figure out that by sharpening both sides, the resultant sharp edge provided a far more useful tool. One significant point, however, is that these innovations did occur, and they endured. No other tool-using animal on Earth has demonstrated the ability to create and retain innovations in their use of tools.

The other significant point is that technology, like the evolution of life-forms that spawned it, is inherently an accelerating process. The foundations of technology—such as creating a sharp edge from a stone—took eons to perfect, although for human-created technology, eons means thousands of years rather than the billions of years that the evolution of life-forms required to get started.

Like the evolution of life‐forms, the pace of technology has greatly accelerated over time. [14] The progress of technology in the nineteenth century, for example, greatly exceeded that of earlier centuries, with the building of canals and great ships, the advent of paved roads, the spread of the railroad, the development of the telegraph, and the invention of photography, the bicycle, sewing machine, typewriter, telephone, phonograph, motion picture, automobile, and of course Thomas Edisonʹs light bulb. The continued exponential growth of technology in the first two decades of the twentieth century matched that of the entire nineteenth century. Today, we have major transformations in just a few yearsʹ time. As one of many examples, the latest revolution in communications—the World Wide Web—didnʹt exist just a few years ago.

WHAT IS TECHNOLOGY?

As technology is the continuation of evolution by other means, it shares the phenomenon of an exponentially quickening pace. The word is derived from the Greek tekhnē, which means "craft" or "art," and logia, which means "the study of." Thus one interpretation of technology is the study of crafting, in which crafting refers to the shaping of resources for a practical purpose. I use the term resources rather than materials because technology extends to the shaping of nonmaterial resources such as information.

Technology is often defined as the creation of tools to gain control over the environment. However, this definition is not entirely sufficient. Humans are not alone in their use or even creation of tools. Orangutans in Sumatra's Suaq Balimbing swamp make tools out of long sticks to break open termite nests. Crows fashion tools from sticks and leaves. The leaf-cutter ant mixes dry leaves with its saliva to create a paste. Crocodiles use tree roots to anchor dead prey. [15]

What is uniquely human is the application of knowledge—recorded knowledge—to the fashioning of tools. The knowledge base represents the genetic code for the evolving technology. And as technology has evolved, the means for recording this knowledge base has also evolved, from the oral traditions of antiquity to the written design logs of nineteenth-century craftsmen to the computer-assisted design databases of the 1990s.

Technology also implies a transcendence of the materials used to comprise it. When the elements of an invention are assembled in just the right way, they produce an enchanting effect that goes beyond the mere parts. When Alexander Graham Bell accidentally wire-connected two moving drums and solenoids (metal cores wrapped in wire) in 1875, the result transcended the materials he was working with. For the first time, a human voice was transported, magically it seemed, to a remote location. Most assemblages are just that: random assemblies. But when materials—and in the case of modern technology, information—are assembled in just the right way, transcendence occurs. The assembled object becomes far greater than the sum of its parts.

The same phenomenon of transcendence occurs in art, which may properly be regarded as another form of human technology. When wood, varnishes, and strings are assembled in just the right way, the result is wondrous: a violin, a piano. When such a device is manipulated in just the right way, there is magic of another sort: music. Music goes beyond mere sound. It evokes a response—cognitive, emotional, perhaps spiritual—in the listener, another form of transcendence. All of the arts share the same goal: communicating from artist to audience. The communication is not of unadorned data, but of the more important items in the phenomenological garden: feelings, ideas, experiences, longings. The Greek meaning of tekhnē logia includes art as a key manifestation of technology.

Language is another form of human-created technology. One of the primary applications of technology is communication, and language provides the foundation for Homo sapiens communication. Communication is a critical survival skill. It enabled human families and tribes to develop cooperative strategies to overcome obstacles and adversaries. Other animals communicate. Monkeys and apes use elaborate gestures and grunts to communicate a variety of messages. Bees perform intricate dances in a figure-eight pattern to communicate where caches of nectar may be found. Female tree frogs in Malaysia do tap dances to signal their availability. Crabs wave their claws in one way to warn adversaries but use a different rhythm for courtship. [16] But these methods do not appear to evolve, other than through the usual DNA-based evolution. These species lack a way to record their means of communication, so the methods remain static from one generation to the next. In contrast, human language does evolve, as do all forms of technology. Along with the evolving forms of language itself, technology has provided ever-improving means for recording and distributing human language.

Homo sapiens are unique in their use and fostering of all forms of what I regard as technology: art, language, and machines, all representing evolution by other means. In the 1960s through 1990s, several well-publicized primates were said to have mastered at least childlike language skills. Chimpanzees Lana and Kanzi pressed sequences of buttons with symbols on them. Gorillas Washoe and Koko were said to be using American Sign Language. Many linguists are skeptical, noting that many primate "sentences" were jumbles, such as "Nim eat, Nim eat, drink eat me Nim, me gum me gum, tickle me, Nim play, you me banana me banana you." Even if we view this phenomenon more generously, it would be the exception that proves the rule. These primates did not evolve the languages they are credited with using, they do not appear to develop these skills spontaneously, and their use of these skills is very limited. [17] They are at best participating peripherally in what is still a uniquely human invention: communicating using the recursive (self-referencing), symbolic, evolving means called language.

The Inevitability of Technology

Once life takes hold on a planet, we can consider the emergence of technology as inevitable. The ability to expand the reach of oneʹs physical capabilities, not to mention mental facilities, through technology is clearly useful for survival.

Technology has enabled our subspecies to dominate its ecological niche. Technology requires two attributes of its creator: intelligence and the physical ability to manipulate the environment. Weʹll talk more in chapter 4, ʺA New Form of Intelligence on Earth,ʺ about the nature of intelligence, but it clearly represents an ability to use limited resources optimally, including time. This ability is inherently useful for survival, so it is favored. The ability to manipulate the environment is also useful; otherwise an organism is at the mercy of its environment for safety, food, and the satisfaction of its other needs. Sooner or later, an organism is bound to emerge with both attributes.

THE INEVITABILITY OF COMPUTATION

It is not a bad definition of man to describe him as a tool‐making animal. His earliest contrivances to support uncivilized life were tools of the simplest and rudest construction. His latest achievements in the substitution of machinery, not merely for the skill of the human hand, but for the relief of the human intellect, are founded on the use of tools of a still higher order.

—Charles Babbage

All of the fundamental processes we have examined—the development of the Universe, the evolution of life‐forms, the subsequent evolution of technology—have all progressed in an exponential fashion, some slowing down, some speeding up. What is the common thread here? Why did cosmology exponentially slow down while evolution accelerated? The answers are surprising, and fundamental to understanding the twenty‐first century.

But before I attempt to answer these questions, let's examine one other very relevant example of acceleration: the exponential growth of computation.

Early in the evolution of life-forms, specialized organs developed the ability to maintain internal states and respond differentially to external stimuli. The trend ever since has been toward more complex and capable nervous systems with the ability to store extensive memories; recognize patterns in visual, auditory, and tactile stimuli; and engage in increasingly sophisticated levels of reasoning. The ability to remember and to solve problems—computation—has constituted the cutting edge in the evolution of multicellular organisms.

The same value of computation holds true in the evolution of human-created technology. Products are more useful if they can maintain internal states and respond differentially to varying conditions and situations. As machines moved beyond mere implements to extend human reach and strength, they also began to accumulate the ability to remember and perform logical manipulations. The simple cams, gears, and levers of the Middle Ages were assembled into the elaborate automata of the European Renaissance. Mechanical calculators, which first emerged in the seventeenth century, became increasingly complex, culminating in the first automated U.S. census in 1890.

Computers played a crucial role in at least one theater of the Second World War, and have developed in an accelerating spiral ever since.

THE LIFE CYCLE OF A TECHNOLOGY

Technologies fight for survival, evolve, and undergo their own characteristic life cycle. We can identify seven distinct stages. During the precursor stage, the prerequisites of a technology exist, and dreamers may contemplate these elements coming together. We do not, however, regard dreaming to be the same as inventing, even if the dreams are written down. Leonardo da Vinci drew convincing pictures of airplanes and automobiles, but he is not considered to have invented either.

The next stage, one highly celebrated in our culture, is invention, a very brief stage, not dissimilar in some respects to the process of birth after an extended period of labor. Here the inventor blends curiosity, scientific skills, determination, and usually a measure of showmanship to combine methods in a new way to bring a new technology to life.

The next stage is development, during which the invention is protected and supported by doting guardians (which may include the original inventor). Often this stage is more crucial than invention and may involve additional creation that can have greater significance than the original invention. Many tinkerers had constructed finely hand-tuned horseless carriages, but it was Henry Ford's innovation of mass production that enabled the automobile to take root and flourish.

The fourth stage is maturity. Although continuing to evolve, the technology now has a life of its own and has become an independent and established part of the community. It may become so interwoven in the fabric of life that it appears to many observers that it will last forever. This creates an interesting drama when the next stage arrives, which I call the stage of the pretenders. Here an upstart threatens to eclipse the older technology. Its enthusiasts prematurely predict victory. While providing some distinct benefits, the newer technology is found on reflection to be missing some key element of functionality or quality. When it indeed fails to dislodge the established order, the technology conservatives take this as evidence that the original approach will indeed live forever.

This is usually a short-lived victory for the aging technology. Shortly thereafter, another new technology typically does succeed in pushing the original technology into the stage of obsolescence. In this part of the life cycle, the technology lives out its senior years in gradual decline, its original purpose and functionality now subsumed by a more spry competitor. This stage, which may comprise 5 to 10 percent of the life cycle, finally yields to antiquity (examples today: the horse and buggy, the harpsichord, the manual typewriter, and the electromechanical calculator).

To illustrate this, consider the phonograph record. In the mid-nineteenth century, there were several precursors, including Édouard-Léon Scott de Martinville's phonautograph, a device that recorded sound vibrations as a printed pattern. It was Thomas Edison, however, who in 1877 brought all of the elements together and invented the first device that could record and reproduce sound. Further refinements were necessary for the phonograph to become commercially viable. It became a fully mature technology in 1948 when Columbia introduced the 33 revolutions-per-minute (rpm) long-playing record (LP) and RCA Victor introduced the 45-rpm small disc. The pretender was the cassette tape, introduced in the 1960s and popularized during the 1970s. Early enthusiasts predicted that its small size and ability to be re-recorded would make the relatively bulky and scratchable record obsolete.

Despite these obvious benefits, cassettes lack random access (the ability to play selections in a desired order) and are prone to their own forms of distortion and lack of fidelity. In the late 1980s and early 1990s, the digital compact disc (CD) did deliver the mortal blow. With the CD providing both random access and a level of quality close to the limits of the human auditory system, the phonograph record entered the stage of obsolescence in the first half of the 1990s. Although still produced in small quantities, the technology that Edison gave birth to more than a century ago is now approaching antiquity.

Another example is the print book, a rather mature technology today. It is now in the stage of the

pretenders, with the software-based "virtual" book as the pretender. Lacking the resolution, contrast, lack of flicker, and other visual qualities of paper and ink, the current generation of virtual book does not have the

capability of displacing paper-based publications. Yet this victory of the paper-based book will be short-lived

as future generations of computer displays succeed in providing a fully satisfactory alternative to paper.

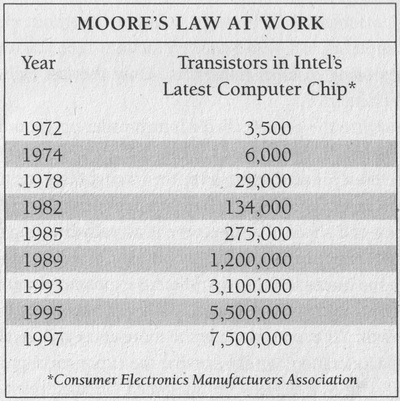

The Emergence of Mooreʹs Law

Gordon Moore, an inventor of the integrated circuit and then chairman of Intel, noted in 1965 that the surface area of a transistor (as etched on an integrated circuit) was being reduced by approximately 50 percent every twelve months.

In 1975, he was widely reported to have revised this observation to eighteen months. Moore claims that his 1975

update was to twenty‐four months, and that does appear to be a better fit to the data.

The result is that every two years, you can pack twice as many transistors on an integrated circuit. This doubles

both the number of components on a chip as well as its speed. Since the cost of an integrated circuit is fairly constant, the implication is that every two years you can get twice as much circuitry running at twice the speed for the same

price. For many applications, thatʹs an effective quadrupling of the value. The observation holds true for every type of circuit, from memory chips to computer processors.
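The arithmetic behind that "effective quadrupling" can be sketched in a few lines of Python. This is only an illustration under the simplifying assumptions stated in the text (transistor count and speed each double once per period, and chip cost stays constant); the function name `moores_law_gain` and its parameters are made up for this example.

```python
def moores_law_gain(years, doubling_period_years=2.0):
    """Return (transistor_factor, speed_factor, value_factor) after `years`.

    Assumes, as the passage does, that transistor count and speed each
    double once per period and that the price of the chip is constant.
    """
    doublings = years / doubling_period_years
    transistor_factor = 2.0 ** doublings
    speed_factor = 2.0 ** doublings
    # Twice the circuitry at twice the speed: the two factors multiply.
    value_factor = transistor_factor * speed_factor
    return transistor_factor, speed_factor, value_factor


for years in (2, 10, 20):
    count, speed, value = moores_law_gain(years)
    print(f"{years:>2} years: {count:,.0f}x transistors, "
          f"{speed:,.0f}x speed, {value:,.0f}x value")
```

For a single two-year period the function returns factors of 2, 2, and 4, which is the quadrupling described above; over longer spans the value factor compounds accordingly.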

This insightful observation has become known as Mooreʹs Law on Integrated Circuits, and the remarkable phenomenon of the law has been driving the acceleration of computing for the past forty years. But how much longer

can this go on? The chip companies have expressed confidence in another fifteen to twenty years of Mooreʹs Law by

continuing their practice of using increasingly higher resolutions of optical lithography (an electronic process similar to photographic printing) to reduce the feature size—measured today in millionths of a meter—of transistors and other key components. [18] But then—after almost sixty years—this paradigm will break down. The transistor insulators will then be just a few atoms thick, and the conventional approach of shrinking them wonʹt work.

What then?

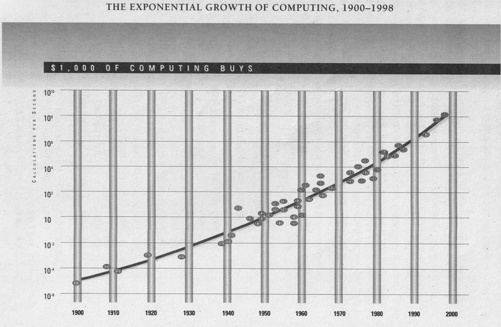

We first note that the exponential growth of computing did not start with Mooreʹs Law on Integrated Circuits. In

the accompanying figure, ʺThe Exponential Growth of Computing, 1900–1998,ʺ [19] I plotted forty‐nine notable computing machines spanning the twentieth century on an exponential chart, in which the vertical axis represents powers of ten in computer speed per unit cost (as measured in the number of ʺcalculations per secondʺ that can be purchased for $1,000). Each point on the graph represents one of the machines. The first five machines used mechanical technology, followed by three electromechanical (relay based) computers, followed by eleven vacuum-tube machines, followed by twelve machines using discrete transistors. Only the last eighteen computers used integrated circuits.

I then fit a curve to the points called a fourth‐order polynomial, which allows for up to four bends. In other words, I did not try to fit a straight line to the points, just the closest fourth‐order curve. Yet a straight line is close to what I got. A straight line on an exponential graph means exponential growth. A careful examination of the trend shows that the curve is actually bending slightly upward, indicating a small exponential growth in the rate of exponential growth. This may result from the interaction of two different exponential trends, as I will discuss in chapter 6, ʺBuilding New Brains.ʺ Or there may indeed be two levels of exponential growth. Yet even if we take the more conservative view that there is just one level of acceleration, we can see that the exponential growth of computing did not start with Mooreʹs Law on Integrated Circuits, but dates back to the advent of electrical computing at the beginning of the twentieth century.
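Why a straight line on such a chart means exponential growth, and why an upward bend means the growth rate is itself growing, can be checked numerically. The sketch below does not reproduce the fourth-order fit or use the forty-nine data points; it works on two synthetic series whose doubling times are assumptions chosen purely for illustration.

```python
import math


def yearly_log10_values(years, doubling_time_at):
    """log10 of a quantity sampled once a year.

    doubling_time_at(year) gives the doubling time (in years) in force
    during that year; a constant function means plain exponential growth.
    """
    value = 1.0
    logs = [math.log10(value)]
    for year in range(years):
        value *= 2.0 ** (1.0 / doubling_time_at(year))
        logs.append(math.log10(value))
    return logs


def slopes(logs):
    """Year-over-year steps on the log chart (the local slope of the curve)."""
    return [b - a for a, b in zip(logs, logs[1:])]


# Hypothetical case 1: doubling time fixed at 2 years -> constant slope.
steady = slopes(yearly_log10_values(10, lambda year: 2.0))

# Hypothetical case 2: doubling time shrinking by 0.2 years each year,
# starting from 3 years -> the slope rises, so the curve bends upward.
speeding_up = slopes(yearly_log10_values(10, lambda year: 3.0 - 0.2 * year))

print("steady:     ", [round(s, 3) for s in steady])
print("speeding up:", [round(s, 3) for s in speeding_up])
```

In the first series every yearly step on the log scale is the same size, which is what a straight line looks like; in the second the steps keep growing, which is the slight upward bend described above.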

Mechanical Computing Devices

1. 1900: Analytical Engine
2. 1908: Hollerith Tabulator
3. 1911: Monroe Calculator
4. 1919: IBM Tabulator
5. 1928: National Ellis 3000

Electromechanical (Relay Based) Computers

6. 1939: Zuse 2
7. 1940: Bell Calculator Model 1
8. 1941: Zuse 3

Vacuum‐Tube Computers

9. 1943: Colossus
10. 1946: ENIAC
11. 1948: IBM SSEC
12. 1949: BINAC
13. 1949: EDSAC
14. 1951: Univac 1
15. 1953: Univac 1103
16. 1953: IBM 701
17. 1954: EDVAC
18. 1955: Whirlwind
19. 1955: IBM 704

Discrete Transistor Computers

20. 1958: Datamatic 1000
21. 1958: Univac II
22. 1959: Mobidic
23. 1959: IBM 7090
24. 1960: IBM 1620
25. 1960: DEC PDP‐1
26. 1961: DEC PDP‐4
27. 1962: Univac III
28. 1964: CDC 6600
29. 1965: IBM 1130
30. 1965: DEC PDP‐8
31. 1966: IBM 360 Model 75

Integrated Circuit Computers

32. 1968: DEC PDP‐10
33. 1973: Intellec‐8
34. 1973: Data General Nova
35. 1975: Altair 8800
36. 1976: DEC PDP‐11 Model 70
37. 1977: Cray I
38. 1977: Apple II
39. 1979: DEC VAX 11 Model 780
40. 1980: Sun‐1
41. 1982: IBM PC
42. 1982: Compaq Portable
43. 1983: IBM AT‐80286
44. 1984: Apple Macintosh
45. 1986: Compaq Deskpro 386
46. 1987: Apple Mac II
47. 1993: Pentium PC
48. 1996: Pentium PC
49. 1998: Pentium II PC

In the 1980s, a number of observers, including Carnegie Mellon University professor Hans Moravec, Nippon Electric Companyʹs David Waltz, and myself, noticed that computers have been growing exponentially in power, long

before the invention of the integrated circuit in 1958 or even the transistor in 1947. [20] The speed and density of computation have been doubling every three years (at the beginning of the twentieth century) to one year (at the end of the twentieth century), regardless of the type of hardware used. Remarkably, this ʺExponential Law of Computingʺ

has held true for at least a century, from the mechanical card‐based electrical computing technology used in the 1890

U.S. census, to the relay‐based computers that cracked the Nazi Enigma code, to the vacuum‐tube‐based computers of

the 1950s, to the transistor‐based machines of the 1960s, and to all of the generations of integrated circuits of the past four decades. Computers are about one hundred million times more powerful for the same unit cost than they were a

half century ago. If the automobile industry had made as much progress in the past fifty years, a car today would cost a hundredth of a cent and go faster than the speed of light.
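As a rough consistency check on these figures, one can ask what average doubling time a hundred-million-fold gain over fifty years implies. The helper below is a hypothetical illustration, not a calculation taken from the text, and it assumes a single constant doubling time over the whole span.

```python
import math


def implied_doubling_time(growth_factor, span_years):
    """Average doubling time (in years) implied by an overall growth factor."""
    doublings = math.log2(growth_factor)
    return span_years / doublings


doublings = math.log2(1e8)
average = implied_doubling_time(1e8, 50)
print(f"doublings in 50 years: {doublings:.1f}")
print(f"average doubling time: {average:.2f} years")
```

The result comes out a little under two years, which sits inside the three-years-to-one-year range quoted above for the century as a whole.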

As with any phenomenon of exponential growth, the increases are so slow at first as to be virtually unnoticeable.

Despite many decades of progress since the first electrical calculating equipment was used in the 1890 census, it was not until the mid‐1960s that this phenomenon was even noticed (although Alan Turing had an inkling of it in 1950).

Even then, it was appreciated only by a small community of computer engineers and scientists. Today, you have only

to scan the personal computer ads—or the toy ads—in your local newspaper to see the dramatic improvements in the

price performance of computation that now arrive on a monthly basis.

So Mooreʹs Law on Integrated Circuits was not the first, but the fifth paradigm to continue the now one‐century‐long exponential growth of computing. Each new paradigm came along just when needed. This suggests that exponential growth wonʹt stop with the end of Mooreʹs Law. But the answer to our question on the continuation of

the exponential growth of computing is critical to our understanding of the twenty‐first century. So to gain a deeper understanding of the true nature of this trend, we need to go back to our earlier questions on the exponential nature of time.

THE LAW OF TIME AND CHAOS

In a process, the time interval between salient events (i.e., events that change the nature of the process, or significantly affect the future of the process) expands or contracts along with the amount of chaos.

THE LAW OF INCREASING CHAOS

As chaos exponentially increases, time exponentially slows down (i.e., the time interval between salient events grows longer as time passes).

THE LAW OF ACCELERATING RETURNS

As order exponentially increases, time exponentially speeds up (i.e., the time interval between salient events grows shorter as time passes).

THE LAW OF INCREASING CHAOS AS APPLIED TO THE UNIVERSE

The Universe started as a "singularity," a single undifferentiated point with no size and no chaos, so early epochal events were extremely rapid. The Universe grew greatly in chaos as time went on. Thus time slowed down (i.e., the time interval between salient events grew exponentially longer over time).

THE LAW OF INCREASING CHAOS AS APPLIED TO THE LIFE OF AN ORGANISM

The development of an organism from conception as a single cell through maturation is a process moving toward greater diversity and thus greater disorder. Thus the time interval between salient events grows longer over time.

THE LAW OF ACCELERATING RETURNS AS APPLIED TO AN EVOLUTIONARY PROCESS

• An evolutionary process is not a closed system; therefore, evolution draws upon the chaos in the larger system in which it takes place for its options for diversity; and

• Evolution builds on its own increasing order.

Therefore:

• In an evolutionary process, order increases exponentially.

Therefore:

• Time exponentially speeds up.

Therefore:

• The returns (i.e., the valuable products of the process) accelerate.

THE LAW OF ACCELERATING RETURNS AS APPLIED TO THE EVOLUTION OF LIFE-FORMS

The time interval between salient events (e.g., a significant new branch) grows exponentially shorter as time passes.

THE EVOLUTION OF LIFE-FORMS LEADS TO THE EVOLUTION OF TECHNOLOGY

The advance of technology is inherently an evolutionary process. Indeed, it is a continuation of the same evolutionary process that gave rise to technology-creating species. Therefore, in accordance with the Law of Accelerating Returns, the time interval between salient advances grows exponentially shorter as time passes. The "returns" (i.e., the value) of technology increase over time.

TECHNOLOGY BEGETS COMPUTATION

Computation is the essence of order in technology. In accordance with the Law of Accelerating Returns, the value—power—of computation increases exponentially over time.

MOORE'S LAW ON INTEGRATED CIRCUITS

Transistor die sizes are cut in half every twenty-four months, therefore both computing capacity (i.e., the number of transistors on a chip) and the speed of each transistor double every twenty-four months. This is the fifth paradigm since the inception of computation—after mechanical, electromechanical (i.e., relay based), vacuum tube, and discrete transistor technology—to provide accelerating returns to computation.

THE LAW OF TIME AND CHAOS

Is the flow of time something real, or might our sense of time passing be just an illusion that hides the fact that what is real is only a vast collection of moments?

—Lee Smolin

Time is natureʹs way of preventing everything from happening at once.

—Graffito

Things are more like they are now than they ever were before.

—Dwight Eisenhower

Consider these diverse exponential trends:

• The exponentially slowing pace that the Universe followed, with three epochs in the first billionth of a second, with later salient events taking billions of years.

• The exponentially slowing pace in the development of an organism. In the first month after conception, we

grow a body, a head, even a tail. We grow a brain in the first couple of months. After leaving our maternal