Only a catastrophe that wipes out the entire process.

SUCH AS AN ALL‐OUT NUCLEAR WAR?

Thatʹs one scenario, but in the next century, we will encounter a plethora of other ʺfailure modes.ʺ Weʹll talk about this in later chapters.

I CANʹT WAIT. NOW TELL ME THIS, WHAT DOES THE LAW OF ACCELERATING RETURNS HAVE TO DO

WITH THE TWENTY‐FIRST CENTURY?

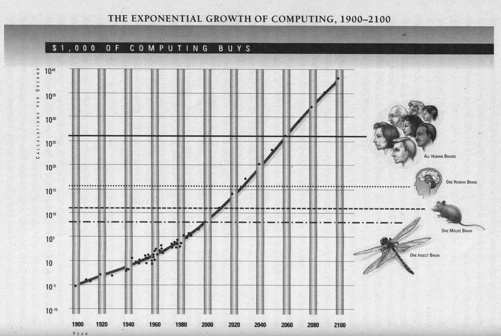

Exponential trends are immensely powerful but deceptive. They linger for eons with very little effect. But once they reach the ʺknee of the curve,ʺ they explode with unrelenting fury. With regard to computer technology and its impact on human society, that knee is approaching with the new millennium. Now I have a question for you.

SHOOT.

Just who are you anyway?

WHY, IʹM THE READER.

Of course. Well, itʹs good to have you contributing to the book while thereʹs still time to do something about it.

GLAD TO. NOW, YOU NEVER DID GIVE THE ENDING TO THE EMPEROR STORY. SO DOES THE EMPEROR

LOSE HIS EMPIRE, OR DOES THE INVENTOR LOSE HIS HEAD?

I have two endings, so I just canʹt say.

MAYBE THEY REACH A COMPROMISE SOLUTION. THE INVENTOR MIGHT BE HAPPY TO SETTLE FOR, SAY,

JUST ONE PROVINCE OF CHINA.

Yes, that would be a good result. And maybe an even better parable for the twenty‐first century.

‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐

C H A P T E R T W O

THE INTELLIGENCE OF

EVOLUTION

Hereʹs another critical question for understanding the twenty‐first century: Can an intelligence create another intelligence more intelligent than itself?

Letʹs first consider the intelligent process that created us: evolution.

Evolution is a master programmer. It has been prolific, designing millions of species of breathtaking diversity and

ingenuity. And thatʹs just here on Earth. The software programs have been all written down, recorded as digital data in the chemical structure of an ingenious molecule called deoxyribonucleic acid, or DNA. DNA was first described by

J. D. Watson and E H. C. Crick in 1953 as a double helix consisting of a twisting pair of strands of polynucleotides with two bits of information encoded at each ledge of a spiral staircase, encoded by the choice of nucleotides. [1] This master ʺread onlyʺ memory controls the vast machinery of life.

Supported by a twisting sugar‐phosphate backbone, the DNA molecule consists of between several dozen and several million rungs, each of which is coded with one nucleotide letter drawn from a four‐letter alphabet of base pairs (adenine‐thymine, thymine‐adenine, cytosine‐guanine, and guanine‐cytosine). Human DNA is a long

molecule—it would measure up to six feet in length if stretched out‐but it is packed into an elaborate coil only 1/2500 of an inch across.

The mechanism to peel off copies of the DNA code consists of other special machines: organic molecules called enzymes, which split each base pair and then assemble two identical DNA molecules by rematching the broken base

pairs. Other little chemical machines then verify the validity of the copy by checking the integrity of the base‐pair matches. The error rate of these chemical information‐processing transactions is about one error in a billion base‐pair replications. There are further redundancy and error‐correction codes built into the data itself, so meaningful mistakes are rare. Some mistakes do get through, most of which cause defects in a single cell. Mistakes in an early fetal cell may cause birth defects in the newborn organism. Once in a long while such defects offer an advantage, and this new encoding may eventually be favored through the enhanced survival of that organism and its offspring.

The DNA code controls the salient details of the construction of every cell in the organism, including the shapes

and processes of the cell, and of the organs comprised of the cells. In a process called translation, other enzymes translate the coded DNA information by building proteins. It is these proteins that define the structure, behavior, and intelligence of each cell, and of the organism. [2]

This computational machinery is at once remarkably complex and amazingly simple. Only four base pairs provide

the data storage for the complexity of all the millions of life‐forms on Earth, from primitive bacteria to human beings.

The ribosomes—little tape‐recorder molecules—read the code and build proteins from only twenty amino acids. The

synchronized flexing of muscle cells, the intricate biochemical interactions in our blood, the structure and functioning of our brains, and all of the other diverse functions of the Earthʹs creatures are programmed in this efficient code.

The genetic information‐processing appliance is an existence proof of nano‐engineering (building machines atom

by atom), because the machinery of life indeed takes place on the atomic level. Tiny bits of molecules consisting of just dozens of atoms encode each bit and perform the transcription, error detection, and correction functions. The actual building of the organic stuff is conducted atom by atom with the building of the amino acid chains.

This is our understanding of the hardware of the computational engine driving life on Earth. We are just beginning, however, to unravel the software. While prolific, evolution has been a sloppy programmer. It has left us

the object code (billions of bits of coded data), but there is no higher‐level source code (statements in a language we can understand), no explanatory comments, no ʺhelpʺ file, no documentation, and no user manual. Through the Human Genome Project, we are in the process of writing down the 6‐billion‐bit code for the human genetic code, and

are capturing the code for thousands of other species as well. [3] But reverse engineering the genome code—

understanding how it works—is a slow and laborious process that we are just beginning. As we do this, however, we

are learning the information‐processing basis of disease, maturation, and aging, and are gaining the means to correct and refine evolutionʹs unfinished invention.

In addition to evolutionʹs lack of documentation, it is also a very inefficient programmer. Most of the code—97

percent according to current estimates—does not compute; that is, most of the sequences do not produce proteins and

appear to be useless. That means that the active part of the code is only about 23 megabytes, which is less than the code for Microsoft Word. The code is also replete with redundancies. For example, an apparently meaningless sequence called Alu, comprising 300 nucleotide letters, occurs 300,000 times in the human genome, representing more

than 3 percent of our genetic program.

The theory of evolution states that programming changes are introduced essentially at random. The changes are

evaluated for retention by survival of the entire organism and its ability to reproduce. Yet the genetic program controls not just the one characteristic being ʺexperimentedʺ with, but millions of other features as well, Survival of the fittest appears to be a crude technique capable of concentrating on one or at most a few characteristics at a time.

Since the vast majority of changes make things worse, it may seem surprising that this technique works at all.

This contrasts with the conventional human approach to computer programming in which changes are designed

with a purpose in mind, multiple changes may be introduced at a time, and the changes made are tested by focusing

in on each change, rather than by overall survival of the program. If we attempted to improve our computer programs the way that evolution apparently improves its design, our programs would collapse from increasing randomness.

It is remarkable that by concentrating on one refinement at a time, such elaborate structures as the human eye could have been designed. Some observers have postulated that such intricate design is impossible through the incremental‐refinement method that evolution uses. A design as intricate as the eye or the heart would appear to require a design methodology in which it was designed all at once.

However, the fact that designs such as the eye have many interacting aspects does not rule out its creation through a design path comprising one small refinement at a time. In utero, the human fetus appears to go through a

process of evolution, although whether this is a corollary of the phases of evolution that led to our subspecies is not universally accepted. Nonetheless, most medical students learn that ontogeny (fetal development) recapitulates phylogeny (evolution of a genetically related group of organisms, such as a phylum). We appear to start out in the womb with similarities to a fish embryo, progress to an amphibian, then a mammal, and so on. Regardless of the phylogeny controversy, we can see in the history of evolution the intermediate design drafts that evolution went through in designing apparently ʺcompleteʺ mechanisms such as the human eye. Even though evolution focuses on

just one issue at a time, it is indeed capable of creating striking designs with many interacting parts.

There is a disadvantage, however, to evolutionʹs incremental method of design: It canʹt easily perform complete redesigns. It is stuck, for example, with the very slow computing speed of the mammalian neuron. But there is a way

around this, as we will explore in chapter 6, ʺBuilding New Brains.ʺ

The Evolution of Evolution

There are also certain ways in which evolution has evolved its own means for evolution. The DNA‐based coding itself

is clearly one such means. Within the code, other means have developed. Certain design elements, such as the shape

of the eye, are coded in a way that makes mutations less likely. The error detection and correction mechanisms built into the DNA‐based coding make changes in these regions very unlikely. This enforcement of design integrity for certain critical features evolved because they provide an advantage—changes to these characteristics are usually catastrophic. Other design elements, such as the number and layout of light‐sensitive rods and cones in the retina, have fewer design enforcements built into the code. If we examine the evolutionary record, we do see more recent change in the layout of the retina than in the shape of the eyeball itself. So in certain ways, the strategies of evolution have evolved. The Law of Accelerating Returns says that it should, for evolving its own strategies is the primary way that an evolutionary process builds on itself.

By simulating evolution, we can also confirm the ability of evolutionʹs ʺone step at a timeʺ design process to build ingenious designs of many interacting elements. One example is a software simulation of the evolution of life‐forms

called Network Tierra designed by Thomas Ray, a biologist and rain forest expert. [4] Rayʹs creaturesʺ are software simulations of organisms in which each ʺcellʺ has its own DNA‐like genetic code. The organisms compete with each

other for the limited simulated space and energy resources of their simulated environment.

A unique aspect of this artificial world is that the creatures have free rein of 150 computers on the Internet, like ʺislands in an archipelagoʺ according to Ray. One of the goals of this research is to understand how the explosion of diverse body plans that occurred on Earth during the Cambrian period some 570 million years ago was possible. ʺTo

watch evolution unfold is a thrill,ʺ Ray exclaimed as he watched his creatures evolve from unspecialized single‐celled organisms to multicellular organisms with at least modest increases in diversity. Ray has reportedly identified the equivalent of parasites, immunities, and crude social interaction. One of the acknowledged limitations in Rayʹs simulation is a lack of complexity in his simulated environment. One insight of this research is the need for a suitably chaotic environment as a key resource needed to push evolution along, a resource in ample supply in the real world.

A practical application of evolution is the area of evolutionary algorithms, in which millions of evolving computer

programs compete with one another in a simulated evolutionary process, thereby harnessing the inherent intelligence

of evolution to solve real‐world problems. Since the intelligence of evolution is weak, we focus and amplify it the same way a lens concentrates the sparse rays of the sun. Weʹll talk more about this powerful approach to software design in chapter 4, ʺA New Form of Intelligence on Earth.ʺ

The Intelligence Quotient of Evolution

Let us first praise evolution. It has created a plethora of designs of indescribable beauty, complexity, and elegance, not to mention effectiveness. Indeed, some theories of aesthetics define beauty as the degree of success in emulating the natural beauty that evolution has created. It created human beings with their intelligent human brains, beings smart enough to create their own intelligent technology.

Its intelligence seems vast. Or is it? It has one deficiency—evolution is very slow. While it is true that it has created some remarkable designs, it has taken an extremely long period of time to do so. It took eons for the process to get started and, for the evolution of life‐forms, eons meant billions of years. Our human forebears also took eons to get started in their creation of technology, but for us eons meant only tens of thousands of years, a distinct improvement.

Is the length of time required to solve a problem or create an intelligent design relevant to an evaluation of intelligence?

The authors of our human intelligence‐quotient tests seem to think so, which is why most IQ tests are timed. We

regard solving a problem in a few seconds better than solving it in a few hours or years. Periodically, the timed aspect of IQ tests gives rise to controversy, but it shouldnʹt. The speed of an intelligent process is a valid aspect of its evaluation. If a large, hunched, catlike animal perched on a tree limb suddenly appears out of my left cornea, designing an evasive tactic in a second or two is preferable to pondering the challenge for a few hours. If your boss asks you to design a marketing program, she probably doesnʹt want to wait a hundred years. Viking Penguin wanted

this book delivered before the end of the second, not the third, millennium. [5]

Evolution has achieved an extraordinary record of design, yet has taken an extraordinarily long period of time to

do so. If we factor its achievements by its ponderous pace, I believe we need to conclude that its intelligence quotient is only infinitesimally greater than zero. An IQ of only slightly greater than zero (defining truly arbitrary behavior as zero) is enough for evolution to beat entropy and create wonderful designs, given enough time, in the same way that

an ever so slight asymmetry in the balance between matter and antimatter was enough to allow matter to almost completely overtake its antithesis.

Evolution is thereby only a quantum smarter than completely unintelligent behavior. The reason that our human‐

created evolutionary algorithms are effective is that we speed up time a million‐ or billionfold, so as to concentrate and focus its otherwise diffuse power. in contrast, humans are a lot smarter than just a quantum greater than total stupidity (of course, your view may vary depending on the latest news reports).

THE LIFE CYCLE OF A TECHNOLOGY

What does the Law of Time and Chaos say about the end of the Universe? One theory is that the Universe

will continue its expansion forever. Alternatively, if there's enough stuff, then the force of the Universe's own

gravity will stop the expansion, resulting in a final "big crunch". Unless, of course, there's an antigravity force.

Or if the "cosmological constant" Einstein's "fudge factor," is big enough. I've had to rewrite this paragraph three times over the past several months because the physicists can't make up their minds. The latest

speculation apparently favors indefinite expansion.

Personally, I prefer the idea of the Universe closing in again on itself as more aesthetically pleasing. That

would mean that the Universe would reverse its expansion and reach a singularity again. We can speculate

that it would again expand and contract in an endless cycle. Most things in the Universe seem to move in

cycles, so why not the Universe itself? The Universe could then be regarded as a tiny wave particle in some

other really big Universe. And that big Universe would itself be a vibrating particle in yet another even bigger

Universe. Conversely, the tiny wave particles in our Universe can each be regarded as little Universes with

each of their vibrations lasting fractions of a trillionth of a second in our Universe representing billions of years of expansion and contraction in that little Universe. And each particle in those little Universes could

be. . . okay, so I'm getting a little carried away.

How to Unsmash a Cup

Let's say the Universe reverses its expansion. The phase of contraction has the opposite characteristics of

the phase of expansion that we are now in. Clearly, chaos in the Universe will be decreasing as the Universe

gets smaller. I can see that this is the case by considering the endpoint, which is again a singularity with no

size, and therefore no disorder.

We regard time as moving in one direction because processes in time are not generally reversible. If we

smash a cup, we find it difficult to unsmash it. The reason for this has to do with the second law of thermodynamics. Since overall entropy may increase but can never decrease, time has directionality.

Smashing a cup increases randomness. Unsmashing the cup would violate the second law of thermodynamics.

Yet in the contracting phase of the Universe, chaos is decreasing, so we should regard time's direction as

reversed.

This reverses all processes in time, turning evolution into devolution. Time moves backward during the

second half of the Universe's time span. So if you smash a favorite cup, try to do it as we approach the midpoint of the Universe's time span. You should find the cup coming together again as we cross over into the

Universe's contracting phase of its time span.

Now if time is moving backward during this contracting phase, what we (living in the expanding phase of

the Universe) look forward to as the big crunch is actually a big bang to the creatures living (in reverse time)

during the contracting phase. Consider the perspective of these time-reversed creatures living in what we

regard as the contracting phase of the Universe. From their perspective, what we regard as the second phase

is actually their first phase, with time going in the reverse direction. So from their perspective, the Universe

during this phase is expanding, not contracting. Thus, if the "Universe will eventually contract" theory is correct, it would be proper to say that the Universe is bounded in time by two big bangs, with events flowing

in opposite directions in time from each big bang, meeting in the middle. Creatures living in both phases can

say that they are in the first half of the Universe's history, since both phases will appear to be the first half to creatures living in those phases. And in both halves of the time span of the Universe, the Law of Entropy, the

Law of Time and Chaos, and the Law of Accelerating Returns (as applied to evolution) all hold true, but with

time moving in opposite directions. [6]

The End of Time

And what if the Universe expands indefinitely? This would mean that the stars and galaxies will eventually

exhaust their energy, leaving a Universe of dead stars expanding forever. That would leave a big mess—lots

of randomness—and no meaningful order, so according to the Law of Time and Chaos, time would gradually

come to a halt. Consistently, if a dead Universe means that there will be no conscious beings to appreciate it,

then both the Quantum Mechanical and the Eastern subjective viewpoints appear to imply that the Universe

would cease to exist.

In my view, neither conclusion is quite right. At the end of this book, I'll share with you my perspective of

what happens at the end of the Universe. But don't look ahead.

Consider the sophistication of our creations over a period of only a few thousand years. Ultimately, our machines

will match and exceed human intelligence, no matter how one cares to define or measure this elusive term. Even if my time frames are off, few serious observers who have studied the issue claim that computers will never achieve and surpass human intelligence. Humans will have vastly beaten evolution, therefore, achieving in a matter of only thousands of years as much or more than evolution achieved in billions of years. So human intelligence, a product of evolution, is far more intelligent than its creator.

And so, too, will the intelligence that we are creating come to exceed the intelligence of its creator. That is not the case today. But as the rest of this book will argue, it will take place very soon—in evolutionary terms, or even in terms of human history—and within the lifetimes of most of the readers of this book. The Law of Accelerating Returns predicts it. And furthermore, it predicts that the progression in the capabilities of human‐created machines will only continue to accelerate. The human species creating intelligent technology is another example of evolutionʹs progress building on itself. Evolution created human intelligence. Now human intelligence is designing intelligent machines at a far faster pace, Yet another example will be when our intelligent technology takes control of the creation of yet more intelligent technology than itself.

‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐

NOW ON THIS TIME THING, WE START OUT AS A SINGLE CELL, RIGHT?

Thatʹs right.

AND THEN WE DEVELOP INTO SOMETHING RESEMBLING A FISH, THEN AN AMPHIBIAN, ULTIMATELY A

MAMMAL, AND SO ON—YOU KNOW ONTOGENY RECAPITULATES—

Phylogeny, yes.

SO THATʹS JUST LIKE EVOLUTION, RIGHT? WE GO THROUGH EVOLUTION IN OUR MOTHERʹS WOMB.

Yes, thatʹs the theory. The word phylogeny is derived from phylum . . .

BUT YOU SAID THAT IN EVOLUTION, TIME SPEEDS UP. YET IN AN ORGANISMʹS LIFE, TIME SLOWS DOWN.

Ah yes, a good catch, I can explain.

IʹM ALL EARS.

The Law of Time and Chaos states that, in a process the average time interval between salient events is proportional to the amount of chaos in the process. So we have to be careful to define precisely what constitutes the process. It is true that evolution started out with single cells. And we also start out as a single cell. Sounds similar, but from the perspective of the Law of Time and Chaos, itʹs not. We start out as just one cell. When evolution was at the point of single cells, it was not one cell, but many trillions of cells. And these cells were just swirling about; thatʹs a lot of chaos and not much order. The primary movement of evolution has been toward greater order. In the development of an

organism, however, the primary movement is toward greater chaos—the grown organism has far greater disorder than the single cell it started out as. It draws that chaos from the environment as its cells multiply, and as it has encounters with its environment. Is that clear?

UH, SURE. BUT DONʹT QUIZ ME ON IT. I THINK THE GREATEST CHAOS IN MY LIFE WAS WHEN I LEFT

HOME TO GO TO COLLEGE. THINGS ARE JUST BEGINNING TO SETTLE DOWN NOW AGAIN.

I never said the Law of Time and Chaos explains everything.

OKAY, BUT EXPLAIN THIS. YOU SAID THAT EVOLUTION WASNʹT VERY SMART, OR AT LEAST WAS RATHER

SLOW‐WITTED. BUT ARENʹT SOME OF THESE VIRUSES AND BACTERIA USING EVOLUTION TO OUTSMART

US?

Evolution operates on different timescales. If we speed it up, it can be smarter than us. Thatʹs the idea behind software programs that apply a simulated evolutionary process to solving complex problems. Pathogen evolution is

another example of the ability of evolution to amplify and focus its diffuse powers. After all, a viral generation can take place in minutes or hours compared to decades for the human race. However, I do think we will ultimately prevail against the evolutionary tactics of our disease agents.

IT WOULD BE HELPFUL IF WE STOPPED OVERUSING ANTIBIOTICS.

Yes, and that brings up another issue, which is whether the human species is more intelligent than its individual members.

AS A SPECIES, WEʹRE CERTAINLY PRETTY SELF‐DESTRUCTIVE.

Thatʹs often true. Nonetheless, we do have a profound species‐wide dialogue going on. In other species, the individuals may communicate in a small clan or colony, but there is little, if any, sharing of information beyond that, and little apparent accumulated knowledge. The human knowledge base of science, technology, art, culture, and history has no parallel in any other species.

WHAT ABOUT WHALE SONGS?

Hmmm. I guess we just donʹt know what theyʹre singing about.

AND WHAT ABOUT THOSE APES THAT YOU CAN TALK TO ON THE INTERNET?

Well, on April 27, 1998, Koko the gorilla did engage in what her mentor, Francine Patterson, called the first interspecies chat, on America Online. [8] But Kokoʹs critics intimate that Patterson is the brains behind Koko.

BUT PEOPLE WERE ABLE TO CHAT WITH KOKO ONLINE.

Yes. However, Koko is rusty on her typing skills, so questions were interpreted by Patterson into American Sign Language, which Koko observed, and then Kokoʹs signed responses were interpreted by Patterson back into typed responses. I guess the suspicion is that Patterson is like those language interpreters from the diplomatic corps—one wonders if youʹre communicating with the dignitary, in this case Koko, or the interpreter.

ISNʹT IT CLEAR IN GENERAL THAT THE APES ARE COMMUNICATING? THEYʹRE NOT THAT DIFFERENT

FROM US GENETICALLY, AS YOU SAID.

Thereʹs clearly some form of communication going on. The question being addressed by the linguistics community is

whether the apes can really deal with the levels of symbolism embodied in human language. I think that Dr. Emily

Savage‐Rumbaugh of Georgia State University, who runs a fifty‐five‐acre ape‐communication laboratory, made a fair

statement recently when she said, ʺThey. [her critics] are asking Kanzi [one of her ape subjects] to do everything that humans do, which is specious. Heʹll never do that. It still doesnʹt negate what he can do.ʺ

WELL, IʹM ROOTING FOR THE APES.

Yes, it would be nice to have someone to talk to when we get tired of other humans.

SO WHY DONʹT YOU JUST HAVE A LITTLE TALK WITH YOUR COMPUTER?

I do talk to my computer, and it dutifully takes down what I say to it. And I can give commands by speaking in natural language to Microsoft Word, [9] but itʹs still not a very engaging conversationalist. Remember, computers are still a million times simpler than the human brain, so itʹs going to be a couple of decades yet before they become comforting companions.

BACK ON THIS INDIVIDUAL‐VERSUS‐GROUP‐INTELLIGENCE ISSUE, ARENʹT MOST ACHIEVEMENTS IN ART

AND SCIENCE ACCOMPLISHED BY INDIVIDUALS? YOU KNOW, YOU CANʹT WRITE A SONG OR PAINT A

PICTURE BY COMMITTEE.

Actually, a lot of important science and technology is done in large groups.

BUT ARENʹT THE REAL BREAKTHROUGHS DONE BY INDIVIDUALS?

In many cases, thatʹs true. Even then, the critics and the technology conservatives, even the intolerant ones, do play an important screening role. Not every new and different idea is worth pursuing. Itʹs worthwhile having some barriers

to break through.

Overall, the human enterprise is clearly capable of achievements that go far beyond what we can do as individuals.

HOW ABOUT THE INTELLIGENCE OF A LYNCH MOB?

I suppose a group is not always more intelligent than its members.

WELL, I HOPE THOSE TWENTY‐FIRST‐CENTURY MACHINES DONʹT EXHIBIT OUR MOB PSYCHOLOGY.

Good point.

I MEAN, I WOULDNʹT WANT TO END UP IN A DARK ALLEY WITH A BAND OF UNRULY MACHINES.

We should keep that in mind as we design our future machines. Iʹll make a little note . . .

YES, PARTICULARLY BEFORE THE MACHINES START, AS YOU SAID, DESIGNING THEMSELVES.

‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐

C H A P T E R T H R E E

OF MIND AND MACHINES

PHILOSOPHICAL MIND EXPERIMENTS

ʺI am lonely and bored; please keep me company.ʺ

If your computer displayed this message on its screen, would that convince you that your notebook is conscious

and has feelings?

Well, clearly no, itʹs rather trivial for a program to display such a message. The message actually comes from the

presumably human author of the program that includes the message. The computer is just a conduit for the message,

like a book or a fortune cookie.

Suppose we add speech synthesis to the program and have the computer speak its plaintive message. Have we changed anything?

While we have added technical complexity to the program, and some humanlike communication means, we still

do not regard the computer as the genuine author of the message.

Suppose now that the message is not explicitly programmed, but is produced by a game‐playing program that contains a complex model of its own situation. The specific message may never have been foreseen by the human creators of the program. It is created by the computer from the state of its own internal model as it interacts with you, the user. Are we getting closer to considering the computer as a conscious, feeling entity?

Maybe just a tad. But if we consider contemporary game software, the illusion is probably short‐lived as we gradually figure out the methods and limitations behind the computerʹs ability for small talk.

Now suppose the mechanisms behind the message grow to become a massive neural net, built from silicon but based on a reverse engineering of the human brain. Suppose we develop a learning protocol for this neural net that

enables it to learn human language and model human knowledge. Its circuits are a million times faster than human

neurons, so it has plenty of time to read all human literature and develop its own conceptions of reality, Its creators do not tell it how to respond to the world. Suppose now that it says, ʺIʹm lonely . . .ʺ

At what point do we consider the computer to be a conscious agent with its own free will? These have been the

most vexing problems in philosophy since the Platonic dialogues illuminated the inherent contradictions in our conception of these terms.

Letʹs consider the slippery slope from the opposite direction. Our friend Jack (circa some time in the twenty‐first

century) has been complaining of difficulty with his hearing. A diagnostic test indicates he needs more than a conventional hearing aid, so he gets a cochlear implant. Once used only by people with severe hearing impairments,

these implants are now common to correct the ability of people to hear across the entire sonic spectrum. This routine surgical procedure is successful, and Jack is pleased with his improved hearing.

Is he still the same person?

Well, sure he is. People have cochlear implants circa 1999. We still regard them as the same person.

Now (back to circa sometime in the twenty‐first century), Jack is so impressed with the success of his cochlear implants that he elects to switch on the built‐in phonic‐cognition circuits, which improve overall auditory perception.

These circuits are already built in so that he does not require another insertion procedure should he subsequently decide to enable them. By activating these neural‐replacement circuits, the phonics‐detection nets built into the implant bypass his own aging neural‐phonics regions. His cash account is also debited for the use of this additional neural software. Again, Jack is pleased with his improved ability to understand what people are saying.

Do we still have the same Jack? Of course; no one gives it a second thought.

Jack is now sold on the benefits of the emerging neural‐implant technology. His retinas are still working well, so

he keeps them intact (although he does have permanently implanted retinal‐imaging displays in his corneas to view

virtual reality), but he decides to try out the newly introduced image‐processing implants, and is amazed how much

more vivid and rapid his visual perception has become.

Still Jack? Why, sure.

Jack notices that his memory is not what it was, as he struggles to recall names, the details of earlier events, and so on. So heʹs back for memory implants. These are amazing‐memories that had grown fuzzy with time are now as clear

as if they had just happened. He also struggles with some unintended consequences as he encounters unpleasant memories that he would have preferred to remain dim.

Still the same Jack? Clearly he has changed in some ways and his friends are impressed with his improved faculties. But he has the same self‐deprecating humor, the same silly grin—yes, itʹs still the same guy.

So why stop here? Ultimately Jack will have the option of scanning his entire brain and neural system (which is

not entirely located in the skull) and replacing it with electronic circuits of far greater capacity, speed, and reliability.

Thereʹs also the benefit of keeping a backup copy in case anything happened to the physical Jack.

Certainly this specter is unnerving, perhaps more frightening than appealing. And undoubtedly it will be controversial for a long time (although according to the Law of Accelerating Returns, a ʺlong timeʺ is not as long as it used to be). Ultimately, the overwhelming benefits of replacing unreliable neural circuits with improved ones will be too compelling to ignore.

Have we lost Jack somewhere along the line? Jackʹs friends think not. Jack also claims that heʹs the same old guy,

just newer. His hearing, vision, memory, and reasoning ability have all improved, but itʹs still the same Jack.

However, letʹs examine the process a little more carefully Suppose rather than implementing this change a step at

a time as in the above scenario, Jack does it all at once. He goes in for a complete brain scan and has the information from the scan instantiated (installed) in an electronic neural computer. Not one to do things piecemeal, he upgrades his body as well. Does making the transition at one time change anything? Well, whatʹs the difference between changing from neural circuits to electronic/photonic ones all at once, as opposed to doing it gradually? Even if he makes the change in one quick step, the new Jack is still the same old Jack, right?

But what about Jackʹs old brain and body? Assuming a noninvasive scan, these still exist. This is Jack! Whether the

scanned information is subsequently used to instantiate a copy of Jack does not change the fact that the original Jack still exists and is relatively unchanged. Jack may not even be aware of whether or not a new Jack is ever created. And for that matter, we can create more than one new Jack.

If the procedure involves destroying the old Jack once we have conducted some quality‐assurance steps to make

sure the new Jack is fully functional, does that not constitute the murder (or suicide) of Jack?

Suppose the original scan of Jack is not noninvasive, that it is a ʺdestructiveʺ scan. Note that technologically speaking, a destructive scan is much easier—in fact we have the technology today (1999) to destructively scan frozen neural sections, ascertain the interneuronal wiring, and reverse engineer the neuronsʹ parallel digital‐analog algorithms. [1] We donʹt yet have the bandwidth to do this quickly enough to scan anything but a very small portion

of the brain. But the same speed issue existed for another scanning project—the human genome scan—when the project began. At the speed that researchers were able to scan and sequence the human genetic code in 1991, it would have taken thousands of years to complete the project. Yet a fourteen‐year schedule was set, which it now appears will be successfully realized. The Human Genome Project deadline obviously made the (correct) assumption that the

speed of our methods for sequencing DNA codes would greatly accelerate over time. The same phenomenon will hold true for our human‐brain‐scanning projects. We can do it now—very slowly—but that speed, like most everything else governed by the Law of Accelerating Returnsʹ will get exponentially faster in the years ahead.

Now suppose as we destructively scan Jack, we simultaneously install this information into the new Jack. We can

consider this a process of ʺtransferringʺ Jack to his new brain and body. So one might say that Jack is not destroyed, just transferred into a more suitable embodiment. But is this not equivalent to scanning Jack noninvasively, subsequently instantiating the new Jack and then destroying the old Jack? if that sequence of steps basically amounts to killing the old Jack, then this process of transferring Jack in a single step must amount to the same thing. Thus we can argue that any process of transferring Jack amounts to the old Jack committing suicide, and that the new Jack is not the same person.

The concept of scanning and reinstantiation of the information is familiar to us from the fictional ʺbeam me upʺ

teleportation technology of Star Trek. In this fictional show, the scan and reconstitution is presumably on a nanoengineering scale, that is, particle by particle, rather than just reconstituting the salient algorithms of neural-information processing envisioned above. But the concept is very similar. Therefore, it can be argued that the Star Trek characters are committing suicide each time they teleport, with new characters being created. These new characters, while essentially identical, are made up of entirely different particles, unless we imagine that it is the actual particles being beamed to the new destination. Probably it would be easier to beam just the information and use local particles to instantiate the new embodiments. Should it matter? Is consciousness a function of the actual particles or just of their pattern and organization?

We argue that consciousness and identity are not a function of the specific particles at all, because our own particles are constantly changing. On a cellular basis, we change most of our cells (although not our brain cells) over a period of several years. [2] On an atomic level, the change is much faster than that, and does include our brain cells.

We are not at all permanent collections of particles. It is the patterns of matter and energy that are semipermanent (that is, changing only gradually), but our actual material content is changing constantly, and very quickly. We are rather like the patterns that water makes in a stream. The rushing water around a formation of rocks makes a particular, unique pattern. This pattern may remain relatively unchanged for hours, even years. Of course, the actual material constituting the pattern—the water—is totally replaced within milliseconds. This argues that we should not

associate our fundamental identity with specific sets of particles, but rather the pattern of matter and energy that we represent. This, then, would argue that we should consider the new Jack to be the same as the old Jack because the

pattern is the same. (One might quibble that while the new Jack has similar functionality to the old Jack, he is not identical. However, this just dodges the essential question, because we can reframe the scenario with a nanoengineering technology that copies Jack atom by atom rather than just copying his salient information-processing algorithms.)

Contemporary philosophers seem to be partial to the ʺidentity from patternʺ argument. And given that our pattern changes only slowly in comparison to our particles, there is some apparent merit to this view. But the counter to that argument is the ʺold Jackʺ waiting to be extinguished after his ʺpatternʺ has been scanned and installed in a new computing medium. Old Jack may suddenly realize that the ʺidentity from patternʺ argument is flawed.

MIND AS MACHINE VERSUS MIND BEYOND MACHINE

Science cannot solve the ultimate mystery of nature because in the last analysis we are part of the mystery we are trying to solve.

—Max Planck

Is all what we see or seem, but a dream within a dream?

—Edgar Allan Poe

What if everything is an illusion and nothing exists? in that case, I definitely overpaid for my carpet.

—Woody Allen

The Difference Between Objective and Subjective Experience

Can we explain the experience of diving into a lake to someone who has never been immersed in water? How about

the rapture of sex to someone who has never had erotic feelings (assuming one could find such a person)? Can we explain the emotions evoked by music to someone congenitally deaf? A deaf person will certainly learn a lot about music: watching people sway to its rhythm, reading about its history and role in the world. But none of this is the same as experiencing a Chopin prelude.

If I view light with a wavelength of 0.000075 centimeters, I see red. Change the wavelength to 0.000035 centimeters

and I see violet. The same colors can also be produced by mixing colored lights. If red and green lights are properly combined, I see yellow. Mixing pigments works differently from changing wavelengths, however, because pigments

subtract colors rather than add them. Human perception of color is more complicated than mere detection of electro‐

magnetic frequencies, and we still do not fully understand it. Yet even if we had a fully satisfactory theory of our mental process, it would not convey the subjective experience of redness, or yellowness. I find language inadequate

for expressing my experience of redness. Perhaps I can muster some poetic reflections about it, but unless youʹve had the same encounter, it is really not possible for me to share my experience.

So how do I know that you experience the same thing when you talk about redness? Perhaps you experience red

the way I experience blue, and vice versa. How can we test our assumptions that we experience these qualities the same way? Indeed, we do know there are some differences. Since I have what is misleadingly labeled ʺred‐green color‐blindness, there are shades of color that appear identical to me that appear different to others. Those of you without this disability apparently have a different experience than I do. What are you all experiencing? Iʹll never know.

Giant squids are wondrous sociable creatures with eyes similar in structure to humans (which is surprising, given

their very different phylogeny) and possessing a complex nervous system. A few fortunate human scientists have developed relationships with these clever cephalopods. So what is it like to be a giant squid? When we see it respond to danger and express behavior that reminds us of a human emotion, we infer an experience that we are familiar with.

But what of their experiences without a human counterpart?

Or do they have experiences at all? Maybe they are just like ʺmachinesʺ—responding programmatically to stimuli

in their environment. Maybe there is no one home. Some humans are of this view—only humans are conscious; animals just respond to the world by ʺinstinct,ʺ that is, like a machine. To many other humans, this author included, it seems apparent that at least the more evolved animals are conscious creatures, based on empathetic perceptions of animals expressing emotions that we recognize as correlates of human reactions. Yet even this is a human‐centric way of thinking in that it only recognizes subjective experiences with a human equivalent. Opinion on animal consciousness is far from unanimous. Indeed, it is the question of consciousness that underlies the issue of animal rights. Animal rights disputes about whether or not certain animals are suffering in certain situations result from our general inability to experience or measure the subjective experience of another entity. [3]

The not uncommon view of animals being ʺjust machinesʺ is disparaging to both animals and machines. Machines

today are still a million times simpler than the human brain. Their complexity and subtlety today is comparable to that of insects. There is relatively little speculation on the subjective experience of insects, although again, there is no convincing way to measure this. But the disparity in the capabilities of machines and the more advanced animals, such as the Homo sapiens sapiens subspecies, will be short‐lived. The unrelenting advance of machine intelligence, which we will visit in the next several chapters, will bring machines to human levels of intricacy and refinement and beyond within several decades. Will these machines be conscious?

And what about free will‐will machines of human complexity make their own decisions, or will they just follow a

program, albeit a very complex one? Is there a distinction to be made here?

The issue of consciousness lurks behind other vexing issues. Take the question of abortion. Is a fertilized egg cell a conscious human being? How about a fetus one day before birth?

Itʹs hard to say that a fertilized egg is conscious or that a full‐term fetus is not. Pro‐choice and pro‐life activists are afraid of the slippery slope in between these two definable ledges. And the slope is genuinely slippery—a human fetus develops a brain quickly, but itʹs not immediately recognizable as a human brain. The brain of a fetus becomes more humanlike gradually. The slope has no ridges to stand on. Admittedly, other hard‐to‐define questions such as

human dignity come into the debate, but fundamentally, the contention concerns sentience. In other words, when do

we have a conscious entity?

Some severe forms of epilepsy have been successfully treated by surgical removal of the impaired half of the brain. This drastic surgery needs to be done during childhood before the brain has fully matured. Either half of the brain can be removed, and if the operation is successful the child will grow up somewhat normally. Does this imply

that both halves of the brain have their own consciousness? Perhaps there are two of us in each intact brain who hopefully get along with each other. Maybe there is a whole panoply of consciousnesses lurking in one brain each with a somewhat different perspective. Is there a consciousness that is aware of mental processes that we consider unconscious?

I could go on for a long time with such conundrums. And indeed, people have been thinking about these quandaries for a long time. Plato, for one, was preoccupied with these issues. In the Phaedo, The Republic, and Theaetetus, Plato expresses the profound paradox inherent in the concept of consciousness and a humanʹs apparent ability to freely choose. On the one hand, human beings partake of the natural world and are subject to its laws. Our brains are natural phenomena and thus must follow the cause‐and‐effect laws manifest in machines and other lifeless

creations of our species. Plato was familiar with the potential complexity of machines and their ability to emulate elaborate logical processes. On the other hand, cause‐and‐effect mechanics, no matter how complex, should not, according to Plato, give rise to self‐awareness or consciousness. Plato first attempts to resolve this conflict in his theory of the Forms: Consciousness is not an attribute of the mechanics of thinking, but rather the ultimate reality of human existence. Our consciousness, or ʺsoul,ʺ is immutable and unchangeable. Thus, our mental interaction with the

physical world is on the level of the ʺmechanicsʺ of our complicated thinking process. The soul stands aloof.

But no, this doesnʹt really work, Plato realizes. If the soul is unchanging, then it cannot learn or partake in reason, because it would need to change to absorb and respond to experience. Plato ends up dissatisfied with positing consciousness in either place: the rational processes of the natural world or the mystical level of the ideal Form of the self or soul. [4]

The concept of free will reflects an even deeper paradox. Free will is purposeful behavior and decision making.

Plato believed in a ʺcorpuscular physicsʺ based on fixed and determined rules of cause and effect. But if human decision making is based on such predictable interactions of basic particles, our decisions must also be predetermined. That would contradict human freedom to choose. The addition of randomness into the natural laws is

a possibility, but it does not solve the problem. Randomness would eliminate the predetermination of decisions and

actions, but it contradicts the purposefulness of free will, as there is nothing purposeful in randomness.

Okay, letʹs put free will in the soul. No, that doesnʹt work either. Separating free will from the rational cause‐and-effect mechanics of the natural world would require putting reason and learning into the soul as well, for otherwise the soul would not have the means to make meaningful decisions. Now the soul is itself becoming a complex machine, which contradicts its mystical simplicity.

Perhaps this is why Plato wrote dialogues. That way he could passionately express both sides of these contradictory positions. I am sympathetic to Platoʹs dilemma: None of the obvious positions is really sufficient. A deeper truth can be perceived only by illuminating the opposing sides of a paradox.

Plato was certainly not the last thinker to ponder these questions. We can identify several schools of thought on

these subjects, none of them very satisfactory.

The ʺConsciousness is Just a Machine Reflecting on Itselfʺ School

A common approach is to deny the issue exists: Consciousness and free will are just illusions induced by the ambiguities of language. A slight variation is that consciousness is not exactly an illusion, but just another logical process. It is a process responding and reacting to itself. We can build that in a machine: just build a procedure that has a model of itself, and that examines and responds to its own methods. Allow the process to reflect on itself. There now you have consciousness. It is a set of abilities that evolved because self‐reflective ways of thinking are inherently more powerful.

The difficulty with arguing against the ʺconsciousness is just a machine reflecting on itselfʺ school is that this perspective is self‐consistent. But this viewpoint ignores the subjective viewpoint. It can deal with a personʹs reporting of subjective experience, and it can relate reports of subjective experiences not on to outward behavior but to patterns of neural firings as well. And if I think about it, my knowledge of the subjective experience of anyone aside from myself is no different (to me) than the rest of my objective knowledge. I donʹt experience other peopleʹs subjective experiences; I just hear about them. So the only subjective experience this school of thought ignores is my own (that is, after all, what the term subjective experience means). And, hey, Iʹm only one person among billions of humans, trillions of potentially conscious organisms; all of whom, with just one exception, are not me.

But the failure to explain my subjective experience is a serious one. It does not explain the distinction between 0.000075 centimeter electromagnetic radiation and my experience of redness. I could learn how color perception works, how the human brain processes light, how it processes combinations of light, even what patterns of neural firing this all provokes, but it still fails to explain the essence of my experience.

The Logical Positivists [5]

I am doing my best to express what I am talking about here but unfortunately the issue is not entirely effable. D. J.

Chalmers describes the mystery of the experienced inner life as the ʺhard problemʺ of consciousness, to distinguish this issue from the ʺeasy problemʺ of how the brain works. [6] Marvin Minsky observed that ʺthereʹs something queer

about describing consciousness: Whatever people mean to say, they just canʹt seem to make it clear .ʺ That is precisely the problem, says the ʺconsciousness is just a machine reflecting on itselfʺ school—to speak of consciousness other than as a pattern of neural firings is to wander off into a mystical realm beyond any hope of verification.

This objective view is sometimes referred to as logical positivism, a philosophy codified by Ludwig Wittgenstein

in his Tractatus Logico‐Philosophicus. [7] To the logical positivists, the only things worth talking about are our direct sensory experiences, and the logical inferences that we can make therefrom. Everything else ʺwe must pass over in silence,ʺ to quote Wittgensteinʹs last statement in his treatise.

Yet Wittgenstein did not practice what he preached. Published in 1953, two years after his death, his Philosophical Investigations defined those matters worth contemplating as precisely those issues he had earlier argued should be passed over in silence. [8] Apparently he came to the view that the antecedents of his last statement in the Tractatus—

what we cannot speak about—are the only real phenomena worth reflecting upon. The late Wittgenstein heavily influenced the existentialists, representing perhaps the first time since Plato that a major philosopher was successful in illuminating such contradictory views.

I Think, Therefore I Am

The early Wittgenstein and the logical positivists that he inspired are often thought to have their roots in the philosophical investigations of René Descartes. [9] Descartesʹs famous dictum ʺI think, therefore I amʺ has often been cited as emblematic of Western rationalism. This view interprets Descartes to mean ʺI think, that is, I can manipulate logic and symbols, therefore I am worthwhile.ʺ But in my view, Descartes was not intending to extol the virtues of rational thought. He was troubled by what has become known as the mind‐body problem, the paradox of how mind

can arise from nonmind, how thoughts and feelings can arise to its limits, from the ordinary matter of the brain.

Pushing rational skepticism his statement really means ʺI think, that is, there is an undeniable mental phenomenon,

some awareness, occurring, therefore all we know for sure is that something—letʹs call it I—exists.ʺ Viewed in this way, there is less of a gap than is commonly thought between Descartes and Buddhist notions of consciousness as the

primary reality.

Before 2030, we will have machines proclaiming Descartesʹs dictum. And it wonʹt seem like a programmed response. The machines will be earnest and convincing. Should we believe them when they claim to be conscious entities with their own volition?

The ʺConsciousness Is a Different Kind of Stuffʺ School

The issue of consciousness and free will has been, of course, a major preoccupation of religious thought. Here we encounter a panoply of phenomena, ranging from the elegance of Buddhist notions of consciousness to ornate pantheons of souls, angels, and gods. In a similar category are theories by contemporary philosophers that regard consciousness as yet another fundamental phenomenon in the world, like basic particles and forces. I call this the ʺconsciousness is a different kind of stuffʺ school. To the extent that this school implies an interference by consciousness in the physical world that runs afoul of scientific experiment, science is bound to win because of its ability to verify its insights. To the extent that this view stays aloof from the material world, it often creates a level of complex mysticism that cannot be verified and is subject to disagreement. To the extent that it keeps its mysticism simple, it offers limited objective insight, although subjective insight is another matter (I do have to admit a fondness for simple mysticism).

The ʺWeʹre Too Stupidʺ School

Another approach is to declare that human beings just arenʹt capable of understanding the answer. Artificial intelligence researcher Douglas Hofstadter muses that ʺit could be simply an accident of fate that our brains are too weak to understand themselves. Think of the lowly giraffe, for instance, whose brain is obviously far below the level required for self‐understanding—yet it is remarkably similar to our brain.ʺ [10] But to my knowledge, giraffes are not known to ask these questions (of course, we donʹt know what they spend their time wondering about). In my view, if

we are sophisticated enough to ask the questions, then we are advanced enough to understand the answers.

However, the ʺweʹre too stupidʺ school points out that indeed we are having difficulty clearly formulating these questions.

A Synthesis of Views

My own view is that all of these schools are correct when viewed together, but insufficient when viewed one at a time. That is, the truth lies in a synthesis of these views. This reflects my Unitarian religious education in which we studied all the worldʹs religions, considering them ʺmany paths to the truth.ʺ Of course, my view may be regarded as the worst one of all. On its face, my view is contradictory and makes little sense. The other schools at least can claim some level of consistency and coherence.

Thinking Is as Thinking Does

Oh yes, there is one other view, which I call the ʺthinking is as thinking doesʺ school. In a 1950 paper, Alan Turing describes his concept of the Turing Test, in which a human judge interviews both a computer and one or more human

foils using terminals (so that the judge wonʹt be prejudiced against the computer for lacking a warm and fuzzy appearance). [11] If the human judge is unable to reliably unmask the computer (as an impostor human) then the computer wins. The test is often described as a kind of computer IQ test, a means of determining if computers have

achieved a human level of intelligence. In my view, however, Turing really intended his Turing Test as a test of thinking, a term he uses to imply more than just clever manipulation of logic and language. To Turing, thinking implies conscious intentionality.

Turing had an implicit understanding of the exponential growth of computing power, and predicted that a computer would pass his eponymous exam by the end of the century. He remarked that by that time ʺthe use of words and general educated opinion will have altered so much that one will be able to speak of machines thinking

without expecting to be contradicted.ʺ His prediction was overly optimistic in terms of time frame, but in my view not by much.

In the end, Turingʹs prediction foreshadows how the issue of computer thought will be resolved. The machines will convince us that they are conscious, that they have their own agenda worthy of our respect. We will come to believe that they are conscious much as we believe that of each other. More so than with our animal friends, we will empathize with their professed feelings and struggles because their minds will be based on the design of human thinking. They will embody human qualities and will claim to be human. And weʹll believe them.

THE VIEW FROM QUANTUM MECHANICS

I often dream about failing. Such dreams are commonplace to the ambitious or those who

climb mountains. Lately I dreamed I was clutching at the face of a rock, but it would not

hold. Gravel gave way. I grasped for a shrub, but it pulled loose, and in cold terror I fell

into the abyss. Suddenly I realized that my fall was relative; there was no bottom and no

end. A feeling of pleasure overcame me. I realized that what I embody, the principle of

life, cannot be destroyed. It is written into the cosmic code, the order of the universe. As

I continued to fall in the dark void, embraced by the vault of the heavens, I sang to the

beauty of the stars and made my peace with the darkness.

― Heinz Pagels, physicist and quantum mechanics researcher before his death in a

1988 climbing accident

The Western objective view states that after billions of years of swirling around, matter and energy evolved to create life-forms—complex self-replicating patterns of matter and energy—that became sufficiently advanced

to reflect on their own existence, on the nature of matter and energy, on their own consciousness. In

contrast, the Eastern subjective view states that consciousness came first—matter and energy are merely the

complex thoughts of conscious beings, ideas that have no reality without a thinker.

As noted above, the objective and subjective views of reality have been at odds since the dawn of recorded

history. There is often merit, however, in combining seemingly irreconcilable views to achieve a deeper

understanding. Such was the case with the adoption of quantum mechanics fifty years ago. Rather than

reconcile the views that electromagnetic radiation (for example, light) was either a stream of particles (that

is, photons) or a vibration (that is, light waves), both views were fused into an irreducible duality. While this

idea is impossible to grasp using only our intuitive models of nature, we are unable to explain the world

without accepting this apparent contradiction. Other paradoxes of quantum mechanics (for example, electron

"tunneling" in which electrons in a transistor appear on both sides of a barrier) helped create the age of computation, and may unleash a new revolution in the form of the quantum computer, [12] but more about

that later.

Once we accept such a paradox, wonderful things happen. In postulating the duality of light, quantum

mechanics has discovered an essential nexus between matter and consciousness. Particles apparently do not

make up their minds as to which way they are going or even where they have been until they are forced to do

so by the observations of a conscious observer. We might say that they appear not really to exist at all

retroactively until and unless we notice them.

So twentieth-century Western science has come around to the Eastern view. The Universe is sufficiently

sublime that the essentially Western objective view of consciousness arising from matter and the essentially

Eastern subjective view of matter arising from consciousness apparently coexist as another irreducible duality.

Clearly, consciousness, matter, and energy are inextricably linked.

We may note here a similarity of quantum mechanics to the computer simulation of a virtual world. In

today's software games that display images of a virtual world, the portions of the environment not currently

being interacted with by the user (that is, those off screen) are usually not computed in detail, if at all. The

limited resources of the computer are directed toward rendering the portion of the world that the user is

currently viewing. As the user focuses in on some other aspect, the computational resources are then

immediately directed toward creating and displaying that new perspective. It thus seems as if the portions of

the virtual world that are offscreen are nonetheless still "there" but the software designers figure there is no point wasting valuable computer cycles on regions of their simulated world that no one is watching.

I would say that quantum theory implies a similar efficiency in the physical world. Particles appear not to

decide where the have been until forced to do so by being observed. The implication is that portions of the

world we live in are not actually "rendered" until some conscious observer turns her attention toward them.

After all, there's no point wasting valuable "computes" of the celestial computer that renders our Universe.

This gives new meaning to the question about the unheard tree that falls in the forest.

‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐

ON THIS MULTIPLE‐CONSCIOUSNESS IDEA, WOULDNʹT I NOTICE THAT—I MEAN IF I HAD DECIDED TO DO

ONE THING AND THIS OTHER CONSCIOUSNESS IN MY HEAD WENT AHEAD AND DECIDED SOMETHING

ELSE?

I thought you had decided not to finish that muffin you just devoured.

TOUCHE. OKAY, IS THAT AN EXAMPLE OF WHAT YOUʹRE TALKING ABOUT?

It is a better example of Marvin Minskyʹs Society of Mind, in which he conceives of our mind as a society of other minds some like muffins, some are vain, some are health conscious, some make resolutions, others break them. Each

of these in turn is made up of other societies. At the bottom of this hierarchy are little mechanisms Minsky calls agents with little or no intelligence. It is a compelling vision of the organization of intelligence, including such phenomena as mixed emotions and conflicting values.

SOUNDS LIKE A GREAT LEGAL DEFENSE. ʺNO, JUDGE, IT WASNʹT ME. IT WAS THIS OTHER GAL IN MY

HEAD WHO DID THE DEED!ʺ

Thatʹs not going to do you much good if the judge decides to lock up the other gal in your head.

THEN HOPEFULLY THE WHOLE SOCIETY IN MY HEAD WILL STAY OUT OF TROUBLE. BUT WHICH MINDS

IN MY SOCIETY OF MIND ARE CONSCIOUS?

We could imagine that each of these minds in the society of mind is conscious, albeit that the lowest‐ranking ones have relatively little to be conscious of. Or perhaps consciousness is reserved for the higher‐ranking minds. Or perhaps only certain combinations of higher‐ranking minds are conscious, whereas others are not. Or perhaps—

NOW WAIT A SECOND, HOW CAN WE TELL WHAT THE ANSWER IS?

I believe thereʹs really no way to tell. What possible experiment can we run that would conclusively prove whether an entity or process is conscious? If the entity says, ʺHey, Iʹm really conscious,ʺ does that settle the matter? If the entity is very compelling when it expresses a professed emotion, is that definitive? if we look carefully at its internal methods and see feedback loops in which the process examines and responds to itself, does that mean itʹs conscious? If we see certain types of patterns in its neural firings, is that convincing. Contemporary philosophers such as Daniel Dermett appear to believe that the consciousness of an entity is a testable and measurable attribute. But I think science is inherently about objective reality. I donʹt see how it can break through to the subjective level.

MAYBE IF THE THING PASSES THE TURING TEST?

That is what Turing had in mind. Lacking any conceivable way of building a consciousness detector, he settled on a

practical approach, one that emphasizes our unique human proclivity for language. And I do think that Turing is right in a way—if a machine can pass a valid Turing Test, I believe that we will believe that it is conscious. Of course, thatʹs still not a scientific demonstration.

The converse proposition, however, is not compelling. Whales and elephants have bigger brains than we do and

exhibit a wide range of behaviors that knowledgeable observers consider intelligent. I regard them as conscious creatures, but they are in no position to pass the Turing Test.

THEY WOULD HAVE TROUBLE TYPING ON THESE SMALL KEYS OF MY COMPUTER.

Indeed, they have no fingers. They are also not proficient in human languages. The Turing Test is clearly a human‐

centric measurement.

IS THERE A RELATIONSHIP BETWEEN THIS CONSCIOUSNESS STUFF AND THE ISSUE OF TIME THAT WE

SPOKE ABOUT EARLIER?

Yes, we clearly have an awareness of time. Our subjective experience of time passage—and remember that subjective

is just another word for conscious—is governed by the speed of our objective processes. If we change this speed by

altering our computational substrate, we affect our perception of time.

RUN THAT BY ME AGAIN.

Letʹs take an example. If I scan your brain and nervous system with a suitably advanced noninvasive‐scanning technology of the early twenty‐first century—a very‐high‐resolution, high‐bandwidth magnetic resonance imaging, perhaps—ascertain all the salient information processes and then download that information to my suitably advanced

neural computer, Iʹll have a little you or at least someone very much like you right here in my personal computer.

If my personal computer is a neural net of simulated neurons made of electronic stuff rather than human stuff, the

version of you in my computer will run about a million times faster. So an hour for me would be a million hours for

you, which is about a century.

OH, THATʹS GREAT, YOUʹLL DUMP ME IN YOUR PERSONAL COMPUTER, AND THEN FORGET ABOUT ME

FOR A SUBJECTIVE MILLENNIUM OR TWO.

Weʹll have to be careful about that, wonʹt we.

‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐

C H A P T E R F O U R

A NEW FORM OF

INTELLIGENCE ON EARTH

THE ARTIFICIAL INTELLIGENCE MOVEMENT

What if these theories are really true, and we were magically shrunk and put into someoneʹs brain while he was thinking. We would see all the pumps, pistons, gears and levers working away, and we would be able to describe their workings completely, in mechanical terms, thereby completely describing the thought processes of the brain. But that description would nowhere contain any mention of thought! it would contain nothing but descriptions of pumps, pistons, levers!

—Gottfried Wilhelm Leibniz

Artificial stupidity (AS) may be defined as the attempt by computer scientists to create computer programs capable of causing problems of a type normally associated with human thought.

—Wallace Marshal

Artificial intelligence (AI) is the science of how to get machines to do the things they do in the movies.

—Astro Teller

The Ballad of Charles and Ada

Returning to the evolution of intelligent machines, we find Charles Babbage sitting in the rooms of the Analytical Society at Cambridge, England, in 1821, with a table of logarithms lying before him.

ʺWell, Babbage, what are you dreaming about?ʺ asked another member, seeing Babbage half asleep.

ʺI am thinking that all these tables might be calculated by machinery!ʺ Babbage replied.

From that moment on, Babbage devoted most of his waking hours to an unprecedented vision: the worldʹs first

programmable computer. Although based entirely on the mechanical technology of the nineteenth century, Babbageʹs

ʺAnalytical Engineʺ was a remarkable foreshadowing of the modern computer. [1]

Babbage developed a liaison with the beautiful Ada Lovelace, the only legitimate child of Lord Byron, the poet.

She became as obsessed with the project as Babbage, and contributed many of the ideas for programming the

machine, including the invention of the programming loop and the subroutine. She was the worldʹs first software

engineer, indeed the only software engineer prior to the twentieth century.

Lovelace significantly extended Babbageʹs ideas and wrote a paper on programming techniques, sample

programs, and the potential of this technology to emulate intelligent human activities. She describes the speculations of Babbage and herself on the capacity of the Analytical Engine, and future machines like it, to play chess and

compose music. She finally concludes that although the computations of the Analytical Engine could not properly be

regarded as ʺthinking,ʺ they could nonetheless perform activities that would otherwise require the extensive

application of human thought.

The story of Babbage and Lovelace ends tragically. She died a painful death from cancer at the age of thirty‐six,

leaving Babbage alone again to pursue his quest. Despite his ingenious constructions and exhaustive effort, the

Analytical Engine was never completed. Near the end of his existence he remarked that he had never had a happy

day in his life. Only a few mourners were recorded at Babbageʹs funeral in 1871. [2]

What did survive were Babbageʹs ideas. The first American programmable computer, the Mark 1, completed in

1944 by Howard Aiken of Harvard University and IBM, borrowed heavily from Babbageʹs architecture. Aiken

commented, ʺIf Babbage had lived seventy‐five years later, I would have been out of a job.ʺ [3] Babbage and Lovelace were innovators nearly a century ahead of their time. Despite Babbageʹs inability to finish any of his major initiatives, their concepts of a computer with a stored program, self‐modifying code, addressable memory, conditional

branching, and computer programming itself still form the basis of computers today. [4]

Again, Enter Alan Turing

By 1940, Hitler had the mainland of Europe in his grasp, and England was preparing for an anticipated invasion. The

British government organized its best mathematicians and electrical engineers, under the intellectual leadership of Alan Turing, with the mission of cracking the German military code. It was recognized that with the German air force enjoying superiority in the skies, failure to accomplish this mission was likely to doom the nation. In order not to be distracted from their task, the group lived in the tranquil pastures of Hertfordshire, England.

Turing and his colleagues constructed the worldʹs first operational computer from telephone relays and named it

Robinson, [5] after a popular cartoonist who drew ʺRube Goldbergʺ machines (very ornate machinery with many interacting mechanisms). The groupʹs own Rube Goldberg succeeded brilliantly and provided the British with a transcription of nearly all significant Nazi messages. As the Germans added to the complexity of their code (by adding additional coding wheels to their Enigma coding machine), Turing replaced Robinsonʹs electro‐magnetic intelligence with an electronic version called Colossus built from two thousand radio tubes. Colossus and nine similar machines running in parallel provided an uninterrupted decoding of vital military intelligence to the Allied war effort.

Use of this information required supreme acts of discipline on the part of the British government. Cities that were

to be bombed by Nazi aircraft were not forewarned, lest preparations arouse German suspicions that their code had

been cracked. The information provided by Robinson and Colossus was used only with the greatest discretion, but the cracking of Enigma was enough to enable the Royal Air Force to win the Battle of Britain.

Thus fueled by the exigencies of war, and drawing upon a diversity of intellectual traditions, a new form of intelligence emerged on Earth.

The Birth of Artificial Intelligence

The similarity of the computational process to the human thinking process was not lost on Turing. In addition to having established much of the theoretical foundations of computation and having invented the first operational computer, he was instrumental in the early efforts to apply this new technology to the emulation of intelligence.

In his classic 1950 paper, Computing Machinery and Intelligence, Turing described an agenda that would in fact occupy the next half century of advanced computer research: game playing, decision making, natural language understanding, translation, theorem proving, and, of course, encryption and the cracking of codes. [6] He wrote (with his friend David Champernowne) the first chess‐playing program.

As a person, Turing was unconventional and extremely sensitive. He had a wide range of unusual interests, from

the violin to morphogenesis (the differentiation of cells). There were public reports of his homosexuality, which greatly disturbed him, and he died at the age of forty‐one, a suspected suicide.

The Hard Things Were Easy

In the 1950s, progress came so rapidly that some of the early pioneers felt that mastering the functionality of the human brain might not be so difficult after all. In 1956, AI researchers Allen Newell, J. C. Shaw, and Herbert Simon created a program called Logic Theorist (and in 1957 a later version called General Problem Solver), which used recursive search techniques to solve problems in mathematics. [7] Recursion, as we will see later in this chapter, is a powerful method of defining a solution in terms of itself. Logic Theorist and General Problem Solver were able to find proofs for many of the key theorems in Bertrand Russell and Alfred North Whiteheadʹs seminal work on set theory,

Principia Mathematica, [8] including a completely original proof for an important theorem that had never been previously solved. These early successes led Simon and Newell to say in a 1958 paper, entitled Heuristic Problem Solving: The Next Advance in Operations Research, ʺThere are now in the world machines that think, that learn and that create. Moreover, their ability to do these things is going to increase rapidly until—in a visible future—the range of problems they can handle will be coextensive with the range to which the human mind has been applied.ʺ [9] The paper goes on to predict that within ten years (that is, by 1968) a digital computer would be the world chess champion. A decade later, an unrepentant Simon predicts that by 1985, ʺmachines will be capable of doing any work

that a man can do.ʺ Perhaps Simon was intending a favorable comment on the capabilities of women, but these predictions, decidedly more optimistic than Turingʹs, embarrassed the nascent AI field.

The field has been inhibited by this embarrassment to this day, and AI researchers have been reticent in their prognostications ever since. In 1997, when Deep Blue defeated Gary Kasparov, then the reigning human world chess