The Brain in the Crystal

Another approach to harnessing the third dimension contemplates growing a computer as a crystal directly in three dimensions, with computing elements the size of large molecules within the crystalline lattice.

Stanford Professor Lambertus Hesselink has described a system in which data is stored in a crystal as a hologram—an optical interference pattern. This three‐dimensional storage method requires only a million atoms for each bit and thus could achieve a trillion bits of storage for each cubic centimeter. Other projects hope to harness the regular molecular structure of crystals as actual computing elements.

The Nanotube: A Variation of Buckyballs

Three professors—Richard Smalley and Robert Curl of Rice University, and Harold Kroto of the University of Sussex—shared the 1996 Nobel Prize in Chemistry for their 1985 discovery of soccer‐ball‐shaped molecules formed of a large number of carbon atoms. Organized in hexagonal and pentagonal patterns like R. Buckminster Fullerʹs building designs, they were dubbed ʺbuckyballs.ʺ These unusual molecules, which form naturally in the hot fumes of a furnace, are extremely strong—a hundred times stronger than steel—a property they share with Fullerʹs architectural innovations. [12]

More recently, Dr. Sumio Iijima of Nippon Electric Company showed that in addition to the spherical buckyballs, the vapor from carbon arc lamps also contained elongated carbon molecules that looked like long tubes. [13] Called nanotubes because of their extremely small size—fifty thousand of them side by side would equal the thickness of one human hair—they are formed of the same pentagonal patterns of carbon atoms as buckyballs and share the buckyballʹs unusual strength.

What is most remarkable about the nanotube is that it can perform the electronic functions of silicon‐based components. If a nanotube is straight, it conducts electricity as well as or better than a metal conductor. If a slight helical twist is introduced, the nanotube begins to act like a transistor. The full range of electronic devices can be built using nanotubes.

Since a nanotube is essentially a sheet of graphite that is only one atom thick, it is vastly smaller than the silicon transistors on an integrated chip. Although extremely small, nanotubes are far more durable than silicon devices. Moreover, they handle heat much better than silicon and thus can be assembled into three‐dimensional arrays more easily than silicon transistors. Dr. Alex Zettl, a physics professor at the University of California at Berkeley, envisions three-dimensional arrays of nanotube‐based computing elements similar to—but far denser and faster than—the human brain.

QUANTUM COMPUTING: THE UNIVERSE IN A CUP

Quantum particles are the dreams that stuff is made of.

—David Moser

So far we have been talking about mere digital computing. There is actually a more powerful approach called quantum computing. It promises the ability to solve problems that even massively parallel digital computers cannot solve. Quantum computers harness a paradoxical result of quantum mechanics. Actually, I am being redundant—all results of quantum mechanics are paradoxical.

Note that the Law of Accelerating Returns and other projections in this book do not rely on quantum computing. The projections are based on readily measurable trends and do not depend on discontinuities in technological progress, although such discontinuities did occur in the twentieth century. There will inevitably be technological discontinuities in the twenty‐first century, and quantum computing would certainly qualify.

What is quantum computing? Digital computing is based on ʺbitsʺ of information which are either off or on—zero

or one. Bits are organized into larger structures such as numbers, letters, and words, which in turn can represent virtually any form of information: text, sounds, pictures, moving images. Quantum computing, on the other hand, is

based on qu‐bits (pronounced cue‐bits), which essentially are zero and one at the same time. The qu‐bit is based on the fundamental ambiguity inherent in quantum mechanics. The position, momentum, or other state of a fundamental particle remains ʺambiguousʺ until a process of disambiguation causes that particle to ʺdecideʺ where it is, where it has been, and what properties it has. For example, consider a stream of photons that strike a sheet of glass at a 45-degree angle. As each photon strikes the glass, it has a choice of traveling either straight through the glass or reflecting off the glass. Each photon will actually take both paths (actually more than this, see below) until a process of conscious observation forces each particle to decide which path it took. This behavior has been extensively confirmed in numerous contemporary experiments.

In a quantum computer, the qu‐bits would be represented by a property—nuclear spin is a popular choice—of individual electrons. If set up in the proper way, the electrons will not have decided the direction of their nuclear spin (up or down) and thus will be in both states at the same time. The process of conscious observation of the electronsʹ spin states—or any subsequent phenomena dependent on a determination of these states—causes the ambiguity to be resolved. This process of disambiguation is called quantum decoherence. If it werenʹt for quantum decoherence, the world we live in would be a baffling place indeed.

The key to the quantum computer is that we would present it with a problem, along with a way to test the answer.

We would set up the quantum decoherence of the qu‐bits in such a way that only an answer that passes the test survives the decoherence. The failing answers essentially cancel each other out. As with a number of other approaches (for example, recursive and genetic algorithms), one of the keys to quantum computing is, therefore, a careful statement of the problem, including a precise way to test possible answers.

The series of qu‐bits represents simultaneously every possible solution to the problem. A single qu‐bit represents two possible solutions. Two linked qu‐bits represent four possible answers. A quantum computer with 1,000 qu‐bits represents 2^1,000 (this is approximately equal to a decimal number consisting of 1, followed by 301 zeroes) possible solutions simultaneously. The statement of the problem—expressed as a test to be applied to potential answers—is presented to the string of qu‐bits so that the qu‐bits decohere (that is, each qu‐bit changes from its ambiguous 0‐1 state to an actual 0 or a 1), leaving a series of 0ʹs and 1ʹs that pass the test. Essentially all 2^1,000 possible solutions have been tried simultaneously, leaving only the correct solution.
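The counting in this passage can be checked with ordinary integer arithmetic. The sketch below is only a classical illustration of how many 0/1 strings n two-state elements can represent; the function name `candidate_count` is hypothetical and nothing here simulates a quantum device.

```python
def candidate_count(n_qubits: int) -> int:
    """Number of distinct 0/1 strings of length n_qubits (2 to the power n)."""
    return 2 ** n_qubits


# One qu-bit spans 2 candidates, two span 4, and a thousand span a number
# with 302 decimal digits, i.e. roughly a 1 followed by 301 zeroes.
for n in (1, 2, 1000):
    count = candidate_count(n)
    print(n, "qu-bits:", len(str(count)), "decimal digits")
```

Python integers have arbitrary precision, so the 1,000-qu-bit figure is computed exactly rather than as a floating-point approximation.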

This process of reading out the answer through quantum decoherence is obviously the key to quantum

computing. It is also the most difficult aspect to grasp. Consider the following analogy. Beginning physics students learn that if light strikes a mirror at an angle, it will bounce off the mirror in the opposite direction and at the same angle to the surface. But according to quantum theory, that is not what is happening. Each photon actually bounces

off every possible point on the mirror, essentially trying out every possible path. The vast majority of these paths cancel each other out, leaving only the path that classical physics predicts. Think of the mirror as representing a problem to be solved. Only the correct solution—light bounced off at an angle equal to the incoming angle—survives

all of the quantum cancellations. A quantum computer works the same way. The test of the correctness of the answer

to the problem is set up in such a way that the vast majority of the possible answers—those that do not pass the test—

cancel each other out, leaving only the sequence of bits that does pass the test. An ordinary mirror, therefore, can be thought of as a special example of a quantum computer, albeit one that solves a rather simple problem.

As a more useful example, encryption codes are based on factoring large numbers (factoring means determining

which smaller numbers, when multiplied together, result in the larger number). Factoring a number with several hundred bits is virtually impossible on any digital computer even if we had billions of years to wait for the answer. A quantum computer can try every possible combination of factors simultaneously and break the code in less than a billionth of a second (communicating the answer to human observers does take a bit longer). The test applied by the

quantum computer during its key disambiguation stage is very simple: just multiply one factor by the other and if the result equals the encryption code, then we have solved the problem.
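The "test" described here is easy to state in code, even though running it over every candidate is what makes the classical problem hard. The following is a minimal classical sketch, assuming a number with two factors; `passes_test` and `factor_by_search` are hypothetical names, and the loop tries candidates one at a time rather than simultaneously.

```python
from math import isqrt
from typing import Optional, Tuple


def passes_test(p: int, q: int, n: int) -> bool:
    """The test from the text: multiply one factor by the other and compare."""
    return p * q == n


def factor_by_search(n: int) -> Optional[Tuple[int, int]]:
    """Try candidate factor pairs serially; return the first pair that passes."""
    for p in range(2, isqrt(n) + 1):
        q = n // p  # the only partner that could possibly work for this p
        if passes_test(p, q, n):
            return p, q
    return None  # no nontrivial factor pair exists


print(factor_by_search(3233))  # prints (53, 61)
```

The test itself costs one multiplication. The expense lies in the number of candidates, which grows exponentially with the number of bits in n.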

It has been said that quantum computing is to digital computing as a hydrogen bomb is to a firecracker. This is a

remarkable statement when we consider that digital computing is quite revolutionary in its own right. The analogy is based on the following observation. Consider (at least in theory) a Universe‐sized (nonquantum) computer in which

every neutron, electron, and proton in the Universe is turned into a computer, and each one (that is, every particle in the Universe) is able to compute trillions of calculations per second. Now imagine certain problems that this Universe‐sized supercomputer would be unable to solve even if we ran that computer until either the next big bang

or until all the stars in the Universe died—about ten to thirty billion years. There are many examples of such massively intractable problems; for example, cracking encryption codes that use a thousand bits, or solving the traveling‐salesman problem with a thousand cities. While very massive digital computing (including our theoretical

Universe‐sized computer) is unable to solve this class of problems, a quantum computer of microscopic size could solve such problems in less than a billionth of a second.

Are quantum computers feasible? Recent advances, both theoretical and practical, suggest that the answer is yes.

Although a practical quantum computer has not been built, the means for harnessing the requisite decoherence has

been demonstrated. Isaac Chuang of Los Alamos National Laboratory and MITʹs Neil Gershenfeld have actually built

a quantum computer using the carbon atoms in the alanine molecule. Their quantum computer was only able to add

one and one, but thatʹs a start. We have, of course, been relying on practical applications of other quantum effects, such as the electron tunneling in transistors, for decades. [14]

A Quantum Computer in a Cup of Coffee

One of the difficulties in designing a practical quantum computer is that it needs to be extremely small, basically atom or molecule sized, to harness the delicate quantum effects. But it is very difficult to keep individual atoms and molecules from moving around due to thermal effects. Moreover, individual molecules are generally too unstable to build a reliable machine. For these problems, Chuang and Gershenfeld have come up with a theoretical breakthrough. Their solution is to take a cup of liquid and consider every molecule to be a quantum computer. Now instead of a single unstable molecule‐sized quantum computer, they have a cup with about a hundred billion trillion quantum computers. The point here is not more massive parallelism, but rather massive redundancy. In this way, the inevitably erratic behavior of some of the molecules has no effect on the statistical behavior of all the molecules in the liquid. This approach of using the statistical behavior of trillions of molecules to overcome the lack of reliability of a single molecule is similar to Professor Adlemanʹs use of trillions of DNA strands to overcome the comparable issue in DNA computing.

This approach to quantum computing also solves the problem of reading out the answer bit by bit without causing those qu‐bits that have not yet been read to decohere prematurely. Chuang and Gershenfeld subject their liquid computer to radio‐wave pulses, which cause the molecules to respond with signals indicating the spin state of each electron. Each pulse does cause some unwanted decoherence, but, again, this decoherence does not affect the statistical behavior of trillions of molecules. In this way, the quantum effects become stable and reliable.

Chuang and Gershenfeld are currently building a quantum computer that can factor small numbers. Although this early model will not compete with conventional digital computers, it will be an important demonstration of the

feasibility of quantum computing. Apparently high on their list for a suitable quantum liquid is freshly brewed Java coffee, which, Gershenfeld notes, has ʺunusually even heating characteristics.ʺ

Quantum Computing with the Code of Life

Quantum computing starts to overtake digital computing when we can link at least 40 qu‐bits. A 40‐qu‐bit quantum

computer would be evaluating a trillion possible solutions simultaneously, which would match the fastest supercomputers. At 60 bits, we would be doing a million trillion simultaneous trials. When we get to hundreds of qu-bits, the capabilities of a quantum computer would vastly overpower any conceivable digital computer.
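To see why 40 linked qu-bits is a natural crossover point, it helps to ask how long a serial machine would need to test the same number of candidates. The sketch below assumes a classical computer performing a trillion tests per second; that rate is an illustrative assumption, not a figure from any specific machine.

```python
ASSUMED_TESTS_PER_SECOND = 1e12  # assumption: one trillion candidate tests per second
SECONDS_PER_DAY = 24 * 3600


def serial_seconds(n_bits: int) -> float:
    """Seconds needed to test all 2**n_bits candidates one after another."""
    return 2 ** n_bits / ASSUMED_TESTS_PER_SECOND


for n in (40, 60, 100):
    seconds = serial_seconds(n)
    print(f"{n} bits: {2 ** n:.3e} candidates, "
          f"{seconds:.3e} s (~{seconds / SECONDS_PER_DAY:.3e} days)")
```

Under that assumption, 40 bits takes about a second and 60 bits takes on the order of two weeks, while each additional bit doubles the time.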

So hereʹs my idea. The power of a quantum computer depends on the number of qu‐bits that we can link together.

We need to find a large molecule that is specifically designed to hold large amounts of information. Evolution has designed just such a molecule: DNA. We can readily create any sized DNA molecule we wish from a few dozen nucleotide rungs to thousands. So once again we combine two elegant ideas—in this case the liquid‐DNA computer

and the liquid‐quantum computer—to come up with a solution greater than the sum of its parts. By putting trillions

of DNA molecules in a cup, there is the potential to build a highly redundant—and therefore reliable—quantum computer with as many qu‐bits as we care to harness. Remember you read it here first.

Suppose No One Ever Looks at the Answer

Consider that the quantum ambiguity a quantum computer relies on is decohered, that is, disambiguated, when a conscious entity observes the ambiguous phenomenon. The conscious entities in this case are us, the users of the quantum computer. But in using a quantum computer, we are not directly looking at the nuclear spin states of individual electrons. The spin states are measured by an apparatus that in turn answers some question that the quantum computer has been asked to solve. These measurements are then processed by other electronic gadgets, manipulated further by conventional computing equipment, and finally displayed or printed on a piece of paper.

Suppose no human or other conscious entity ever looks at the printout. In this situation, there has been no conscious observation, and therefore no decoherence. As I discussed earlier, the physical world only bothers to manifest itself in an unambiguous state when one of us conscious entities decides to interact with it. So the page with the answer is ambiguous, undetermined—until and unless a conscious entity looks at it. Then instantly all the ambiguity is retroactively resolved, and the answer is there on the page. The implication is that the answer is not there until we look at it. But donʹt try to sneak up on the page fast enough to see the answerless page; the quantum effects are instantaneous.

What Is It Good For?

A key requirement for quantum computing is a way to test the answer. Such a test does not always exist. However, a

quantum computer would be a great mathematician. It could simultaneously consider every possible combination of

axioms and previously solved theorems (within a quantum computerʹs qu‐bit capacity) to prove or disprove virtually

any provable or disprovable conjecture. Although a mathematical proof is often extremely difficult to come up with,

confirming its validity is usually straightforward, so the quantum approach is well suited.

Quantum computing is not directly applicable, however, to problems such as playing a board game. Whereas the

ʺperfectʺ chess move for a given board is a good example of a finite but intractable computing problem, there is no

easy way to test the answer. If a person or process were to present an answer, there is no way to test its validity other than to build the same move‐countermove tree that generated the answer in the first place. Even for mere ʺgoodʺ

moves, a quantum computer would have no obvious advantage over a digital computer.

How about creating art? Here a quantum computer would have considerable value. Creating a work of art involves solving a series, possibly an extensive series, of problems. A quantum computer could consider every possible combination of elements—words, notes, strokes—for each decision. We still need a way to test each answer

to the sequence of aesthetic problems, but the quantum computer would be ideal for instantly searching through a Universe of possibilities.

Encryption Destroyed and Resurrected

As mentioned above, the classic problem that a quantum computer is ideally suited for is cracking encryption codes,

which relies on factoring large numbers. The strength of an encryption code is measured by the number of bits that

needs to be factored. For example, it is illegal in the United States to export encryption technology using more than 40

bits (56 bits if you give a key to law‐enforcement authorities). A 40‐bit encryption method is not very secure. In September 1997, Ian Goldberg, a University of California at Berkeley graduate student, was able to crack a 40‐bit code in three and a half hours using a network of 250 small computers. [15] A 56‐bit code is a bit better (16 bits better, actually). Ten months later, John Gilmore, a computer privacy activist, and Paul Kocher, an encryption expert, were

able to break the 56‐bit code in 56 hours using a specially designed computer that cost them $250,000 to build. But a quantum computer can easily factor any sized number (within its capacity). Quantum computing technology would

essentially destroy digital encryption.
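The "16 bits better" remark can be made concrete: each added bit doubles the number of possible keys. The sketch below also derives rough search rates from the two reported attacks, under the simplifying assumption that each attack swept the entire key space in the stated time (in practice a key is usually found earlier, so these are upper-bound estimates, not measured figures).

```python
def keyspace(bits: int) -> int:
    """Number of distinct keys for a key of the given length in bits."""
    return 2 ** bits


# A 56-bit key space is 2**16 = 65,536 times larger than a 40-bit one.
ratio = keyspace(56) // keyspace(40)
print("56-bit vs 40-bit key space:", ratio)

# Assumption: the whole key space was searched in the reported time.
rate_40 = keyspace(40) / (3.5 * 3600)  # keys per second across 250 computers
rate_56 = keyspace(56) / (56 * 3600)   # keys per second on the special-purpose machine
print(f"implied 40-bit search rate: {rate_40:.2e} keys/s")
print(f"implied 56-bit search rate: {rate_56:.2e} keys/s")
```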

But as technology takes away, it also gives. A related quantum effect can provide a new method of encryption that

can never be broken. Again, keep in mind that, in view of the Law of Accelerating Returns, ʺneverʺ is not as long as it used to be.

This effect is called quantum entanglement. Einstein, who was not a fan of quantum mechanics, had a different name for it, calling it ʺspooky action at a distance.ʺ The phenomenon was recently demonstrated by Dr. Nicolas Gisin of the University of Geneva in an experiment across the city of Geneva. [16] Dr. Gisin sent twin photons in opposite directions through optical fibers. Once the photons were about seven miles apart, they each encountered a glass plate from which they could either bounce off or pass through. Thus, they were each forced to choose between two equally probable pathways. Since there was no possible communication link between the two photons, classical physics would predict that their decisions would be independent. But they both made the same decision. And they did so at the same instant in time, so even if there were an unknown communication path between them, there was not enough time for a message to travel from one photon to the other at the speed of light. The two particles were quantum entangled and communicated instantly with each other regardless of their separation. The effect was reliably repeated over many such photon pairs.

The apparent communication between the two photons takes place at a speed far greater than the speed of light.

In theory, the speed is infinite in that the decoherence of the two photon travel decisions, according to quantum theory, takes place at exactly the same instant. Dr. Gisinʹs experiment was sufficiently sensitive to demonstrate the communication was at least ten thousand times faster than the speed of light.

So, does this violate Einsteinʹs Special Theory of Relativity, which postulates the speed of light as the fastest speed at which we can transmit information? The answer is no—there is no information being communicated by the entangled photons. The decision of the photons is random—a profound quantum randomness—and randomness is precisely not information. Both the sender and the receiver of the message simultaneously access the identical random decisions of the entangled photons, which are used to encode and decode, respectively, the message. So we

are communicating randomness—not information—at speeds far greater than the speed of light. The only way we could convert the random decisions of the photons into information is if we edited the random sequence of photon

decisions. But editing this random sequence would require observing the photon decisions, which in turn would cause quantum decoherence, which would destroy the quantum entanglement. So Einsteinʹs theory is preserved.

Even though we cannot instantly transmit information using quantum entanglement, transmitting randomness is

still very useful. It allows us to resurrect the process of encryption that quantum computing would destroy. If the sender and receiver of a message are at the two ends of an optical fiber, they can use the precisely matched random

decisions of a stream of quantum entangled photons to respectively encode and decode a message. Since the encryption is fundamentally random and nonrepeating, it cannot be broken. Eavesdropping would also be

impossible, as this would cause quantum decoherence that could be detected at both ends. So privacy is preserved.
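The encoding step described here, combining a message with a shared random sequence that is never reused, corresponds to what cryptographers call a one-time pad. The sketch below is a classical stand-in: it assumes both parties already hold the same random bytes (generated here with Python's `secrets` module in place of entangled photons), and `xor_bytes` is a hypothetical helper name.

```python
import secrets


def xor_bytes(data: bytes, pad: bytes) -> bytes:
    """Combine data with a pad of at least the same length, byte by byte."""
    if len(pad) < len(data):
        raise ValueError("pad must be at least as long as the data")
    return bytes(d ^ k for d, k in zip(data, pad))


message = b"meet at noon"
# Stand-in for the matched random decisions shared by sender and receiver.
shared_pad = secrets.token_bytes(len(message))

ciphertext = xor_bytes(message, shared_pad)    # sender encodes
recovered = xor_bytes(ciphertext, shared_pad)  # receiver decodes with the same pad
print(recovered == message)
```

Applying the same pad twice restores the original, which is why both ends need identical random sequences and why the pad must not be reused for another message.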

Note that in quantum encryption, we are transmitting the code instantly. The actual message will arrive much more slowly—at only the speed of light.

Quantum Consciousness Revisited

The prospect of computers competing with the full range of human capabilities generates strong, often adverse feelings, as well as no shortage of arguments that such a specter is theoretically impossible. One of the more interesting such arguments comes from an Oxford mathematician and physicist, Roger Penrose.

In his 1989 best‐seller, The Emperorʹs New Mind, Penrose puts forth two conjectures. [17] The first has to do with an unsettling theorem proved by a Czech mathematician, Kurt Gödel. Gödelʹs famous ʺincompleteness theorem,ʺ which

has been called the most important theorem in mathematics, states that in a mathematical system powerful enough to

generate the natural numbers, there inevitably exist propositions that can be neither proved nor disproved. This was another one of those twentieth‐century insights that upset the orderliness of nineteenth‐century thinking.

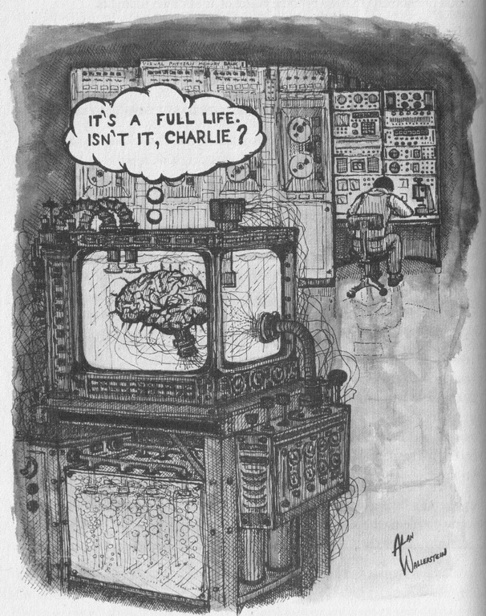

A corollary of Gödelʹs theorem is that there are mathematical propositions that cannot be decided by an algorithm.

In essence, these Gödelian impossible problems require an infinite number of steps to be solved. So Penroseʹs first conjecture is that machines cannot do what humans can do because machines can only follow an algorithm. An algorithm cannot solve a Gödelian unsolvable problem. But humans can. Therefore, humans are better.

Penrose goes on to state that humans can solve unsolvable problems because our brains do quantum computing.

Subsequently responding to criticism that neurons are too big to exhibit quantum effects, Penrose cited small structures in the neurons called microtubules that may be capable of quantum computation.

However, Penroseʹs first conjecture—that humans are inherently superior to machines—is unconvincing for at least three reasons:

1. It is true that machines canʹt solve Gödelian impossible problems. But humans canʹt solve them either. Humans

can only estimate them. Computers can make estimates as well, and in recent years are doing a better job of this

than humans.

2. In any event, quantum computing does not permit solving Gödelian impossible problems either. Solving a Gödelian impossible problem requires an algorithm with an infinite number of steps. Quantum computing can

turn an intractable problem that could not be solved on a conventional computer in trillions of years into an instantaneous computation. But it still falls short of infinite computing.

3. Even if (1) and (2) above were wrong, that is, if humans could solve Gödelian impossible problems and do so

because of their quantum‐computing ability, that still does not restrict quantum computing from machines. The

opposite is the case. If the human brain exhibits quantum computing, this would only confirm that quantum computing is possible, that matter following natural laws can perform quantum computing. Any mechanisms in

human neurons capable of quantum computing, such as the microtubules, would be replicable in a machine.

Machines use quantum effects—tunneling—in trillions of devices (that is, transistors) today. [18] There is nothing to suggest that the human brain has exclusive access to quantum computing.

Penroseʹs second conjecture is more difficult to resolve. It is that an entity exhibiting quantum computing is conscious. He is saying that it is the humanʹs quantum computing that accounts for her consciousness. Thus quantum computing—quantum decoherence—yields consciousness.

Now we do know that there is a link between consciousness and quantum decoherence. That is, consciousness observing a quantum uncertainty causes quantum decoherence. Penrose, however, is asserting a link in the opposite

direction. This does not follow logically. Of course quantum mechanics is not logical in the usual sense—it follows quantum logic (some observers use the word ʺstrangeʺ to describe quantum logic). But even applying quantum logic,

Penroseʹs second conjecture does not appear to follow. On the other hand, I am unable to reject it out of hand because there is a strong nexus between consciousness and quantum decoherence in that the former causes the latter. I have

thought about this issue for three years, and have been unable to accept it or reject it. Perhaps before writing my next book I will have an opinion on Penroseʹs second conjecture.

IS THE BRAIN BIG ENOUGH?

Is our conception of human neuron functioning and our estimates of the number of neurons and connections

in the human brain consistent with what we know about the brain's capabilities? Perhaps human neurons are

far more capable than we think they are. If so, building a machine with human-level capabilities might take

longer than expected.

We find that estimates of the number of concepts—"chunks" of knowledge—that a human expert in a

particular field has mastered are remarkably consistent: about 50,000 to 100,000. This approximate range

appears to be valid over a wide range of human endeavors: the number of board positions mastered by a

chess grand master, the concepts mastered by an expert in a technical field, such as a physician, the

vocabulary of a writer (Shakespeare used 29,000 words;[19] this book uses a lot fewer).

This type of professional knowledge is, of course, only a small subset of the knowledge we need to function

as human beings. Basic knowledge of the world, including so-called common sense, is more extensive. We

also have an ability to recognize patterns: spoken language, written language, objects, faces. And we have

our skills: walking, talking, catching balls. I believe that a reasonably conservative estimate of the general

knowledge of a typical human is a thousand times greater than the knowledge of an expert in her professional

field. This provides us a rough estimate of 100 million chunks—bits of understanding, concepts, patterns,

specific skills—per human. As we will see below, even if this estimate is low (by a factor of up to a thousand),

the brain is still big enough.

The number of neurons in the human brain is estimated at approximately 100 billion, with an average of 1,000 connections per neuron, for a total of 100 trillion connections. With 100 trillion connections and 100 million chunks of knowledge (including patterns and skills), we get an estimate of about a million connections per chunk.

Our computer simulations of neural nets use a variety of different types of neuron models, all of which are

relatively simple. Efforts to provide detailed electronic models of real mammalian neurons appear to show that

while animal neurons are more complicated than typical computer models, the difference in complexity is

modest. Even using our simpler computer versions of neurons, we find that we can model a chunk of

knowledge—a face, a character shape, a phoneme, a word sense—using as little as a thousand connections

per chunk. Thus our rough estimate of a million neural connections in the human brain per human knowledge

chunk appears reasonable.
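The capacity estimate above is simple arithmetic and can be laid out step by step. The sketch below uses only the figures given in the text; the variable names are illustrative.

```python
neurons = 100 * 10**9           # about 100 billion neurons
connections_per_neuron = 1_000  # average connections per neuron
total_connections = neurons * connections_per_neuron  # 100 trillion

knowledge_chunks = 100 * 10**6  # rough estimate of chunks per human
available_per_chunk = total_connections // knowledge_chunks  # about a million

needed_per_chunk = 1_000        # connections used per chunk in computer models
headroom = available_per_chunk // needed_per_chunk

print("total connections:", total_connections)
print("connections available per chunk:", available_per_chunk)
print("headroom over the modeled requirement:", headroom)
```

The headroom factor of a thousand is the margin between the connections available per chunk and the number the simple computer models actually use.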

Indeed it appears ample. Thus we could make my estimate (of the number of knowledge chunks) a

thousand times greater, and the calculation still works. It is likely, however, that the brain's encoding of

knowledge is less efficient than the methods we use in our machines. This apparent inefficiency is consistent

with our understanding that the human brain is conservatively designed. The brain relies on a large degree of

redundancy and a relatively low density of information storage to gain reliability and to continue to function

effectively despite a high rate of neuron loss as we age.

My conclusion is that it does not appear that we need to contemplate a model of information processing of

individual neurons that is significantly more complex than we currently understand in order to explain human

capability. The brain is big enough.

REVERSE ENGINEERING A PROVEN DESIGN: THE HUMAN BRAIN

For many people the mind is the last refuge of mystery against the encroaching spread of science, and they donʹt like the idea of science engulfing the last bit of terra incognita.

—Herb Simon as quoted by Daniel Dennett

Cannot we let people be themselves, and enjoy life in their own way? You are trying to make another you. Oneʹs enough.

—Ralph Waldo Emerson

For the wise men of old . . . the solution has been knowledge and self‐discipline, . . . and in the practice of this technique, are ready to do things hitherto regarded as disgusting and impious—such as digging up and mutilating the dead.

—C. S. Lewis

Intelligence is: (a) the most complex phenomenon in the Universe; or (b) a profoundly simple process.

The answer, of course, is (c) both of the above. Itʹs another one of those great dualities that make life interesting.

Weʹve already talked about the simplicity of intelligence: simple paradigms and the simple process of computation.

Letʹs talk about the complexity.

We come back to knowledge, which starts out with simple seeds but ultimately becomes elaborate as the knowledge‐gathering process interacts with the chaotic real world. Indeed, that is how intelligence originated. It was the result of the evolutionary process we call natural selection, itself a simple paradigm, that drew its complexity from the pandemonium of its environment. We see the same phenomenon when we harness evolution in the computer. We start with simple formulas, add the simple process of evolutionary iteration and combine this with the

simplicity of massive computation. The result is often complex, capable, and intelligent algorithms.
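The recipe sketched here, simple starting formulas plus evolutionary iteration plus a lot of computation, can be illustrated with a toy genetic algorithm. Everything in the following sketch is an assumption chosen for brevity: candidates are bit strings, fitness is simply the number of 1 bits, and the parameter values and function names are hypothetical.

```python
import random


def fitness(bits):
    """Toy fitness: the number of 1 bits in the candidate."""
    return sum(bits)


def evolve(n_bits=32, pop_size=20, generations=40, mutation_rate=0.02, seed=0):
    """Evolve bit strings by selection, crossover, and mutation."""
    rng = random.Random(seed)  # seeded so that runs are repeatable
    population = [[rng.randint(0, 1) for _ in range(n_bits)]
                  for _ in range(pop_size)]
    best_history = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        best_history.append(fitness(population[0]))
        survivors = population[: pop_size // 2]  # the fitter half survives unchanged
        children = []
        while len(survivors) + len(children) < pop_size:
            mother, father = rng.sample(survivors, 2)
            cut = rng.randrange(1, n_bits)       # single-point crossover
            child = mother[:cut] + father[cut:]
            child = [b ^ 1 if rng.random() < mutation_rate else b for b in child]
            children.append(child)
        population = survivors + children
    population.sort(key=fitness, reverse=True)
    best_history.append(fitness(population[0]))
    return population[0], best_history


best, history = evolve()
print("best fitness per generation:", history)
```

Because the fitter half of each generation is carried over unchanged, the best score never decreases from one generation to the next.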

But we donʹt need to simulate the entire evolution of the human brain in order to tap the intricate secrets it contains. Just as a technology company will take apart and ʺreverse engineerʺ (analyze to understand the methods of) a rivalʹs products, we can do the same with the human brain. It is, after all, the best example we can get our hands on of an intelligent process. We can tap the architecture, organization, and innate knowledge of the human brain in order to greatly accelerate our understanding of how to design intelligence in a machine. By probing the brainʹs circuits, we can copy and imitate a proven design, one that took its original designer several billion years to develop. (And itʹs not even copyrighted.)

As we approach the computational ability to simulate the human brain—weʹre not there today but we will begin to be in about a decadeʹs time—such an effort will be intensely pursued. Indeed, this endeavor has already begun. For example, Synapticsʹ vision chip is fundamentally a copy of the neural organization, implemented in silicon of course, of not only the human retina, but the early stages of mammalian visual processing. It has captured the essence of the algorithm of early mammalian visual processing, an algorithm called center surround filtering. It is not a particularly complicated chip, yet it realistically captures the essence of the initial stages of human vision.
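Center surround filtering can be illustrated with a few lines of Python. This is a generic sketch of the idea, not a description of how the Synaptics chip is built: each output value is a pixel's brightness minus the average brightness of its eight neighbors, so uniform regions produce no response and edges stand out. The function name and the tiny test image are assumptions made for illustration.

```python
def center_surround(image):
    """Return the center-minus-surround response for each interior pixel.

    `image` is a list of equal-length rows of numbers. Border pixels,
    which lack a full ring of neighbors, are left at 0.0.
    """
    rows, cols = len(image), len(image[0])
    out = [[0.0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            # Average of the eight surrounding pixels.
            surround = sum(
                image[r + dr][c + dc]
                for dr in (-1, 0, 1)
                for dc in (-1, 0, 1)
                if (dr, dc) != (0, 0)
            ) / 8.0
            out[r][c] = image[r][c] - surround
    return out


# A dark region (0) meeting a bright region (1) along a vertical edge.
step = [[0, 0, 0, 1, 1, 1] for _ in range(5)]
response = center_surround(step)
print(response[2])
```

In the middle row of this example the response is zero inside the flat dark and bright areas and nonzero only in the two columns that straddle the edge, negative on the dark side and positive on the bright side.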

There is a popular conceit among observers, both informed and uninformed, that such a reverse engineering project is infeasible. Hofstadter worries that ʺour brains may be too weak to understand themselves.ʺ [20] But that is not what we are finding. As we probe the brainʹs circuits, we find that the massively parallel algorithms are far from incomprehensible. Nor is there anything like an infinite number of them. There are hundreds of specialized regions in the brain, and it does have a rather ornate architecture, the consequence of its long history. The entire puzzle is not beyond our comprehension. It will certainly not be beyond the comprehension of twenty‐first‐century machines.

The knowledge is right there in front of us, or rather inside of us. It is not impossible to get at. Letʹs start with the most straightforward scenario, one that is essentially feasible today (at least to initiate).

We start by freezing a recently deceased brain.

Now, before I get too many indignant reactions, let me wrap myself in Leonardo da Vinciʹs cloak. Leonardo also received a disturbed reaction from his contemporaries. Here was a guy who stole dead bodies from the morgue, carted them back to his dwelling, and then took them apart. This was before dissecting dead bodies was in style. He did this in the name of knowledge, not a highly valued pursuit at the time. He wanted to learn how the human body works, but his contemporaries found his activities bizarre and disrespectful. Today we have a different view, that expanding our knowledge of this wondrous machine is the most respectful homage we can pay. We cut up dead bodies all the time to learn more about how living bodies work, and to teach others what we have already learned.

Thereʹs no difference here in what I am suggesting. Except for one thing: I am talking about the brain, not the body. This strikes closer to home. We identify more with our brains than our bodies. Brain surgery is regarded as more invasive than toe surgery. Yet the knowledge to be gained from probing the brain is too valuable to ignore. So weʹll get over whatever squeamishness remains.

As I was saying, we start by freezing a dead brain. This is not a new concept—Dr. E. Fuller Torrey, a former supervisor at the National Institute of Mental Health and now head of the mental health branch of a private research foundation, has 44 freezers filled with 226 frozen brains. [21] Torrey and his associates hope to gain insight into the causes of schizophrenia, so all of his brains are of deceased schizophrenic patients, which is probably not ideal for our purposes.

We examine one brain layer—one very thin slice—at a time. With suitably sensitive two‐dimensional scanning equipment we should be able to see every neuron and every connection represented in each synapse‐thin layer. When a layer has been examined and the requisite data stored, it can be scraped away to reveal the next slice. This information can be stored and assembled into a giant three‐dimensional model of the brainʹs wiring and neural topology.
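The assembly step can be sketched in Python under heavy simplifying assumptions. Here each scanned slice is a small grid in which 1 marks neural tissue and 0 marks empty space; the slices are stacked into a volume, and a flood fill counts the structures that hang together in three dimensions. The data layout and function names are hypothetical, and a real scan would involve vastly more data and far subtler image analysis.

```python
from collections import deque


def stack_slices(slices):
    """Assemble 2D slices (lists of rows) into volume[z][y][x]."""
    height, width = len(slices[0]), len(slices[0][0])
    for s in slices:
        if len(s) != height or any(len(row) != width for row in s):
            raise ValueError("all slices must have the same dimensions")
    return [[list(row) for row in s] for s in slices]


def connected_structures(volume):
    """Count groups of occupied voxels that touch face to face."""
    depth, height, width = len(volume), len(volume[0]), len(volume[0][0])
    steps = ((1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1))
    seen = set()
    count = 0
    for z in range(depth):
        for y in range(height):
            for x in range(width):
                if not volume[z][y][x] or (z, y, x) in seen:
                    continue
                count += 1  # found a structure not visited before
                seen.add((z, y, x))
                queue = deque([(z, y, x)])
                while queue:
                    cz, cy, cx = queue.popleft()
                    for dz, dy, dx in steps:
                        nz, ny, nx = cz + dz, cy + dy, cx + dx
                        if (0 <= nz < depth and 0 <= ny < height
                                and 0 <= nx < width
                                and volume[nz][ny][nx]
                                and (nz, ny, nx) not in seen):
                            seen.add((nz, ny, nx))
                            queue.append((nz, ny, nx))
    return count


# Three slices: a fiber runs through the center of all of them,
# and an unrelated speck appears in one corner of the first slice.
slices = [
    [[1, 0, 0], [0, 1, 0], [0, 0, 0]],
    [[0, 0, 0], [0, 1, 0], [0, 0, 0]],
    [[0, 0, 0], [0, 1, 0], [0, 0, 0]],
]
volume = stack_slices(slices)
print(connected_structures(volume))
```

Viewed one slice at a time, the fiber is just an isolated dot in each layer. Only after the slices are stacked does it show up as a single structure running through the volume, which is the point of assembling the layers into a three‐dimensional model.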

It would be better if the frozen brains were not already dead long before freezing. A dead brain will reveal a lot about living brains, but it is clearly not the ideal laboratory. Some of that deadness is bound to reflect itself in a deterioration of its neural structure. We probably donʹt want to base our designs for intelligent machines on dead brains. We are likely to be able to take advantage of people who, facing imminent death, will permit their brains to be destructively scanned just slightly before rather than slightly after their brains would have stopped functioning on their own. Recently, a condemned killer allowed his brain and body to be scanned, and you can access all 10 billion bytes of him on the Internet at the Center for Human Simulationʹs ʺVisible Human Projectʺ web site. [22] Thereʹs an even higher resolution 25‐billion‐byte female companion on the site as well. Although the scan of this couple is not high enough resolution for the scenario envisioned here, itʹs an example of donating oneʹs brain for reverse engineering. Of course we may not want to base our templates of machine intelligence on the brain of a convicted killer, anyway.

Easier to talk about are the emerging noninvasive means of scanning our brains. I began with the more invasive scenario above because it is technically much easier. We have in fact the means to conduct a destructive scan today (although not yet the bandwidth to scan the entire brain in a reasonable amount of time). In terms of noninvasive scanning, high‐speed, high‐resolution magnetic resonance imaging (MRI) scanners are already able to view individual somas (neuron cell bodies) without disturbing the living tissue being scanned. More powerful MRIs are being developed that will be capable of scanning individual nerve fibers that are only ten microns (millionths of a meter) in diameter. These will be available during the first decade of the twenty‐first century. Eventually we will be able to scan the presynaptic vesicles that are the site of human learning.

We can peer inside someoneʹs brain today with MRI scanners, which are increasing their resolution with each new generation of this technology. There are a number of technical challenges in accomplishing this, including achieving suitable resolution, bandwidth (that is, speed of transmission), lack of vibration, and safety. For a variety of reasons it is easier to scan the brain of someone recently deceased than of someone still living. (It is easier to get someone deceased to sit still, for one thing.) But noninvasively scanning a living brain will ultimately become feasible as MRI and other scanning technologies continue to improve in resolution and speed.

A new scanning technology called optical imaging, developed by Professor Amiram Grinvald at Israelʹs Weizmann Institute, is capable of significantly higher resolution than MRI. Like MRI, it is based on the interaction between electrical activity in the neurons and blood circulation in the capillaries feeding the neurons. Grinvaldʹs device is capable of resolving features smaller than fifty microns, and can operate in real time, thus enabling scientists to view the firing of individual neurons. Grinvald and researchers at Germanyʹs Max Planck Institute were struck by the remarkable regularity of the patterns of neural firing when the brain was engaged in processing visual information. [23] One of the researchers, Dr. Mark Hubener, commented that ʺour maps of the working brain are so orderly they resemble the street map of Manhattan rather than, say, of a medieval European town.ʺ Grinvald, Hubener, and their associates were able to use their brain scanner to distinguish between sets of neurons responsible for perception of depth, shape, and color. As these neurons interact with one another, the resulting pattern of neural firings resembles elaborately linked mosaics. From the scans, it was possible for the researchers to see how the neurons were feeding information to each other. For example, they noted that the depth perception neurons were arranged in parallel columns, providing information to the shape‐detecting neurons that formed more elaborate pinwheel‐like patterns. Currently, the Grinvald scanning technology is only able to image a thin slice of the brain near its surface, but the Weizmann Institute is working on refinements that will extend its three‐dimensional capability.

Grinvaldʹs scanning technology is also being used to boost the resolution of MRI scanning. A recent finding that near-infrared light can pass through the skull is also fueling excitement about the potential of optical imaging as a high-resolution method of brain scanning.

The driving force behind the rapidly improving capability of noninvasive scanning technologies such as MRI is again the Law of Accelerating Returns, because it requires massive computational ability to build the high-resolution, three‐dimensional images from the raw magnetic resonance patterns that an MRI scanner produces. The exponentially increasing computational ability provided by the Law of Accelerating Returns (and for another fifteen to twenty years, Mooreʹs Law) will enable us to continue to rapidly improve the resolution and speed of these noninvasive scanning technologies.

Mapping the human brain synapse by synapse may seem like a daunting effort, but so did the Human Genome Project, an effort to map all human genes, when it was launched in 1991. Although the bulk of the human genetic code has still not been decoded, there is confidence at the nine American Genome Sequencing Centers that the task will be completed, if not by 2005, then at least within a few years of that target date. Recently, a new private venture with funding from Perkin‐Elmer has announced plans to sequence the entire human genome by the year 2001. As I noted above, the pace of the human genome scan was extremely slow in its early years, and has picked up speed with improved technology, particularly computer programs that identify the useful genetic information. The researchers are counting on further improvements in their gene‐hunting computer programs to meet their deadline. The same will be true of the human‐brain‐mapping project, as our methods of scanning and recording the 100 trillion neural connections pick up speed from the Law of Accelerating Returns.

What to Do with the Information

There are two scenarios for using the results of detailed brain scans. The most immediate—scanning the brain to understand it—is to scan portions of the brain to ascertain the architecture and implicit algorithms of interneuronal connections in different regions. The exact position of each and every nerve fiber is not as important as the overall pattern. With this information we can design simulated neural nets that operate similarly. This process will be rather like peeling an onion as each layer of human intelligence is revealed.

This is essentially what Synaptics has done in its chip that mimics mammalian neural‐image processing. This is also what Grinvald, Hubener, and their associates plan to do with their visual‐cortex scans. And there are dozens of other contemporary projects designed to scan portions of the brain and apply the resulting insights to the design of intelligent systems.

Within a region, the brainʹs circuitry is highly repetitive, so only a small portion of a region needs to be fully scanned. The computationally relevant activity of a neuron or group of neurons is sufficiently straightforward that we can understand and model these methods by examining them. Once the structure and topology of the neurons, the organization of the interneuronal wiring, and the sequence of neural firing in a region have been observed, recorded, and analyzed, it becomes feasible to reverse engineer that regionʹs parallel algorithms. After the algorithms of a region are understood, they can be refined and extended prior to being implemented in synthetic neural equivalents.

The methods can certainly be greatly sped up given that electronics is already more than a million times faster than neural circuitry.

We can combine the revealed algorithms with the methods for building intelligent machines that we already understand. We can also discard aspects of human computing that may not be useful in a machine. Of course, weʹll have to be careful that we donʹt throw the baby out with the bathwater.

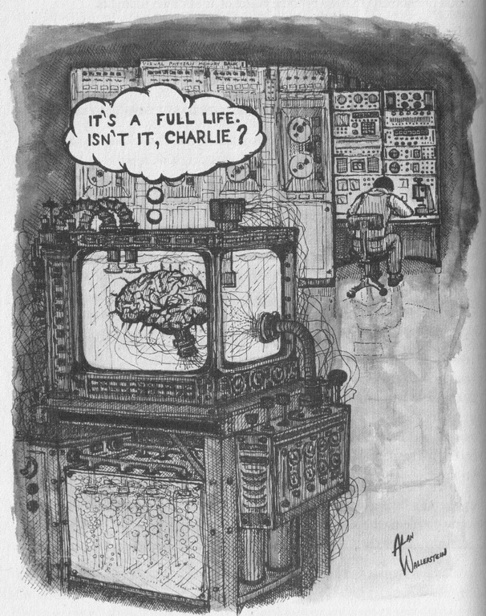

Downloading Your Mind to Your Personal Computer

A more challenging but also ultimately feasible scenario will be to scan someoneʹs brain to map the locations, interconnections, and contents of the somas, axons, dendrites, presynaptic vesicles, and other neural components. Its entire organization could then be re‐created on a neural computer of sufficient capacity, including the contents of its memory.

This is harder in an obvious way than the scanning‐the‐brain‐to‐understand‐it scenario. In the former, we need only sample each region until we understand the salient algorithms. We can then combine those insights with knowledge we already have. In this—scanning the brain to download it—scenario, we need to capture every little detail.

On the other hand, we donʹt need to understand all of it; we need only to literally copy it, connection by connection, synapse by synapse, neurotransmitter by neurotransmitter. It requires us to understand local brain processes, but not necessarily the brainʹs global organization, at least not in full. It is likely that by the time we can do this, we will understand much of it, anyway.

To do this right, we do need to understand what the salient information‐processing mechanisms are. Much of a neuronʹs elaborate structure exists to support its own structural integrity and life processes and does not directly contribute to its handling of information. We know that neuron‐computing processes are based on hundreds of different neurotransmitters and that different neural mechanisms in different regions allow for different types of computing.

The early vision neurons, for example, are good at accentuating sudden color changes to facilitate finding the edges of objects. Hippocampus neurons are likely to have structures for enhancing the long‐term retention of memories. We also know that neurons use a combination of digital and analog computing that needs to be accurately modeled. We need to identify structures capable of quantum computing, if any. All of the key features that affect information processing need to be recognized if we are to copy them accurately.

How well will this work? Of course, like any new technology, it wonʹt be perfect at first, and initial downloads will be somewhat imprecise. Small imperfections wonʹt necessarily be immediately noticeable because people are always changing to some degree. As our understanding of the mechanisms of the brain improves and our ability to accurately and noninvasively scan these features improves, reinstantiating (reinstalling) a personʹs brain should alter a personʹs mind no more than it changes from day to day.

What Will We Find When We Do This?

We have to consider this question on both the objective and subjective levels. ʺObjectiveʺ means everyone except me, so letʹs start with that. Objectively, when we scan someoneʹs brain and reinstantiate their personal mind file into a suitable computing medium, the newly emergent ʺpersonʺ will appear to other observers to have very much the same personality, history, and memory as the person originally scanned. Interacting with the newly instantiated person will feel like interacting with the original person. The new person will claim to be that same old person and will have a memory of having been that person, having grown up in Brooklyn, having walked into a scanner here, and woken up in the machine there. Heʹll say, ʺHey, this technology really works.ʺ

There is the small matter of the ʺnew personʹsʺ body. What kind of body will a reinstantiated personal mind file have: the original human body, an upgraded body, a synthetic body, a nanoengineered body, a virtual body in a virtual environment? This is an important question, which I will discuss in the next chapter.

Subjectively, the question is more subtle and profound. Is this the same consciousness as the person we just scanned? As we saw in chapter 3, there are strong arguments on both sides. The position that fundamentally we are our ʺpatternʺ (because our particles are always changing) would argue that this new person is the same because their patterns are essentially identical. The counter argument, however, is the possible continued existence of the person who was originally scanned. If he—Jack—is still around, he will convincingly claim to represent the continuity of his consciousness. He may not be satisfied to let his mental clone carry on in his stead. Weʹll keep bumping into this issue as we explore the twenty‐first century.

But once over the divide, the new person will certainly think that he was the original person. There will be no ambivalence in his mind as to whether or not he committed suicide when he agreed to be transferred into a new computing substrate leaving his old slow carbon‐based neural‐computing machinery behind. To the extent that he wonders at all whether or not he is really the same person that he thinks he is, heʹll be glad that his old self took the plunge, because otherwise he wouldnʹt exist.

Is he—the newly installed mind—conscious? He certainly will claim to be. And being a lot more capable than his old neural self, heʹll be persuasive and effective in his position. Weʹll believe him. Heʹll get mad if we donʹt.

A Growing Trend

In the second half of the twenty‐first century, there will be a growing trend toward making this leap. Initially, there will be partial porting—replacing aging memory circuits, extending pattern‐recognition and reasoning circuits through neural implants. Ultimately, and well before the twenty‐first century is completed, people will port their entire mind file to the new thinking technology.

There will be nostalgia for our humble carbon‐based roots, but there is nostalgia for vinyl records also. Ultimately, we did copy most of that analog music to the more flexible and capable world of transferable digital information. The leap to port our minds to a more capable computing medium will happen gradually but inexorably nonetheless.

As we port ourselves, we will also vastly extend ourselves. Remember that $1,000 of computing in 2060 will have the computational capacity of a trillion human brains. So we might as well multiply memory a trillion fold, greatly extend recognition and reasoning abilities, and plug ourselves into the pervasive wireless‐communications network.

While we are at it, we can add all human knowledge—as a readily accessible internal database as well as already processed and learned knowledge using the human type of distributed understanding.

THE AGE OF NEURAL IMPLANTS HAS ALREADY ARRIVED

The patients are wheeled in on stretchers. Suffering from an advanced stage of Parkinson's disease, they are like statues, their muscles frozen, their bodies and faces totally immobile. Then in a dramatic demonstration at a French clinic, the doctor in charge throws an electrical switch. The patients suddenly come to life, get up, walk around, and calmly and expressively describe how they have overcome their debilitating symptoms. This is the dramatic result of a new neural implant therapy that is approved in Europe, and still awaits FDA approval in the United States.

The diminished levels of the neurotransmitter dopamine in a Parkinson's patient cause overactivation of two tiny regions in the brain: the ventral posterior nucleus and the subthalamic nucleus. This overactivation in turn causes the slowness, stiffness, and gait difficulties of the disease, and ultimately results in total paralysis and death. Dr. A. L. Benebid, a French physician at Fourier University in Grenoble, discovered that stimulating these regions with a permanently implanted electrode paradoxically inhibits these overactive regions and reverses the symptoms. The electrodes are wired to a small electronic control unit placed in the patient's chest. Through radio signals, the unit can be programmed, even turned on and off. When switched off, the symptoms immediately return. The treatment has the promise of controlling the most devastating symptoms of the disease. [24]

Similar approaches have been used with other brain regions. For example, by implanting an electrode in the ventral lateral thalamus, the tremors associated with cerebral palsy, multiple sclerosis, and other tremor-causing conditions can be suppressed.

"We used to treat the brain like soup, adding chemicals that enhance or suppress certain neurotransmitters," says Rick Trosch, one of the American physicians helping to perfect "deep brain stimulation" therapies. "Now we're treating it like circuitry." [25]

Increasingly, we are starting to combat cognitive and sensory afflictions by treating the brain and nervous system like the complex computational system that it is. Cochlear implants together with electronic speech processors perform frequency analysis of sound waves, similar to that performed by the inner ear. About 10 percent of the formerly deaf persons who have received this neural replacement device are now able to hear and understand voices well enough that they can hold conversations using a normal telephone.
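The frequency analysis mentioned above can be illustrated with a short Python sketch. It is not a model of any actual speech processor: it uses a plain discrete Fourier transform to measure how much of a sound's energy falls into each of a few frequency bands. The sample rate, band edges, and function names are assumptions chosen for the example.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Magnitude of each frequency bin from 0 up to half the sample rate."""
    n = len(samples)
    return [
        abs(sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n)
                for t in range(n)))
        for k in range(n // 2)
    ]


def band_energies(samples, sample_rate, band_edges_hz):
    """Sum the spectral energy that falls between consecutive band edges."""
    n = len(samples)
    energies = [0.0] * (len(band_edges_hz) - 1)
    for k, magnitude in enumerate(dft_magnitudes(samples)):
        frequency = k * sample_rate / n
        for i in range(len(energies)):
            if band_edges_hz[i] <= frequency < band_edges_hz[i + 1]:
                energies[i] += magnitude ** 2
                break
    return energies


# A pure 1,000 Hz tone sampled at 8,000 samples per second.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 1000 * t / sample_rate) for t in range(256)]

# Three illustrative bands: low, middle, and high.
edges = [0, 500, 1500, 4000]
energies = band_energies(tone, sample_rate, edges)
print(energies.index(max(energies)))
```

For this tone nearly all of the energy lands in the middle band, the one spanning 500 to 1,500 Hz. Splitting sound into bands in this way is loosely analogous to the way different positions along the inner ear respond to different frequencies.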

Dr. Joseph Rizzo, a neurologist and ophthalmologist at Harvard Medical School, and his colleagues have developed an experimental retina implant. Rizzo's neural implant is a small solar-powered computer that communicates to the optic nerve. The user wears special glasses with tiny television cameras that communicate to the implanted computer by laser signal. [26]

Researchers at Germany's Max Planck Institute for Biochemistry have developed special silicon devices that can communicate with neurons in both directions. Directly stimulating neurons with an electrical current is not the ideal approach since it can cause corrosion to the electrodes and create chemical by-products that damage the cells. In contrast, the Max Planck Institute devices are capable of triggering an adjacent neuron to fire without a direct electrical link. The Institute scientists demonstrated their invention by controlling the movements of a living leech from their computer.

Going in the opposite direction—from neurons to electronics—is a device called a "neuron transistor," [27] which can detect the firing of a neuron. The scientists hope to apply both technologies to the control of artificial human limbs by connecting spinal nerves to computerized prostheses. The Institute's Peter Fromherz says, "These two devices join the two worlds of information processing: the silicon world of the computer and the water world of the brain."

Neurobiologist Ted Berger and his colleagues at Hedco Neurosciences and Engineering have built integrated circuits that precisely match the properties and information processing of groups of animal neurons. The chips exactly mimic the digital and analog characteristics of the neurons they have analyzed. They are currently scaling up their technology to systems with hundreds of neurons. [28] Professor Carver Mead and his colleagues at the California Institute of Technology have also built digital-analog integrated circuits that match the processing of mammalian neural circuits comprising hundreds of neurons. [29]

The age of neural implants is under way, albeit at an early stage. Directly enhancing the information processing of our brain with synthetic circuits is focusing at first on correcting the glaring defects caused by neurological and sensory diseases and disabilities. Ultimately we will all find the benefits of extending our abilities through neural implants difficult to resist.

The New Mortality

Actually there wonʹt be mortality by the end of the twenty‐first century. Not in the sense that we have known it. Not if you take advantage of the twenty‐first centuryʹs brain‐porting technology. Up until now, our mortality was tied to the longevity of our hardware. When the hardware crashed, that was it. For many of our forebears, the hardware gradually deteriorated before it disintegrated. Yeats lamented our dependence on a physical self that was ʺbut a paltry thing, a tattered coat upon a stick.ʺ [30] As we cross the divide to instantiate ourselves into our computational technology, our identity will be based on our evolving mind file. We will be software, not hardware.

And evolve it will. Today, our software cannot grow. It is stuck in a brain of a mere 100 trillion connections and synapses. But when the hardware is trillions of times more capable, there is no reason for our minds to stay so small. They can and will grow.

As software, our mortality will no longer be dependent on the survival of the computing circuitry. There will still be hardware and bodies, but the essence of our identity will switch to the permanence of our software. Just as, today, we donʹt throw our files away when we change personal computers—we transfer them, at least the ones we want to keep. So, too, we wonʹt throw our mind file away when we periodically port ourselves to the latest, ever more capable, ʺpersonalʺ computer. Of course, computers wonʹt be the discrete objects they are today. They will be deeply embedded in our bodies, brains, and environment. Our identity and survival will ultimately become independent of the hardware and its survival.

Our immortality will be a matter of being sufficiently careful to make frequent backups. If weʹre careless about this, weʹll have to load an old backup copy and be doomed to repeat our recent past.

‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐

LETʹS JUMP TO THE OTHER SIDE OF THIS COMING CENTURY. YOU SAID THAT BY 2099 A PENNY OF COMPUTING WILL BE EQUAL TO A BILLION TIMES THE COMPUTING POWER OF ALL HUMAN BRAINS COMBINED. SOUNDS LIKE HUMAN THINKING IS GOING TO BE PRETTY TRIVIAL.

Unassisted, thatʹs true.

SO HOW WILL WE HUMAN BEINGS FARE IN THE MIDST OF SUCH COMPETITION?

First, we have to recognize that the more powerful technology—the technologically more sophisticated civilization—always wins. That appears to be what happened when our Homo sapiens sapiens subspecies met the Homo sapiens neanderthalensis and other nonsurviving subspecies of Homo sapiens. That is what happened when the more technologically advanced Europeans encountered the indigenous peoples of the Americas. This is happening today as the more advanced technology is the key determinant of economic and military power.

SO WEʹRE GOING TO BE SLAVES TO THESE SMART MACHINES?

Slavery is not a fruitful economic system to either side in an age of intellect. We would have no value as slaves to machines. Rather, the relationship is starting out the other way.

ITʹS TRUE THAT MY PERSONAL COMPUTER DOES WHAT I ASK IT TO DO—SOMETIMES! MAYBE I SHOULD START BEING NICER TO IT.

No, it doesnʹt care how you treat it, not yet. But ultimately our native thinking capacities will be no match for the all-encompassing technology weʹre creating.

MAYBE WE SHOULD STOP CREATING IT.

We canʹt stop. The Law of Accelerating Returns forbids it! Itʹs the only way to keep evolution going at an accelerating pace.

HEY, CALM DOWN. ITʹS FINE WITH ME IF EVOLUTION SLOWS DOWN A TAD. SINCE WHEN HAVE WE ADOPTED YOUR ACCELERATION LAW AS THE LAW OF THE LAND?

We donʹt have to. Stopping computer technology, or any fruitful technology, would mean repealing basic realities of economic competition, not to mention our quest for knowledge. Itʹs not going to happen. Furthermore, the road weʹre going down is a road paved with gold. Itʹs full of benefits that weʹre never going to resist—continued growth in economic prosperity, better health, more intense communication, more effective education, more engaging entertainment, better sex.

UNTIL THE COMPUTERS TAKE OVER.

Look, this is not an alien invasion. Although it sounds unsettling, the advent of machines with vast intelligence is not necessarily a bad thing.

I GUESS IF WE CANʹT BEAT THEM, WEʹLL HAVE TO JOIN THEM.

Thatʹs exactly what weʹre going to do. Computers started out as extensions of our minds, and they will end up extending our minds. Machines are already an integral part of our civilization, and the sensual and spiritual machines of the twenty‐first century will be an even more intimate part of our civilization.

OKAY, IN TERMS OF EXTENDING MY MIND, LETʹS GET BACK TO IMPLANTS FOR MY FRENCH LIT CLASS. IS THIS GOING TO BE LIKE IʹVE READ THIS STUFF? OR IS IT JUST GOING TO BE LIKE A SMART PERSONAL COMPUTER THAT I CAN COMMUNICATE WITH QUICKLY BECAUSE IT HAPPENS TO BE LOCATED IN MY HEAD?

Thatʹs a key question, and I think it will be controversial. It gets back to the issue of consciousness. Some people will feel that what goes in their neural implants is indeed subsumed by their consciousness. Others will feel that it remains outside of their sense of self. Ultimately, I think that we will regard the mental activity of the implants as part of our own thinking. Consider that even without implants, ideas and thoughts are constantly popping into our heads, and we have little idea of where they came from, or how they got there. We nonetheless consider all the mental phenomena that we become aware of as our own thoughts.

SO IʹLL BE ABLE TO DOWNLOAD MEMORIES OF EXPERIENCES IʹVE NEVER HAD?

Yes, but someone has probably had the experience. So why not have the ability to share it?

I SUPPOSE FOR SOME EXPERIENCES, IT MIGHT BE SAFER TO JUST DOWNLOAD THE MEMORIES OF IT.

Less time‐consuming also.

DO YOU REALLY THINK THAT SCANNING A FROZEN BRAIN IS FEASIBLE TODAY?

Sure, just stick your head in my freezer here.

GEE, ARE YOU SURE THIS IS SAFE?

Absolutely.

WELL, I THINK IʹLL WAIT FOR FDA APPROVAL.

Okay, then youʹll have to wait a long time.

THINKING AHEAD, I STILL HAVE THIS SENSE THAT WEʹRE DOOMED. I MEAN, I CAN UNDERSTAND HOW A NEWLY INSTANTIATED MIND, AS YOU PUT IT, WILL BE HAPPY THAT SHE WAS CREATED AND WILL THINK THAT SHE HAD BEEN ME PRIOR TO MY HAVING BEEN SCANNED AND IS STILL ME IN A SHINY NEW BRAIN. SHEʹLL HAVE NO REGRETS AND WILL BE ON THE ʺOTHER SIDE.ʺ BUT I DONʹT SEE HOW I CAN GET ACROSS THE HUMAN‐MACHINE DIVIDE. AS YOU POINTED OUT, IF IʹM SCANNED, THAT NEW ME ISNʹT ME BECAUSE IʹM STILL HERE IN MY OLD BRAIN.

Yes, thereʹs a little glitch in this regard. But Iʹm sure weʹll figure how to solve this thorny problem with a little more consideration.

‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐

CHAPTER SEVEN

. . . AND BODIES

THE IMPORTANCE OF HAVING A BODY

Letʹs start by taking a quick look at my readerʹs diary.

NOW WAIT JUST A MINUTE.

Is there a problem?

FIRST OF ALL, I HAVE A NAME.

Yes, it would be a good idea to introduce you by name at this point.

IʹM MOLLY.

Thank you, is there something else?

YES. IʹM NOT SURE IʹM PREPARED TO SHARE MY DIARY WITH YOUR OTHER READERS.

Most writers donʹt let their readers participate at all. Anyway, youʹre my creation, so I should be able to share your personal reflections if it serves a purpose here.

I MAY BE YOUR CREATION, BUT REMEMBER WHAT YOU SAID IN CHAPTER 2 ABOUT ONEʹS CREATIONS EVOLVING TO SURPASS THEIR CREATORS.

True enough, so maybe I should be more sensitive to your needs.

GOOD IDEA—LETʹS START BY ALLOWING ME TO VET THOSE ENTRIES YOUʹRE SELECTING.

Very well. Here are some extracts from Mollyʹs diary, suitably edited:

Iʹve switched to nonfat muffins. This has two distinct benefits. First of all, they have half the number of calories. Secondly, they taste awful. That way Iʹm less tempted to eat them. But I wish people would stop shoving food in my face. . . . Iʹm going to have trouble at this potluck dorm party tomorrow. I feel like I have to try everything, and I kind of lose track of what Iʹm eating.

Iʹve got to drop at least half a dress size. A full size would be better. Then I could breathe more easily in this new dress. That reminds me, I should stop at the health club on my way home. Maybe that new trainer will notice me. Actually I did catch him looking at me, but I was being kind of spastic with those new machines, and he looked the other way. . . . Iʹm not crazy about the neighborhood this place is in, I donʹt really feel safe walking back to my car when itʹs late. Okay, hereʹs an idea—Iʹll ask that trainer—got to get his name—to walk me to my car. Always a good idea to be safe, right?

. . . Iʹm a little nervous about this bump on my toe. But the doctor said that toe bumps are almost always benign. But he still wants to remove it and send it to a lab. He said I wonʹt feel a thing. Except, of course, for the Novocain—I hate needles!

. . . It was a little strange seeing my old boyfriend, but Iʹm glad weʹre still friends. It did feel good when he gave me a hug . . .

Thank you, Molly. Now consider: How many of Mollyʹs entries would make sense if she didnʹt have a body? Most of Mollyʹs mental activities are directed toward her body and its survival, security, nutrition, image, not to mention related issues of affection, sexuality, and reproduction. But Molly is not unique in this regard. I invite my other readers to look at their own diaries. And if you donʹt have one, consider what you would write in it if you did. How many of your entries would make sense if you didnʹt have a body?

Our bodies are important in many ways. Most of those goals I spoke about at the beginning of the previous chapter—the ones we attempt to solve using our intelligence—have to do with our bodies: protecting them, providing them with fuel, making them attractive, making them feel good, providing for their myriad needs, not to mention desires.

Some philosophers—professional artificial‐intelligence critic Hubert Dreyfus, for one—maintain that achieving human‐level intelligence is impossible without a body. [1] Certainly, if weʹre going to port a humanʹs mind to a new computational medium, weʹd better provide a body. A disembodied mind will quickly get depressed.

‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐‐

TWENTY‐FIRST CENTURY BODIES

What makes a soul? And if machines ever have souls what will be the equivalent of psychoactive drugs? Of pain? Of the physical/emotional high I get from having a clean office?

—Esther Dyson

What a strange machine man is. You fill him with bread, wine, fish, and radishes, and out come sighs, laughter and dreams.

—Nikos Kazantzakis

So what kind of bodies will we provide for our twenty‐first‐century machines? Later on, the question will become: What sort of bodies will they provide for themselves?

Letʹs start with the human body: itʹs the body weʹre used to. It evolved along with its brain, so the human brain is well suited to provide for its needs. The human brain and body kind of go together.

The likely scenario is that both body and brain will evolve together, will become enhanced together, will migrate together toward new modalities and materials. As I discussed in the previous chapter, porting our brains to new computational mechanisms will not happen all at once. We will enhance our brains gradually through direct connection with machine intelligence until such time that the essence of our thinking has fully migrated to the far more capable and reliable new machinery. Again, if we find this notion troublesome, a lot of this uneasiness has to do with our concept of the word machine. Keep in mind that our concept of this word will evolve along with our minds.

In terms of transforming our bodies, we are already further along in this process than we are in advancing our minds. We have titanium devices to replace our jaws, skulls, and hips. We have artificial skin of various kinds. We have artificial heart valves. We have synthetic vessels to replace arteries and veins, along with expandable stents to provide structural support for weak natural vessels. We have artificial arms, legs, feet, and spinal implants. We have all kinds of joints: jaws, hips, knees, shoulders, elbows, wrists, fingers, and toes. We have implants to control our bladders. We are developing machines—some made of artificial materials, others combining new materials with cultured cells—that will ultimately be able to replace organs such as the liver and pancreas. We have penile prostheses with little pumps to simulate erections. And we have long had implants for teeth and breasts.

Of course, the notion of completely rebuilding our bodies with synthetic materials, even if superior in certain ways, is not immediately compelling. We like the softness of our bodies. We like bodies to be supple and cuddly and warm. And not a superficial warmth, but the deep and intimate heat drawn from their trillions of living cells.

So letʹs consider enhancing our bodies cell by cell. We have started down that road as well. We have written down a portion of the entire genetic code that describes our cells, and weʹve started the process of understanding it. In the near future, we hope to design genetic therapies to improve our cells, to correct such defects as the insulin resistance associated with Type II diabetes, and the loss of control over self‐replication associated with cancer. An early method of delivering gene therapies was to infect a patient with special viruses containing the corrective DNA. A more effective method developed by Dr. Clifford Steer at the University of Minnesota utilizes RNA molecules to deliver the desired DNA directly. [2] High on researchersʹ list for future cellular improvements through genetic engineering is to counteract our genes for cellular suicide. These strands of genetic beads, called telomeres, get shorter every time a cell divides. When the telomere beads count down to zero, a cell is no longer able to divide, and destroys itself. Thereʹs a long list of diseases, aging conditions, and limitations that we intend to address by altering the genetic code that controls our cells.

But there is only so far we can go with this approach. Our DNA‐based cells depend on protein synthesis, and while protein is a marvelously diverse substance, it suffers from severe limitations. Hans Moravec, one of the first serious thinkers to realize the potential of twenty‐first‐century machines, points out that ʺprotein is not an ideal material. It is stable only in a narrow temperature and pressure range, is very sensitive to radiation, and rules out many construction techniques and components. . . . A genetically engineered superhuman would be just a second‐rate kind of robot, designed under the handicap that its construction can only be by DNA‐guided protein synthesis. Only in the eyes of human chauvinists would it have an advantage.ʺ [3]

One of evolutionʹs ideas that is worth keeping, however, is building our bodies from cells. This approach would retain many of our bodiesʹ beneficial qualities: redundancy, which provides a high degree of reliability; the ability to regenerate and repair themselves; and softness and warmth. But just as we will eventually relinquish the extremely slow speed of our neurons, we will ultimately be forced to abandon the other restrictions of our protein‐based chemistry.

To reinvent our cells, we look to one of the twenty‐first centuryʹs primary technologies: nanotechnology.

NANOTECHNOLOGY: REBUILDING THE WORLD, ATOM BY ATOM

The problems of chemistry and biology can be greatly helped if . . . doing things on an atomic level is ultimately developed—a development which I think cannot be avoided.

—Richard Feynman, 1959

Suppose someone claimed to have a microscopically exact replica (in marble, even) of Michelangeloʹs David in his home. When you go to see this marvel, you find a twenty‐foot‐tall, roughly rectilinear hunk of pure white marble standing in his living room. ʺI havenʹt gotten around to unpacking it yet,ʺ he says, ʺbut I know itʹs in there.ʺ

—Douglas Hofstadter

What advantages will nanotoasters have over conventional macroscopic toaster technology? First, the savings in counter space will be substantial. One philosophical point that must not be overlooked is that the creation of the worldʹs smallest toaster implies the existence of the worldʹs smallest slice of bread. In the quantum limit we must necessarily encounter fundamental toast particles, which we designate here as croutons.

—Jim Cser, Annals of Improbable Research, edited by Marc Abrahams

Humankindʹs first tools were found objects: sticks used to dig up roots and stones used to break open nuts. It took our forebears tens of thousands of years to invent a sharp blade. Today we build machines with finely designed intricate mechanisms, but viewed on an atomic scale, our technology is still crude. ʺCasting, grinding, milling, and even lithography move atoms in great thundering statistical herds,ʺ says Ralph Merkle, a leading nanotechnology theorist at Xeroxʹs Palo Alto Research Center. He adds that current manufacturing methods are ʺlike trying to make things out of Legos with boxing gloves on. . . . In the future, nanotechnology will let us take off the boxing gloves.ʺ [4]

Nanotechnology is technology built on the atomic level: building machines one atom at a time. ʺNanoʺ refers to a billionth of a meter, which is the width of five carbon atoms. We have one existence proof of the feasibility of nanotechnology: life on Earth. Little machines in our cells called ribosomes build organisms such as humans one molecule, that is, one amino acid, at a time, following digital templates coded in another molecule called DNA. Life on Earth has mastered the ultimate goal of nanotechnology, which is self‐replication.

But as mentioned above, Earthly life is limited by the particular molecular building block it has selected. Just as our human‐created computational technology will ultimately exceed the capacity of natural computation (electronic circuits are already millions of times faster than human neural circuits), our twenty‐first‐century physical technology will also greatly exceed the capabilities of the amino acid‐based nanotechnology of the natural world.

The concept of building machines atom by atom was first described in a 1959 talk at Caltech titled ʺThereʹs Plenty of Room at the Bottom,ʺ by physicist Richard Feynman, the same guy who first suggested the possibility of quantum computing. [5] The idea was developed in some detail by Eric Drexler twenty years later in his book Engines of Creation. [6] The book actually inspired the cryonics movement of the 1980s, in which people had their heads (with or without bodies) frozen in the hope that a future time would possess the molecule‐scale technology to overcome their mortal diseases, as well as undo the effects of freezing and defrosting. Whether a future generation would be motivated to revive all these frozen brains was another matter.

After publication of Engines of Creation, the response to Drexlerʹs ideas was skeptical and he had difficulty filling out his MIT Ph.D. committee despite Marvin Minskyʹs agreement to supervise it. Drexlerʹs dissertation, published in 1992 as a book titled Nanosystems: Molecular Machinery, Manufacturing, and Computation, provided a comprehensive proof of concept, including detailed analyses and specific designs. [7] A year later, the first nanotechnology conference attracted only a few dozen researchers. The fifth annual conference, held in December 1997, boasted 350 scientists who were far more confident of the practicality of their tiny projects. Nanothinc, an industry think tank, estimated in 1997 that the field already produces $5 billion in annual revenues for nanotechnology‐related technologies, including micromachines, microfabrication techniques, nanolithography, nanoscale microscopes, and others. This figure has been more than doubling each year. [8]

The Age of Nanotubes

One key building material for tiny machines is, again, nanotubes. Although built on an atomic scale, the hexagonal patterns of carbon atoms are extremely strong and durable. ʺYou can do anything you damn well want with these tubes and theyʹll just keep on truckinʹ,ʺ says Richard Smalley, one of the chemists who received the Nobel Prize for discovering the buckyball molecule. [9] A car made of nanotubes would be stronger and more stable than a car made with steel, but would weigh only fifty pounds. A spacecraft made of nanotubes could be of the size and strength of the U.S. space shuttle, but weigh no more than a conventional car. Nanotubes handle heat extremely well, far better than the fragile amino acids that people are built out of. They can be assembled into all kinds of shapes: wirelike strands, sturdy girders, gears, et cetera. Nanotubes are formed of carbon atoms, which are in plentiful supply in the natural world.

As I mentioned earlier, the same nanotubes can be used for extremely efficient computation, so both the structural and computational technology of the twenty‐first century will likely be constructed from the same stuff. In fact, because one material can serve both purposes, future nanomachines can have their brains distributed throughout their bodies.